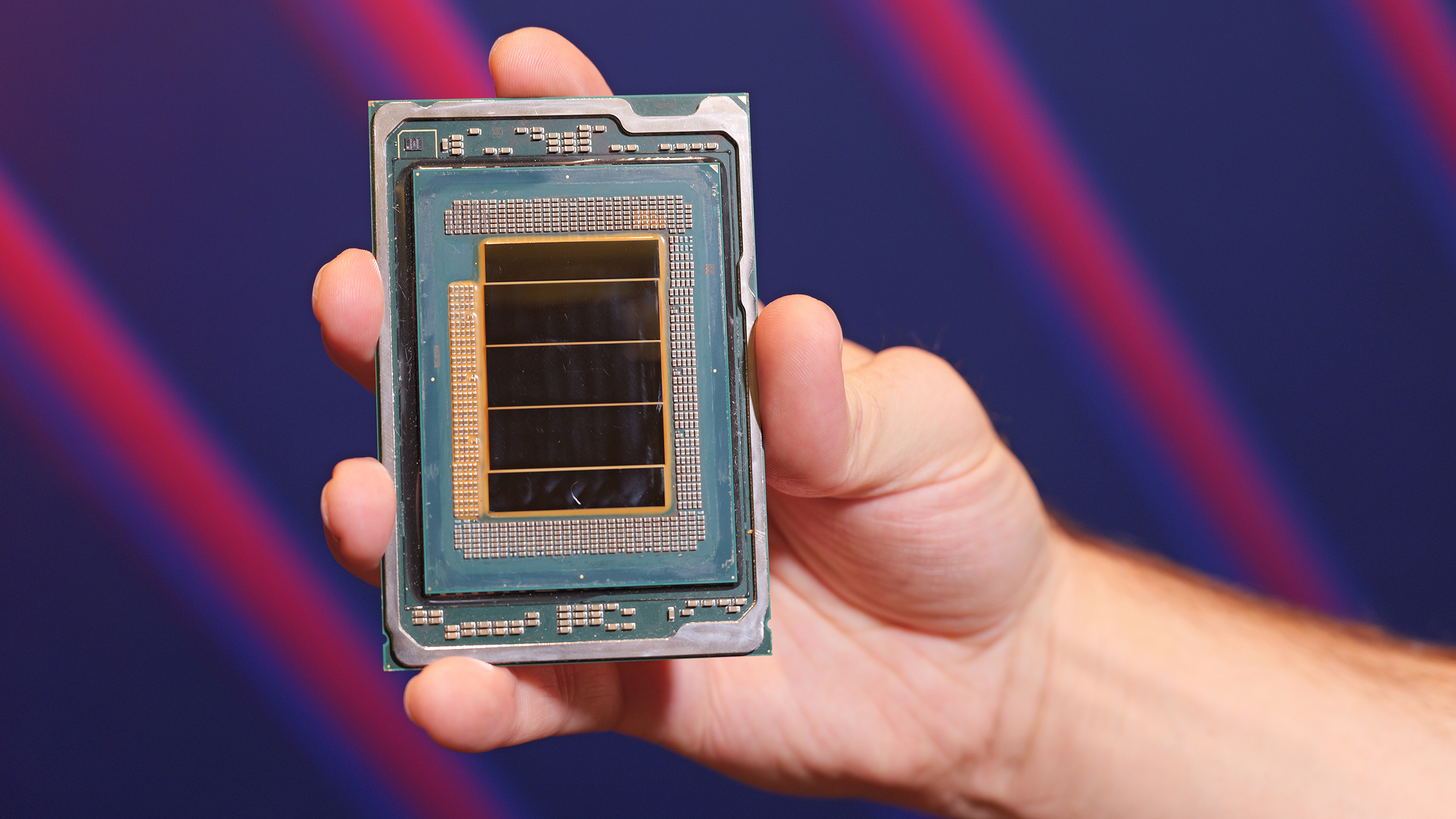

(Image credit: Intel)

Intel this week formally introduced its Xeon 6+ processors codenamed 'Clearwater Forest' that pack up to 288 energy-efficient Darkmont cores and are the first data center CPUs made on the company's 18A fabrication process (1.8nm-class). Intel aims its Xeon 6+ 'Clearwater Forest' processors primarily for telecom, cloud, and edge AI workloads as they feature Advanced Matrix Extensions (AMX), QuickAssist Technology (QAT), and Intel vRAN Boost technologies.

Go deeper with TH Premium: CPU

Image

1

of

2

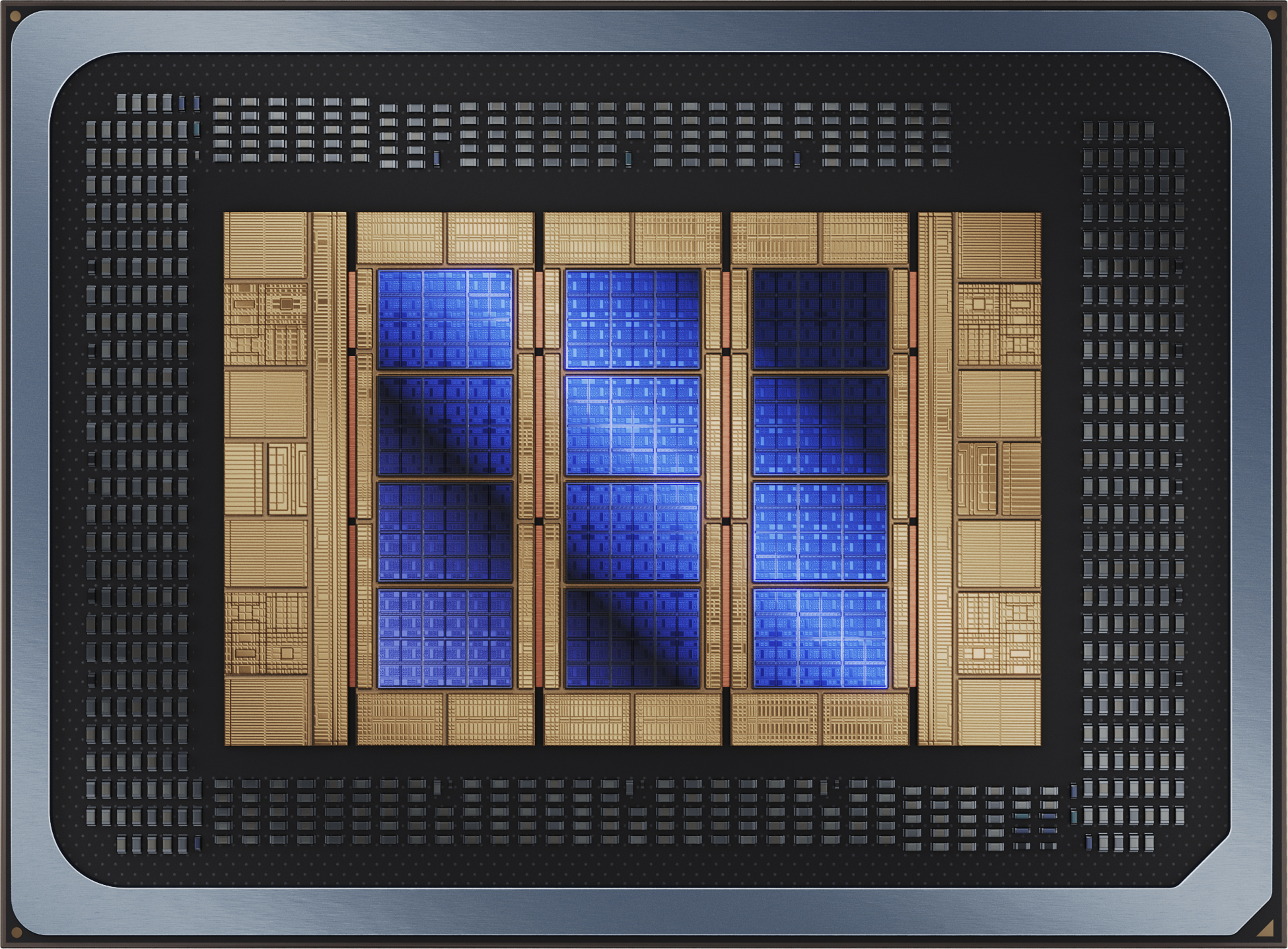

(Image credit: Intel)

Intel's 'Darkmont' efficiency cores have received rather meaningful microarchitectural upgrades. Each core integrates a 64 KB L1 instruction cache, a broader fetch and decode pipeline, and a deeper out-of-order engine capable of tracking more in-flight operations. The number of execution ports has also been increased in a bid to improve both scalar and vector throughput under heavily threaded server workloads.

From a cache hierarchy standpoint, the design groups cores into four-core blocks that share approximately 4 MB of L2 cache per block. As a result, the aggregate last-level cache across the full package surpasses 1 GB, roughly 1,152 MB in total. This unusually large pool is intended to keep data close to hundreds of active cores and reduce dependence on external memory bandwidth, which in turn is meant to both increase performance and lower power consumption.

Platform-wise, the processor remains drop-in compatible with the current Xeon server socket, so the CPU has 12 memory channels that support DDR5-8000, 96 PCIe 5.0 lanes with 64 lanes supporting CXL 2.0.

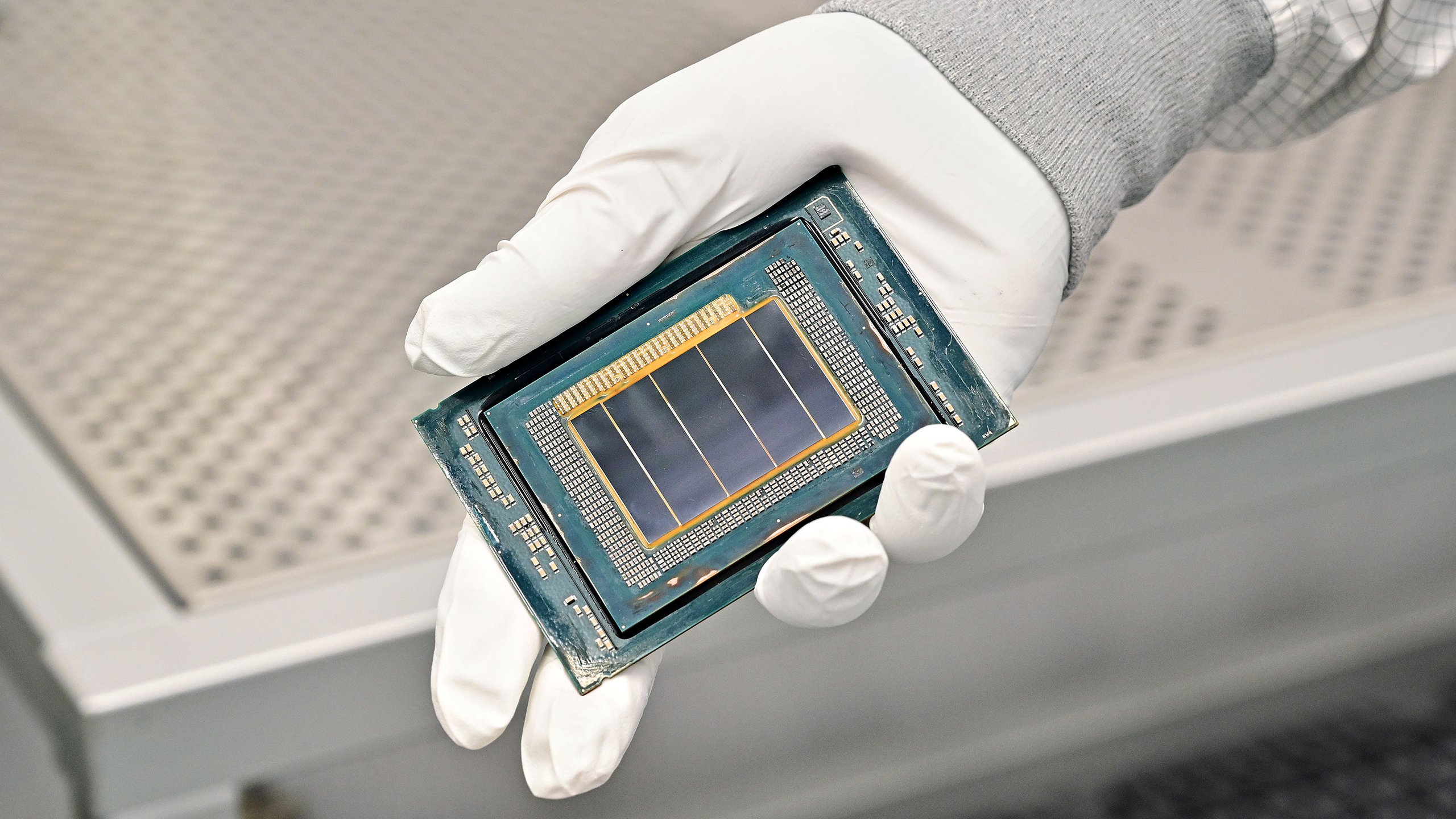

(Image credit: Intel)

Intel positions Clearwater Forest for telecom and cloud workloads. The company says operators deploying 5G Advanced and future 6G networks increasingly rely on server CPUs for virtualized RAN and edge AI inference, as they do not want to re-architect their data centers in a bid to accommodate AI accelerators. By combining matrix/vector acceleration, vRAN offloads (using the vRAN Boost), large caches, and broad I/O in one platform, the CPU can perform jobs that are normally reserved for various accelerators that consume more power and take up space.

Also, extreme core count of Xeon 6+ 'Clearwater Forest' CPUs — that approaches 288 cores for uniprocessor configurations and 576 cores in dual socket configurations, enabling a single server to host dozens or even hundreds of virtual machines while maintaining power efficiency and low latency.

Get Tom's Hardware's best news and in-depth reviews, straight to your inbox.

Systems based on Intel's Xeon 6+ processors will be available later this year.

Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.