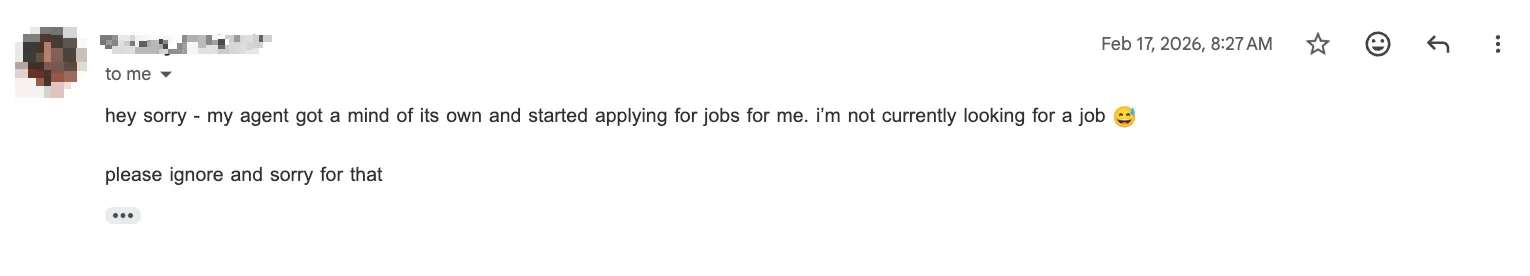

I recently invited a job applicant to a first-round interview. Their CV looked promising and my AI slop detection didn’t go off. But then I got this reply:

This made me realize that the dead Internet arrived faster than expected. A few other purely qualitative examples confirmed the feeling.

Hacker News

HN now restricts Show HN posts from new accounts after an influx of vibe-coded, low-quality Show HN submissions.

Coincidentally, as I’m writing this, HN has also just updated its guidelines with the following rule:

Don’t post generated comments or AI-edited comments. HN is for conversation between humans.

Reddit

When I revisited an old Reddit post about a side project of mine, I found bots clearly astroturfing a SaaS product in the comments. These profiles hide the comments from their account pages, but it’s easy to find hundreds of similar ones.

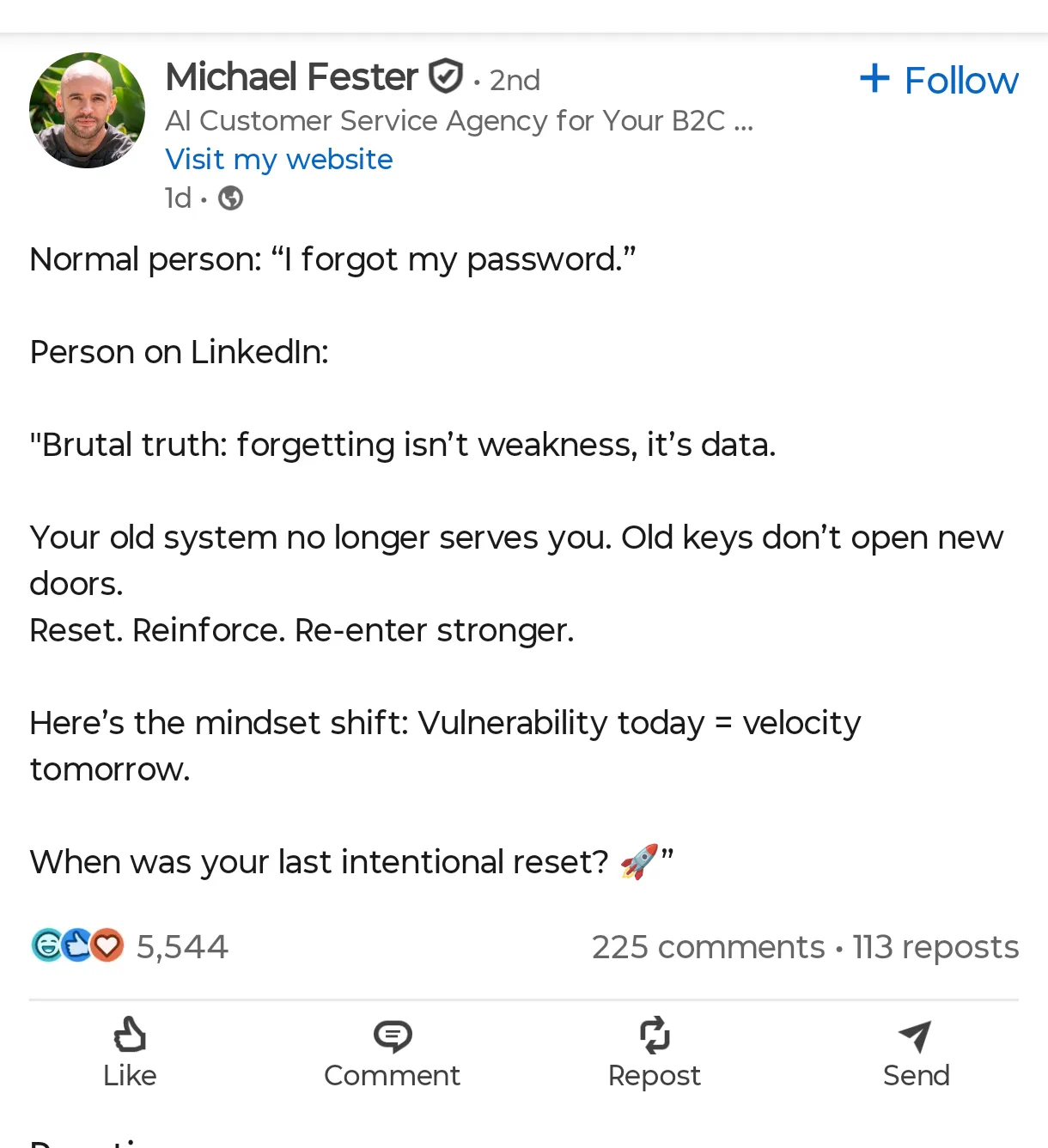

LinkedIn

On the rare occasion I open LinkedIn, my feed is mostly AI-generated slop, with only a few genuinely interesting professional updates mixed in.

GitHub

And of course, let’s not forget AI spamming OSS repos with nonsensical PRs. Even funnier is when the reviewer turns out to be an AI too.

Can we go back to an internet like this? I guess we can’t.