A few months ago, J. D. Vance, sitting vice president of the United States, gave an interview to Ross Douthat of the New York Times. During that interview, Vance and Douthat had an interesting exchange:

Douthat: How much do you worry about the potential downsides of AI? Not even on the apocalyptic scale, but on the scale of the way human beings respond to a sense of their own obsolescence? These kinds of things.

Vance: So, one, on the obsolescence point, I think the history of tech and innovation is that while it does cause job disruptions, it more often facilitates human productivity as opposed to replacing human workers. And the example I always give is the bank teller in the 1970s. There were very stark predictions of thousands, hundreds of thousands of bank tellers going out of a job. Poverty and commiseration.

What actually happens is we have more bank tellers today than we did when the ATM was created, but they’re doing slightly different work. More productive. They have pretty good wages relative to other folks in the economy.

I tend to think that is how this innovation happens.

There are two interesting things about what Vance said, both relating to the example that he chose about bank tellers and ATMs.

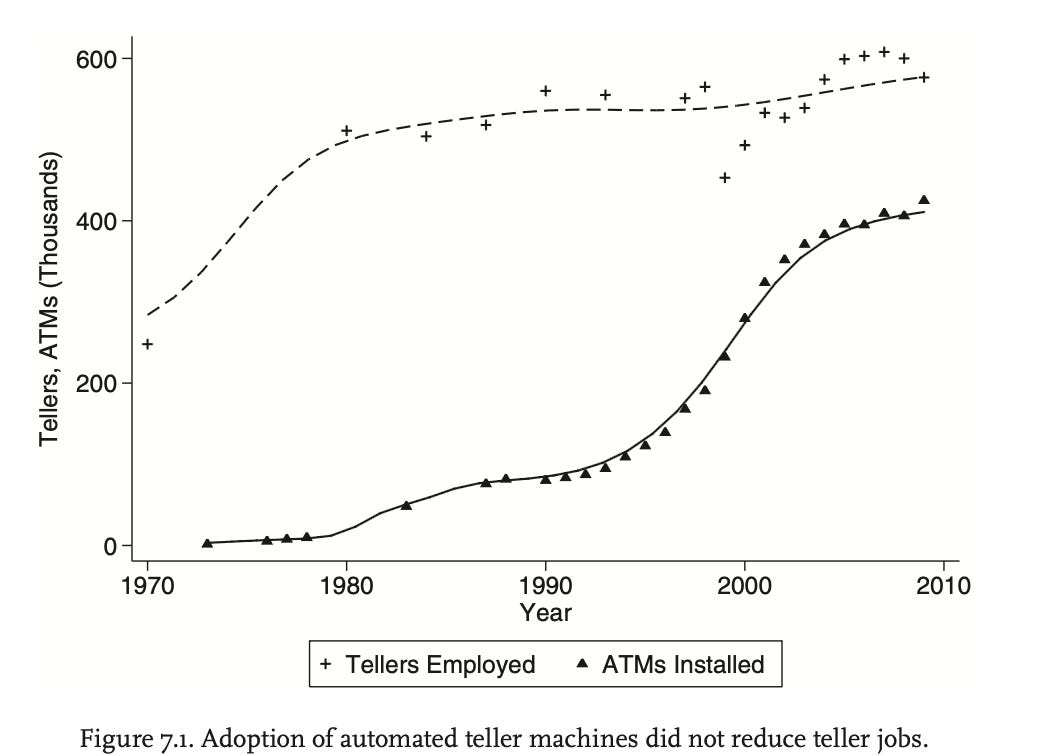

The first thing is what it tells us about who J. D. Vance is. The bank teller story—how ATMs were predicted to increase bank teller unemployment, but in fact did not—isn’t a story you’ll hear from politicians; in fact, for a long time, Barack Obama would claim, incorrectly, that ATMs had decreased the number of bank tellers, in order to suggest that the elevated unemployment rate during his presidency was due to productivity gains from technology. I’ve never heard a politician cite the bank teller story before: but I have seen the bank teller story cited in a lot of blogs. I’ve seen it cited, for example, by Scott Alexander and Matt Yglesias and Freddie deBoer; and I’ve heard it, upstream of the humble bloggers, from such fine economists as Daron Acemoglu and David Autor. The story of how ATMs didn’t automate bank tellers is, indeed, something of a minor parable of the economics profession. You can see it encapsulated in this wonderful graph from the economist James Bessen:

From James Bessen, Learning by Doing (2015)

So Vance’s choice of example tells us the same thing that his appearance on the Joe Rogan Experience did, which is that J. D. Vance—however much he might like to hide it—really, really loves reading blogs.

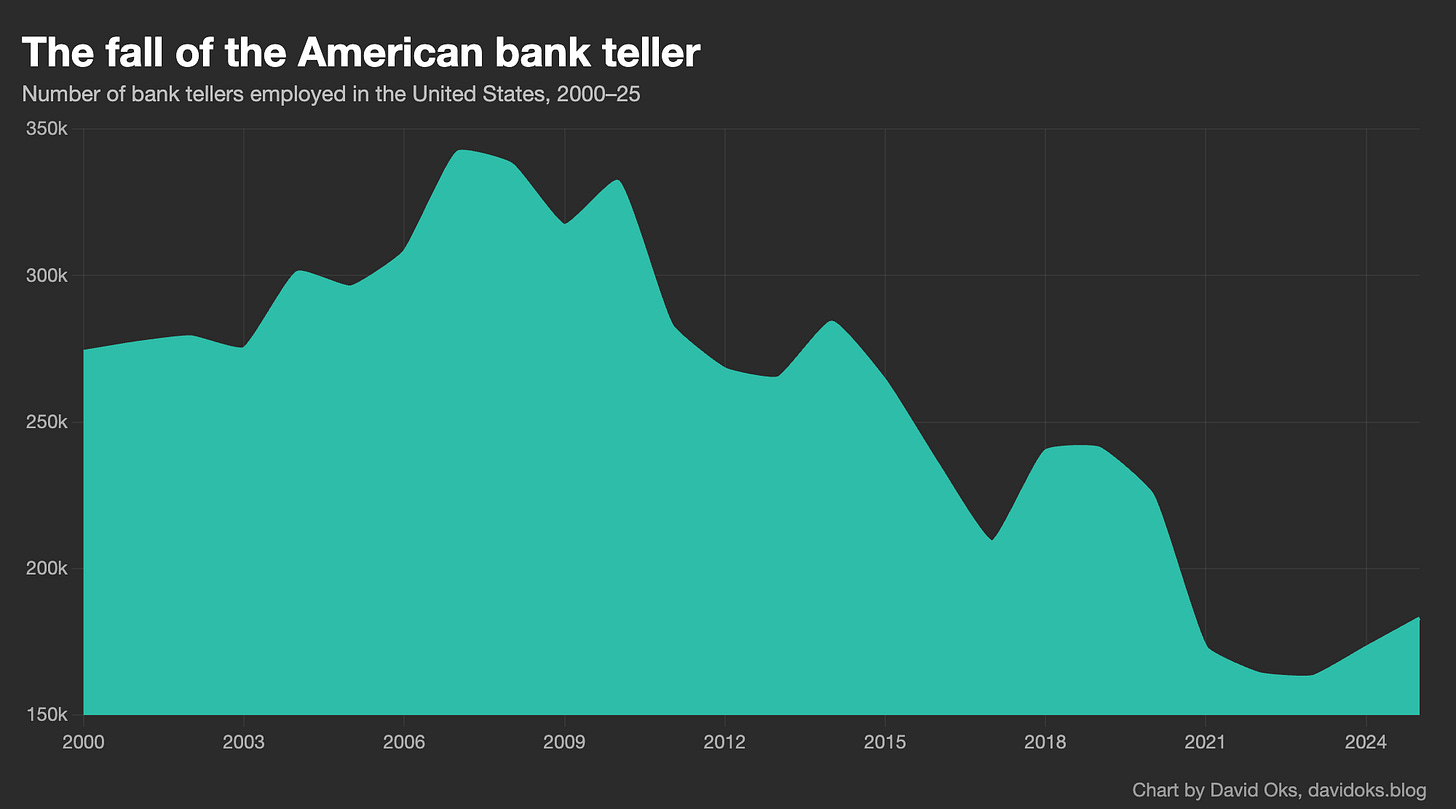

But the other thing about the bank teller story that Vance cites is that it’s wrong. We do not, contrary to what Vance claims, have “more bank tellers today than we did when the ATM was created”: we in fact have far fewer. The story he tells Douthat might have been true in 2000 or 2005, but it hasn’t been true for years. Bank teller employment has fallen off a cliff. Here is a graph of bank teller employment since 2000:

[Graph: bank teller employment since 2000]

So what happened to bank tellers? Autor, Bessen, Vance, and the like are right to point out that ATMs did not reduce bank teller employment. But they miss the second half of the story, which is that another technology did. And that technology was the iPhone. The huge decline in bank teller employment that we’ve seen over the last 15-odd years is mainly a story about iPhones and what they made possible.

But why? Why did the ATM, literally called the automated teller machine, not automate the teller, while an entirely orthogonal technology—the iPhone—actually did?

The answer, I think, is complementarity.

In my last piece, on why I don’t think imminent mass job loss from AI is likely, I talked a lot about complementarity. The core point I made was that labor substitution is about comparative advantage, not absolute advantage: the relevant question for labor impacts is not whether AI can do the tasks that humans can do, but rather whether the aggregate output of humans working with AI is inferior to what AI can produce alone. And I suggested that given the vast number of frictions and bottlenecks that exist in any human domain (domains that are, after all, defined around human labor in all its warts and eccentricities, with workflows designed with humans in mind), we should expect to see a serious gap between the incredible power of the technology and its impacts on economic life.

That gap will probably close faster than previous gaps did: AI is not “like” electricity or the steam engine; an AI system is literally a machine that can think and do things itself. But the gap exists, and will exist even as the technology continues to amaze us with what it can now accomplish.

But by talking about why ATMs didn’t displace bank tellers but iPhones did, I want to highlight an important corollary, which is that the true force of a technology is felt not in the substitution of tasks but in the invention of new paradigms. This is the famous lesson of electricity and productivity growth, which I’ll return to in a future piece. When a technology automates some of what a human does within an existing paradigm, even the vast majority of what a human does within it, it’s quite rare for it to actually get rid of the human, because the definition of the paradigm around human-shaped roles creates all sorts of bottlenecks and frictions that demand human involvement. It’s only when we see the construction of entirely new paradigms that the full power of a technology can be realized. The ATM substituted tasks; but the iPhone made them irrelevant.

Let’s start with the actual story of how the ATM affected bank tellers.

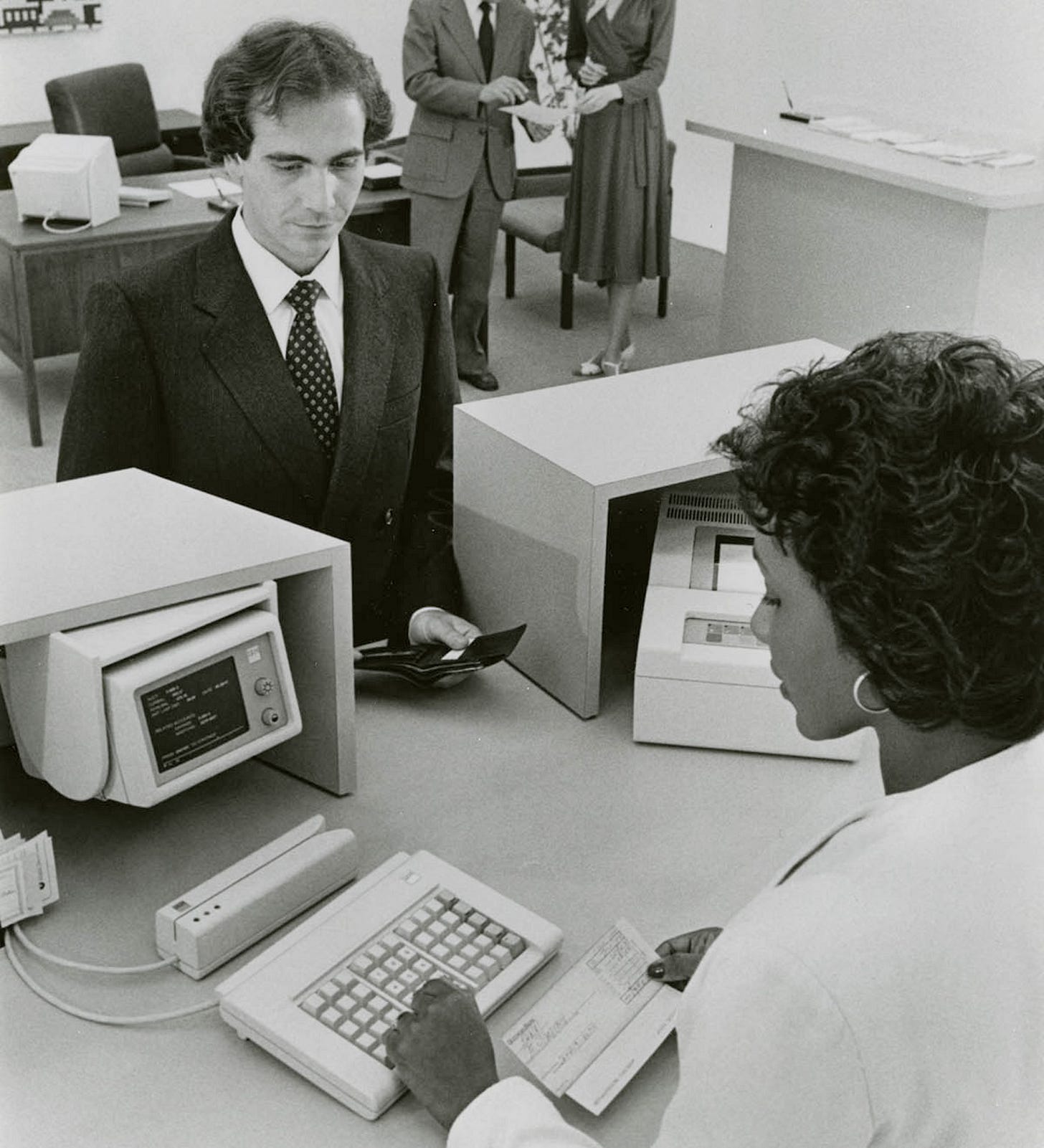

In the 1940s or ’50s, if you owned a bank, you needed physical locations (these were your “branches”), and you needed people to staff those branches. You’d have your bank managers, your loan officers, and you’d have your bank tellers. When a customer wanted to deposit a check or check their balance or make a withdrawal, they’d talk to one of the tellers; and because this was the highest-volume type of interaction that people would have with your bank, you’d have to hire tellers in huge numbers.

The bank teller thus became a classic “mid-skill” occupation. It required a high school diploma and about a month of on-the-job training around counting cash and processing checks and settling accounts at the end of each day, but it didn’t require a college degree. And because they handled such a core part of the banking workflow, banks required a huge number of tellers: the average bank branch in an urban area might employ about two dozen people as tellers.

But in the 1950s and ’60s, as Western economies were booming and enjoying their magnificent postwar economic expansions, labor was getting much more expensive. This was a good thing (it was simply the other side of rising wages), but it was also painful for enterprises that relied on lots of manual labor. And so we find that all the fashionable business concepts of the 1950s and ’60s revolved around reducing labor costs to the maximum extent possible. It’s no coincidence that it was in the 1950s that the word “automation” came into common use.

It used to be, for instance, that when you went shopping you’d have your stuff retrieved for you by a small army of clerks running around the shop; indeed that’s still how it’s done in places like India with an abundance of cheap labor. But humans were getting expensive in the 1950s and ’60s, so everyone wanted to reduce the human component, and so in that period you saw the rise of supermarkets and discount stores, where the whole innovation is getting the stuff yourself. (Sam Walton’s Made in America is a good record of what that revolution was like from the inside; consumers tended to be quite happy with the whole thing, since corporate savings could be passed on in the form of cheaper goods.) And it’s the same reason why in the ’50s and ’60s you saw the rise of laundromats, vending machines, self-service gas stations, and “fast food” restaurants like McDonald’s.

So in the 1950s and ’60s, the goal of every single business that employed humans was to find ways to replace humans with machines: in economic terms, to substitute capital for labor. And even though they were a relatively labor-light business to start with, this was true of banks as well. This was the case in the United States, but it was especially true in Europe, where labor unrest among bank employees was an ongoing headache. (Financial sector employees were actually some of the most militant of all white-collar workers during this period: because of prolonged strikes by bank employees, Irish banks were closed 10 percent of the time between 1966 and 1976.)

Enter the computer. In the 1960s, to the great relief of bank management teams, it became possible to imagine that computers could be used to reduce the role of human labor in the banking process.

There were two key innovations that made this possible. The first was IBM’s invention of the magnetic stripe card in the 1960s: this was a thin strip of magnetized tape, bonded to a plastic card, that could encode and store data like account numbers, and which could be read by a machine when swiped through a card reader. And the second was Digital Equipment Corporation’s pioneering minicomputer, which dramatically reduced the price and size of general-purpose computing.

And so, bringing those two innovations together, you could finally imagine a machine that could do, programmatically, what a human teller might do: that could identify a customer automatically, via the magnetic stripe; that could communicate with the central servers of a bank to verify the customer’s account balance; and that could dispense cash or accept deposits accordingly.

And so in the 1960s, teams working concurrently in Sweden and the United Kingdom pioneered the earliest versions of what would eventually become known as the automated teller machine. These were primitive devices—they had the tendency to “eat” payment cards and to dispense incorrect amounts of money, and they didn’t see much uptake—but by the late 1960s it was clear where things were going. IBM, at that point the largest technology company in the world, soon took interest in the technology, and for the next few years groups of IBM engineers refined the technological and infrastructural layer to make the ATM functional.

And by the mid-1970s, after years of technical investment, the ATM was finally ready for prime time. By that point IBM, then enjoying its peak of influence, had decided the market wasn’t worth the investment, and so it ceded the nascent ATM industry to a company called Diebold.[1]

And in 1977 the ATM finally got its big break. Citibank, then the second-largest deposit bank in the United States, decided to make ATMs the subject of a large push: it spent heavily installing the machines across its branches. The New York Times reported it as “a $50 million gamble that the consumer can be wooed and won with electronic services.” But the response was tepid. In the same New York Times article, we encounter a scene from a bank branch in Queens where one of Citibank’s ATMs was installed: “most of the customers,” the article reports, “preferred to wait in line a few moments and deal with the teller rather than test the new machines.”

But Citibank’s gamble paid off. Consumer wariness toward ATMs turned out to be temporary: the advantages of the ATM over the human teller were obvious. Running an ATM was cheaper than paying a human—each ATM transaction cost the bank just 27 cents, compared to $1.07 for a human teller—and this could either be passed to the consumer in the form of lower fees or simply kept as profit. And ATMs were also just more convenient. An ATM could do in 30 seconds what would take a human teller at least a few minutes; and while a human teller was only available during business hours, ATMs could be used at any time of day.

And the benefits for the bank were even greater. ATMs were expensive to install, but once they were installed they were wonderfully lucrative and cheap to maintain. The fee opportunities were especially attractive, since banks could charge fees on out-of-network transactions. And since ATMs were not legally considered to be branches, banks could deploy ATMs without running afoul of banking laws that restricted interstate bank branching.

All of this meant that banks had a really strong incentive to put ATMs everywhere. And so they did. In 1975 there were about 31 ATMs per one million Americans; by the year 2000, that number had grown to 1,135, a 37-fold increase in just 25 years.

And what did this do to the bank tellers?

The natural expectation is that ATMs would make human bank tellers obsolete, or at least strongly reduce demand for bank teller jobs. And indeed the number of bank tellers per branch declined significantly: from 21 tellers per branch to about 13 per branch once ATMs had hit saturation. But this decline in teller intensity corresponded with an increase in aggregate teller employment. The number of ATMs per capita grew dramatically after 1975; but the number of bank tellers increased along with it. Bank tellers did become a smaller share of total employment, since the increase in bank teller employment was smaller than the increase in other occupations; but at no point in the period between 1970 and 2010 did the number of bank tellers actually enter a prolonged decline.

Why is that? Why did ATMs, which automated the bulk of the teller’s job, not lead to a decrease in teller employment?

We find the most elegant explanation in a paper from David Autor:

First, by reducing the cost of operating a bank branch, ATMs indirectly increased the demand for tellers: the number of tellers per branch fell by more than a third between 1988 and 2004, but the number of urban bank branches (also encouraged by a wave of bank deregulation allowing more branches) rose by more than 40 percent. Second, as the routine cash-handling tasks of bank tellers receded, information technology also enabled a broader range of bank personnel to become involved in “relationship banking.” Increasingly, banks recognized the value of tellers enabled by information technology, not primarily as checkout clerks, but as salespersons, forging relationships with customers and introducing them to additional bank services like credit cards, loans, and investment products.

We thus have a classic case of the Jevons effect. Teller labor was an input into an output that we can call “financial services.” ATMs allowed us to produce that output more efficiently and economize on the use of the labor input. But demand for the output was sufficiently elastic that cheaper production brought disproportionately more demand: so much more that there was actually greater demand for the labor input as well. And (this part is not quite the classic Jevons effect) the greater demand showed banks that certain functions previously considered incidental to the teller job, like “relationship banking,” were actually quite useful. And so ATMs were a truly complementary technology for the bank teller.
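The Jevons logic can be made concrete with a toy model. This is a minimal sketch resting on simplifying assumptions that are not drawn from the banking data: labor is the only cost, the price of “financial services” equals its unit labor cost, and demand has a constant price elasticity ε. The function names (`labor_demand`, `automation_effect`) and the default `wage` and `scale` values are hypothetical, chosen for illustration. Under those assumptions, if a is the labor needed per unit of output, total labor demand is proportional to a^(1 − ε), so making each unit cheaper to produce raises total labor demand only when ε > 1.

```python
"""Toy constant-elasticity model of the Jevons effect (illustrative assumptions only)."""


def labor_demand(labor_per_unit: float, elasticity: float,
                 wage: float = 1.0, scale: float = 100.0) -> float:
    """Total labor demanded when each unit of output needs `labor_per_unit` of labor.

    Hypothetical assumptions, not from the source:
      * labor is the only input, so price = wage * labor_per_unit;
      * demand for output is Q = scale * price ** (-elasticity).
    Total labor is then L = labor_per_unit * Q, which is proportional to
    labor_per_unit ** (1 - elasticity).
    """
    price = wage * labor_per_unit
    quantity = scale * price ** (-elasticity)
    return labor_per_unit * quantity


def automation_effect(elasticity: float, productivity_gain: float = 2.0) -> float:
    """Ratio of labor demand after vs. before labor per unit falls by `productivity_gain`x."""
    before = labor_demand(1.0, elasticity)
    after = labor_demand(1.0 / productivity_gain, elasticity)
    return after / before


if __name__ == "__main__":
    for eps in (0.5, 1.0, 1.5):
        ratio = automation_effect(eps)
        print(f"elasticity={eps}: labor demand x{ratio:.3f}")
    # Prints:
    # elasticity=0.5: labor demand x0.707
    # elasticity=1.0: labor demand x1.000
    # elasticity=1.5: labor demand x1.414
```

With inelastic demand (ε = 0.5), halving the labor needed per unit shrinks total labor demand; with elastic demand (ε = 1.5), it grows it, which is the pattern the ATM story describes. The model deliberately ignores everything else that moved branch counts in this period, such as the deregulation Autor mentions.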

By the 2010s, people had begun to notice that there had been no mass unemployment of bank tellers. In 2015, James Bessen published a book called Learning by Doing, using the non-automation of bank tellers as a central example; soon it became a sort of load-bearing parable about what Matt Yglesias called “the myth of technological unemployment.” From Bessen the story diffused to Autor and Acemoglu; then to the economics bloggers; then to people like Eric Schmidt, who cited the ATM story in 2017 as one reason why he was a “denier” on the question of technological job loss. And they were right: ATMs really didn’t reduce bank teller employment.

But there was an ironic element to all of this: at the exact moment that people started talking about how technology had not displaced bank tellers, it stopped being true.

In the 2010s, bank teller employment entered a period of prolonged decline. This was not a product of the financial crisis that peaked in 2008: bank teller employment was roughly the same in 2010 as it had been in 2007. Nor was it a one-time shock: the decline played out over more than a decade and continued even as banks returned to full health after the Great Recession. First there was a steep decline that started after 2010; then a slight recovery at the end of the decade; and then a collapse during the COVID years from which bank teller employment has never recovered. In 2010, there were 332,000 full-time bank tellers in the United States; by 2016, there were 235,000; by 2022, there were just 164,000.

This was not a long-delayed ATM shock: the ATM had reached full saturation long before. It was, rather, the effect of another technology, one that had nothing to do with banking. It was a product of the iPhone.

Apple first introduced the iPhone in 2007. By 2010, it was clear that the iPhone-style smartphone, with a touchscreen and an app store, was going to be the defining technological paradigm of the years to come: people were going to conduct huge portions of their lives through the prism of the smartphone, which soon became simply “the phone.” And just as more forward-thinking institutions like Citibank knew in the 1970s that ATMs were the future, the smarter banks knew by the early 2010s that the future lay in what they called mobile banking.

The mobile banking vision was simple: the banking customers of the future would do all their banking via their banks’ mobile apps. They would buy things via payment cards or, later, via Apple Pay; they would check their balance or make deposits through the banking app; the customer’s relationship with the bank would be mediated entirely via the app. In this new world, there was no reason for the physical bank location to exist. Indeed there were new entrants, like Revolut or Klarna, that existed entirely as mobile apps. The branch was a thing of the past.

Mobile banking succeeded much more rapidly than the ATM did—which is remarkable, considering that mobile banking was a much bigger change than the ATM. I remember, as a kid, opening my first bank account at the Chase branch in my hometown, and the excitement of occasionally visiting there to deposit any checks I might have. I’m still a Chase customer, and I interact frequently with my Chase account for all sorts of reasons. But it’s been many years since I visited a physical Chase location. My relationship with Chase has transcended any need for the branch. I don’t think I’m alone in this: the Chase branch in my hometown, where I would once deposit checks, closed in 2023. The building now houses a doctor’s office.

And so the rise of mobile banking removed any real reason to have bank branches. Visits to bank branches declined dramatically throughout the 2010s, and banks aggressively redesigned the banking experience around the digital interface. The number of commercial bank branches per capita peaked in 2009 and has fallen by nearly 30 percent since, with most of the decline occurring in wealthier areas that were more likely to adopt digital banking first. Between 2008 and 2025, Bank of America, which at some point surpassed Citibank as the second-largest deposit bank in the United States after Chase, closed about 40 percent of its branches. Online banking had been around since the 1990s, Bank of America’s CEO said, but the iPhone was a “game changer” that “effectively allowed customers to carry a bank branch in their pockets.”

And as the branch disappeared, so did the teller. The ATM had been an innovation within the existing world of physical banking, and so its replacement of the bank teller could only ever be partial; as long as people were still visiting the bank branch, it was useful to repurpose tellers as “relationship bankers.” But when branch visits declined, that stopped making sense. The iPhone represented a wholly different way of banking, and within it there was no real need for the bank teller: and so a large institution like Bank of America was able to reduce its headcount from 288,000 in 2010 to 204,000 in 2018.

Of course, the transition to mobile banking also created jobs: banks now needed software developers to build and maintain the digital interface, and they needed customer service representatives to handle any problems that might emerge. And so a “mid-skill” job was replaced by a thin stratum of “high-skill” jobs and a vast army of “low-skill” ones. The term for this in labor economics is “job polarization.”

So that’s the irony of the parable of the bank teller. Technology did kill the bank teller job. It wasn’t the ATM that did it, but the iPhone.

I think the story of the ATM and the iPhone offers us an important lesson about technology and its impacts on labor markets. Because Vance, of course, wasn’t really talking about ATMs when he talked about ATMs; he was talking about AI.

The lesson is worth stating plainly. The ATM tried to do the teller’s job better, faster, cheaper; it tried to fit capital into a labor-shaped hole; but the iPhone made the teller’s job irrelevant. One automated tasks within an existing paradigm, and the other created a new paradigm in which those tasks simply didn’t need to exist at all. And it is paradigm replacement, not task automation, that actually displaces workers—and, conversely, unlocks the latent productivity within any technology. That’s because as long as the old paradigm persists, there will be labor-shaped holes in which capital substitution will encounter constant frictions and bottlenecks.

This has, I think, serious implications for how we’re thinking about AI.

People in AI frequently talk about the vision of AI being a “drop-in remote worker”: AI systems that can be inserted into a workflow, learn it, and eventually do it on the level of a competent human. And they see that as the point where you’ll start to see serious productivity gains and labor displacement.

I am not a “denier” on the question of technological job loss; Vance’s blithe optimism is not mine. But I’m skeptical that simply slotting AI into human-shaped jobs will have the results people seem to expect. The history of technology, even exceptionally powerful general-purpose technology, tells us that as long as you are trying to fit capital into labor-shaped holes you will find yourself confronted by endless frictions: just as with electricity, the productivity inherent in any technology is unleashed only when you figure out how to organize work around it, rather than slotting it into what already exists. We are still very much in the regime of slotting it in. And as long as we are in that regime, I expect disappointing productivity gains and relatively little real displacement.

The real productivity gains from AI, and the real threat of labor displacement, will come not from the “drop-in remote worker,” but from something like Dwarkesh Patel’s vision of the fully automated firm. At some point in the life of every technology, old workflows are replaced by new ones, and we discover the paradigms in which the full productive force of a technology can best be expressed. In the past this has simply been a function of managerial turnover or depreciation cycles. But with AI it will likely be driven by the sheer power of the technology itself, which really is wholly unlike anything that has come before and, unlike electricity or the steam engine, will eventually be able to build by itself the structures that harness its powers.

I don’t think we’ve really yet learned what those new structures will look like. But, at the limit, I don’t quite see why humans would have to be involved in them, though I suspect that by the time we’re dealing with the fully automated organizations of the future, our current set of concerns will have been largely outmoded by new and quite foreign ones, as has always been the case with human progress.

But, however optimistic I might be about the human future, I don’t think it’s worth leaning on the history of past technologies for comfort. The ATM parable is a comforting story; and in times of uncertainty and fear we search naturally for solace and comfort wherever it may come. But even when it comes to bank tellers, it’s only the first half of the story.

[1] Diebold’s history is a fascinating case study in the rise and fall of American industry: from its humble origins in 1859 as a manufacturer of safes and bank vaults, to its enormous success in the second half of the twentieth century as the world’s leading manufacturer of ATMs, to a period of debt-fueled hubris in the 1990s and 2000s that culminated in its filing for bankruptcy in 2023. You may remember the name Diebold from the controversies around the 2004 election, after the company had diversified into the field of voting machines: there was a popular conspiracy theory that claimed that rigged Diebold voting machines had stolen the state of Ohio for George W. Bush. We find in the course of its ill-starred life every doleful corporate trend of the twentieth century.