Modern kernel anti-cheat systems are, without exaggeration, among the most sophisticated pieces of software running on consumer Windows machines. They operate at the highest privilege level available to software, they intercept kernel callbacks that were designed for legitimate security products, they scan memory structures that most programmers never touch in their entire careers, and they do all of this transparently while a game is running. If you have ever wondered how BattlEye actually catches a cheat, or why Vanguard insists on loading at boot rather than when the game starts, or what it means for a PCIe DMA device to bypass every single one of these protections, this post is for you.

This is not a comprehensive or authoritative reference. It is just me documenting what I found and trying to explain it clearly. Some of it comes from public research and papers I have linked at the bottom, some from reading kernel source and reversing drivers myself. If something is wrong, feel free to reach out. The post assumes some familiarity with Windows internals and low-level programming, but I have tried to explain each concept before using it.

1. Introduction

Why Usermode Protections Are Not Enough

The fundamental problem with usermode-only anti-cheat is the trust model. A usermode process runs at ring 3, subject to the full authority of the kernel. Any protection implemented entirely in usermode can be bypassed by anything running at a higher privilege level, and in Windows that means ring 0 (kernel drivers) or below (hypervisors, firmware). A usermode anti-cheat that calls ReadProcessMemory to check game memory integrity can be defeated by a kernel driver that hooks NtReadVirtualMemory and returns falsified data. A usermode anti-cheat that enumerates loaded modules via EnumProcessModules can be defeated by a driver that patches the PEB module list. The usermode process is completely blind to what happens at these more privileged levels.

Cheat developers understood this years before most anti-cheat engineers were willing to act on it. The kernel was, for a long time, the exclusive domain of cheats. Kernel-mode cheats could directly manipulate game memory without going through any API that a usermode anti-cheat could intercept. They could hide their presence from usermode enumeration APIs trivially. They could intercept and forge the results of any check a usermode anti-cheat might perform.

The response was inevitable: move the anti-cheat into the kernel.

The Arms Race

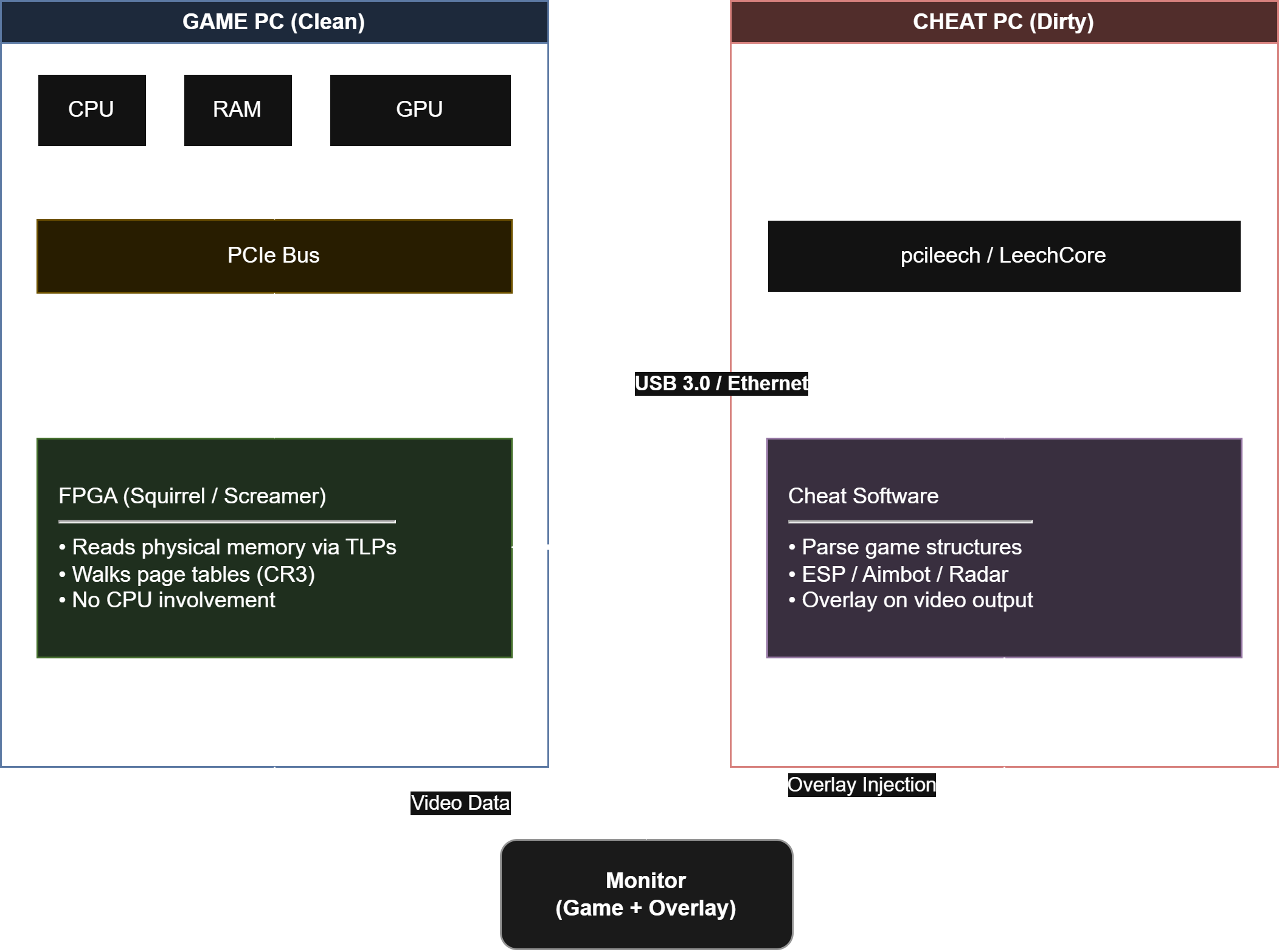

The escalation has been relentless. Usermode cheats gave way to kernel cheats. Kernel anti-cheats appeared in response. Cheat developers began exploiting legitimate, signed drivers with vulnerabilities to achieve kernel execution without loading an unsigned driver (the BYOVD attack). Anti-cheats responded with blocklists and stricter driver enumeration. Cheat developers moved to hypervisors, running below the kernel and virtualizing the entire OS. Anti-cheats added hypervisor detection. Cheat developers began using PCIe DMA devices to read game memory directly through hardware without ever touching the OS at all. The response to that is still being developed.

Each escalation requires the attacking side to invest more capital and expertise, which has an important effect: it filters out casual cheaters. A $30 kernel cheat subscription is accessible to many people. A custom FPGA DMA setup costs hundreds of dollars and requires significant technical knowledge to configure. The arms race, while frustrating for anti-cheat engineers, does serve the practical goal of making cheating expensive and difficult enough that most cheaters do not bother.

Major Anti-Cheat Systems

Four systems dominate the competitive gaming landscape:

BattlEye is used by PUBG, Rainbow Six Siege, DayZ, Arma, and dozens of other titles. Its kernel component is BEDaisy.sys, and it has been the subject of detailed public reverse engineering work, most notably by the secret.club researchers and the back.engineering blog.

EasyAntiCheat (EAC) is now owned by Epic Games and used in Fortnite, Apex Legends, Rust, and many others. Its architecture is broadly similar to BattlEye in its three-component design but differs significantly in implementation details.

Vanguard is Riot Games’ proprietary anti-cheat used in Valorant and League of Legends. It is notable for loading its kernel component (vgk.sys) at system boot rather than at game launch, and for its aggressive stance on driver allowlisting.

FACEIT AC is used for the FACEIT competitive platform for Counter-Strike. It is a kernel-level system with a well-regarded reputation in the competitive community for effective cheat detection, and has been the subject of academic analysis examining the architectural properties of kernel anti-cheat software more broadly.

The ARES 2024 Paper on Kernel Anti-Cheat Architecture

The 2024 paper “If It Looks Like a Rootkit and Deceives Like a Rootkit” (presented at ARES 2024) analyzed FACEIT AC and Vanguard through the lens of rootkit taxonomy, noting that both systems share technical characteristics with that class of software: kernel-level operation, system-wide callback registration, and broad visibility into OS activity. The authors are careful to distinguish between technical classification and intent, explicitly acknowledging that these systems are legitimate software serving a defensive purpose. The paper’s contribution is primarily taxonomic rather than accusatory.

The underlying observation is simply that effective kernel anti-cheat requires the same OS primitives that malicious kernel software uses, because those primitives are what provide the visibility needed to detect cheats. Any sufficiently capable kernel anti-cheat will look like a rootkit under static behavioral analysis, because capability and intent are orthogonal at the kernel API level. This is a constraint imposed by Windows architecture, not a design choice unique to any particular anti-cheat vendor.

2. Architecture of a Kernel Anti-Cheat

The Three-Component Model

Modern kernel anti-cheats generally follow a three-layer architecture:

Kernel driver: Runs at ring 0. Registers callbacks, intercepts system calls, scans memory, enforces protections. This is the component that actually has the power to do anything meaningful.

Usermode service: Runs as a Windows service, typically with SYSTEM privileges. Communicates with the kernel driver via IOCTLs. Handles network communication with backend servers, manages ban enforcement, collects and transmits telemetry.

Game-injected DLL: Injected into (or loaded by) the game process. Performs usermode-side checks, communicates with the service, and serves as the endpoint for protections applied to the game process specifically.

The separation of concerns here is both architectural and security-motivated. The kernel driver can do things no usermode component can, but it cannot easily make network connections or implement complex application logic. The service can do those things but cannot directly intercept system calls. The in-game DLL has direct access to game state but runs in an untrustworthy ring-3 context.

Communication Channels

IOCTLs (I/O Control Codes) are the primary communication mechanism between usermode and a kernel driver. A usermode process opens a handle to the driver’s device object and calls DeviceIoControl with a control code. The driver handles this in its IRP_MJ_DEVICE_CONTROL dispatch routine. The entire communication is mediated by the kernel, which means a compromised usermode component cannot forge arbitrary kernel operations - it can only make requests that the driver is programmed to service.
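To make the "only requests the driver is programmed to service" point concrete, here is a minimal sketch in portable user-mode C. It reproduces the bit layout of the Windows CTL_CODE macro and models a dispatch routine that rejects anything it does not recognize. The request names, function numbers, status values, and dispatch_ioctl itself are hypothetical and not taken from any real anti-cheat; a real driver would do the equivalent inside its IRP_MJ_DEVICE_CONTROL handler.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Same bit layout as CTL_CODE in the Windows headers:
   device type (bits 16-31), access (14-15), function (2-13), method (0-1). */
#define SKETCH_CTL_CODE(dev, fn, method, access)              \
    (((uint32_t)(dev) << 16) | ((uint32_t)(access) << 14) |   \
     ((uint32_t)(fn) << 2) | (uint32_t)(method))

#define SKETCH_FILE_DEVICE_UNKNOWN 0x22u
#define SKETCH_METHOD_BUFFERED     0u
#define SKETCH_FILE_ANY_ACCESS     0u

/* Hypothetical request codes for an imaginary anti-cheat driver. */
#define IOCTL_AC_GET_VERSION                                   \
    SKETCH_CTL_CODE(SKETCH_FILE_DEVICE_UNKNOWN, 0x800,         \
                    SKETCH_METHOD_BUFFERED, SKETCH_FILE_ANY_ACCESS)
#define IOCTL_AC_SET_PROTECTED_PID                             \
    SKETCH_CTL_CODE(SKETCH_FILE_DEVICE_UNKNOWN, 0x801,         \
                    SKETCH_METHOD_BUFFERED, SKETCH_FILE_ANY_ACCESS)

/* Hypothetical status values standing in for NTSTATUS codes. */
enum { AC_OK = 0, AC_INVALID_REQUEST = -1, AC_BUFFER_TOO_SMALL = -2 };

uint32_t g_protected_pid; /* state owned by the "driver" side */

/* Models the device-control dispatch routine: every request is validated,
   and unknown control codes are refused rather than interpreted. */
int dispatch_ioctl(uint32_t code, const void *in, size_t in_len,
                   void *out, size_t out_len, size_t *written)
{
    *written = 0;
    switch (code) {
    case IOCTL_AC_GET_VERSION: {
        uint32_t version = 1;
        if (out == NULL || out_len < sizeof version)
            return AC_BUFFER_TOO_SMALL;
        memcpy(out, &version, sizeof version);
        *written = sizeof version;
        return AC_OK;
    }
    case IOCTL_AC_SET_PROTECTED_PID:
        if (in == NULL || in_len < sizeof g_protected_pid)
            return AC_BUFFER_TOO_SMALL;
        memcpy(&g_protected_pid, in, sizeof g_protected_pid);
        return AC_OK;
    default:
        return AC_INVALID_REQUEST;
    }
}
```

The default branch is the important part: the usermode side can only pick from the menu the driver exposes, so the attack surface is the set of handled codes and how carefully each one checks its buffers.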

Named pipes are used for IPC between the service and the game-injected DLL. A named pipe is faster and simpler than routing everything through the kernel, and it allows the service to push notifications to the game component without polling.

Shared memory sections created with NtCreateSection and mapped into both the service process and the game process via NtMapViewOfSection allow high-bandwidth, low-latency data sharing. Telemetry data (input events, timing data) can be written to a shared ring buffer by the game DLL and read by the service without the overhead of IPC per event.
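The ring buffer idea can be sketched as a single-producer, single-consumer queue. This is an illustration in portable C11, not any vendor's layout: the telemetry_event fields, the capacity, and the function names are all assumptions. In a real deployment the telemetry_ring structure would live inside the mapped section view, with the game DLL calling ring_push and the service calling ring_pop.

```c
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

#define RING_CAPACITY 8u /* must be a power of two for the index mask */

/* Hypothetical event record. */
typedef struct {
    uint64_t timestamp;
    uint32_t kind;
    uint32_t value;
} telemetry_event;

typedef struct {
    _Atomic uint32_t head; /* next slot to write, advanced only by the producer */
    _Atomic uint32_t tail; /* next slot to read, advanced only by the consumer */
    telemetry_event slots[RING_CAPACITY];
} telemetry_ring;

/* Producer side. Returns false if the ring is full. */
bool ring_push(telemetry_ring *r, const telemetry_event *ev)
{
    uint32_t head = atomic_load_explicit(&r->head, memory_order_relaxed);
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_acquire);
    if (head - tail == RING_CAPACITY)
        return false;
    r->slots[head & (RING_CAPACITY - 1)] = *ev;
    /* Release: the slot contents become visible before the new head does. */
    atomic_store_explicit(&r->head, head + 1, memory_order_release);
    return true;
}

/* Consumer side. Returns false if the ring is empty. */
bool ring_pop(telemetry_ring *r, telemetry_event *out)
{
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_relaxed);
    uint32_t head = atomic_load_explicit(&r->head, memory_order_acquire);
    if (head == tail)
        return false;
    *out = r->slots[tail & (RING_CAPACITY - 1)];
    atomic_store_explicit(&r->tail, tail + 1, memory_order_release);
    return true;
}
```

Neither side takes a lock or makes a system call per event, which is where the bandwidth advantage over per-event IPC comes from. Since the producer runs inside the untrusted game process, a real consumer would also have to treat the indices and the event contents as hostile input.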

Boot-time vs Runtime Driver Loading

The distinction between boot-time and runtime driver loading is more significant than it might appear.

BattlEye and EAC load their kernel drivers when the game is launched. BEDaisy.sys and its EAC equivalent are registered as demand-start drivers and loaded via ZwLoadDriver from the service when the game starts. They are unloaded when the game exits.

Vanguard loads vgk.sys at system boot. The driver is configured as a boot-start driver (SERVICE_BOOT_START in the registry), meaning the Windows kernel loads it before most of the system has initialized. This gives Vanguard a critical advantage: it can observe every driver that loads after it. Any driver that loads after vgk.sys can be inspected before its code runs in a meaningful way. A cheat driver that loads at the normal driver initialization phase is loading into a system that Vanguard already has eyes on.
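The ordering argument can be expressed in a few lines of C. The enum values below are the documented values of the Start entry under a driver's Services registry key. The driver_entry type, load_phase, and guaranteed_to_load_first are hypothetical helpers, and the model is deliberately simplified: it ignores load-order groups and tags, so it makes no claim about two drivers in the same phase.

```c
#include <stdbool.h>

/* Values of the "Start" registry entry for a driver or service. */
enum start_type {
    START_BOOT     = 0, /* SERVICE_BOOT_START: loaded by the boot loader */
    START_SYSTEM   = 1, /* SERVICE_SYSTEM_START: loaded during kernel init */
    START_AUTO     = 2, /* SERVICE_AUTO_START: started by the service manager */
    START_DEMAND   = 3, /* SERVICE_DEMAND_START: started on request */
    START_DISABLED = 4  /* SERVICE_DISABLED: never started */
};

/* Hypothetical record describing one installed driver. */
typedef struct {
    const char *name;
    enum start_type start;
} driver_entry;

/* Collapse start types into coarse phases. Auto and demand start both
   happen after the kernel is up, so they share a phase here. */
int load_phase(enum start_type s)
{
    switch (s) {
    case START_BOOT:   return 0;
    case START_SYSTEM: return 1;
    case START_AUTO:
    case START_DEMAND: return 2;
    default:           return -1; /* disabled: never loads */
    }
}

/* True only when 'a' is certain to be loaded before 'b' in this model. */
bool guaranteed_to_load_first(const driver_entry *a, const driver_entry *b)
{
    int pa = load_phase(a->start);
    int pb = load_phase(b->start);
    return pa >= 0 && pb >= 0 && pa < pb;
}
```

With a boot-start entry for the anti-cheat driver and a demand-start entry for a cheat driver, guaranteed_to_load_first returns true in one direction only, which is the asymmetry described above.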

The practical implication of boot-time loading is also why Vanguard requires a system reboot to enable: the driver must be in place before the rest of the system initializes, which means it cannot be loaded after the fact without a restart.

Driver Signing Requirements

Windows enforces Driver Signature Enforcement (DSE) on 64-bit systems, which requires that kernel drivers be signed with a certificate that chains to a trusted root and that the driver’s code integrity be verified at load time. This is implemented through CiValidateImageHeader and related functions in ci.dll. The kernel also enforces that driver certificates meet certain Extended Key Usage (EKU) requirements.

Anti-cheats handle signing in the obvious way: they pay for extended validation (EV) code signing certificates, go through Microsoft’s WHQL process for some components, or use cross-signing. The certificate requirements have tightened significantly over the years; Microsoft now requires EV certificates for new kernel drivers, and the kernel driver signing portal requires WHQL submission for drivers targeting Windows 10 and later in many cases.

The reason this matters for cheats is that DSE is a significant barrier. Without a signed driver or a way to bypass DSE, a cheat author cannot load arbitrary kernel code. BYOVD attacks (covered in section 7) are the primary mechanism for bypassing this restriction.

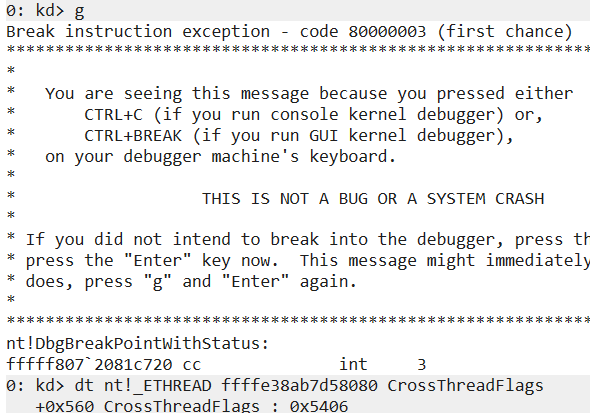

BattlEye Component Breakdown

BattlEye’s architecture is well-documented through reverse engineering:

BEDaisy.sys is the kernel driver. It registers callbacks for process creation, thread creation, image loading, and object handle operations. It implements the actual scanning and protection logic.

BEService.exe (or BEService_x64.exe) is the usermode service. It communicates with BEDaisy.sys via a device object that the driver exposes. It handles network communication with BattlEye’s backend servers, receives detection results from the driver, and is responsible for ban enforcement (kicking the player from the game server).

BEClient_x64.dll is injected into the game process. BattlEye does not inject this via CreateRemoteThread in the traditional sense - it is loaded as part of game initialization, with the game’s cooperation. This DLL is responsible for performing usermode-side checks within the game process context: it verifies its own integrity, performs various environment checks, and serves as the target for protections that the kernel driver applies specifically to the game process.

The communication flow goes: BEDaisy.sys detects something suspicious, signals BEService.exe via an IOCTL completion or a shared memory notification, BEService.exe reports to BattlEye’s servers, the server decides on an action (kick/ban), and BEService.exe instructs the game to terminate the connection.

BattlEye’s three-component architecture: BEDaisy.sys at ring 0 communicates upward via IOCTLs to BEService.exe running as a SYSTEM service, which manages BEClient_x64.dll injected in the game process.

Vanguard’s Architecture

vgk.sys is notably more aggressive than the BattlEye driver in its scope. Because it loads at boot, it can intercept the driver load process itself. Vanguard maintains an internal allowlist of drivers that are permitted to co-exist with a protected game. Any driver not on this list, or any driver that fails integrity checks, can result in Vanguard refusing to allow the game to launch. This is an allowlist model rather than a blocklist model, which is architecturally much stronger.
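The difference between the two models comes down to what happens to a driver nobody has seen before. The sketch below is a toy illustration in C; the digest strings and function names are made up, and this is not a description of how Vanguard stores or checks its list.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Linear search over a list of driver image digests (hypothetical format). */
bool digest_in_list(const char *digest, const char *const *list, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        if (strcmp(digest, list[i]) == 0)
            return true;
    }
    return false;
}

/* Blocklist model: default allow. Only known-bad drivers are refused. */
bool blocklist_permits(const char *digest,
                       const char *const *blocked, size_t count)
{
    return !digest_in_list(digest, blocked, count);
}

/* Allowlist model: default deny. Only known-good drivers are accepted. */
bool allowlist_permits(const char *digest,
                       const char *const *allowed, size_t count)
{
    return digest_in_list(digest, allowed, count);
}
```

A freshly built cheat driver, or a vulnerable signed driver that has not been catalogued yet, passes blocklist_permits and fails allowlist_permits. The cost of the allowlist is on the other side: every legitimate driver that is missing from the list is refused too.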

vgauth.exe is the Vanguard service, which handles the communication between vgk.sys and Riot’s backend infrastructure.

3. Kernel Callbacks and Monitoring

This is the foundation of everything a kernel anti-cheat does. The Windows kernel exposes a rich set of callback registration APIs intended for security products, and anti-cheats use every one of them.

ObRegisterCallbacks

ObRegisterCallbacks is perhaps the single most important API for process protection. It allows a driver to register a callback that is invoked whenever a handle to a specified object type is opened or duplicated. For anti-cheat purposes, the object types of interest are PsProcessType and PsThreadType.

```c
OB_CALLBACK_REGISTRATION callbackReg = {0};
OB_OPERATION_REGISTRATION opReg[2] = {0};

// Altitude string is required - must be unique per driver
UNICODE_STRING altitude = RTL_CONSTANT_STRING(L"31001");

// Monitor handle opens to process objects
opReg[0].ObjectType = PsProcessType;
opReg[0].Operations = OB_OPERATION_HANDLE_CREATE | OB_OPERATION_HANDLE_DUPLICATE;
opReg[0].PreOperation = ObPreOperationCallback;
opReg[0].PostOperation = ObPostOperationCallback;

// Monitor handle opens to thread objects
opReg[1].ObjectType = PsThreadType;
opReg[1].Operations = OB_OPERATION_HANDLE_CREATE | OB_OPERATION_HANDLE_DUPLICATE;
opReg[1].PreOperation = ObPreOperationCallback;
opReg[1].PostOperation = NULL;

callbackReg.Version = OB_FLT_REGISTRATION_VERSION;
callbackReg.OperationRegistrationCount = 2;
callbackReg.Altitude = altitude;
callbackReg.RegistrationContext = NULL;
callbackReg.OperationRegistration = opReg;

NTSTATUS status = ObRegisterCallbacks(&callbackReg, &gCallbackHandle);
```

The pre-operation callback receives a POB_PRE_OPERATION_INFORMATION structure. The critical field is Parameters->CreateHandleInformation.DesiredAccess (or Parameters->DuplicateHandleInformation.DesiredAccess when a handle is being duplicated). The callback can strip access rights by clearing bits in this field before the handle is created; the mask the caller originally asked for stays available, read-only, in OriginalDesiredAccess. This is how anti-cheats prevent external processes from opening handles to the game process with PROCESS_VM_READ or PROCESS_VM_WRITE access.

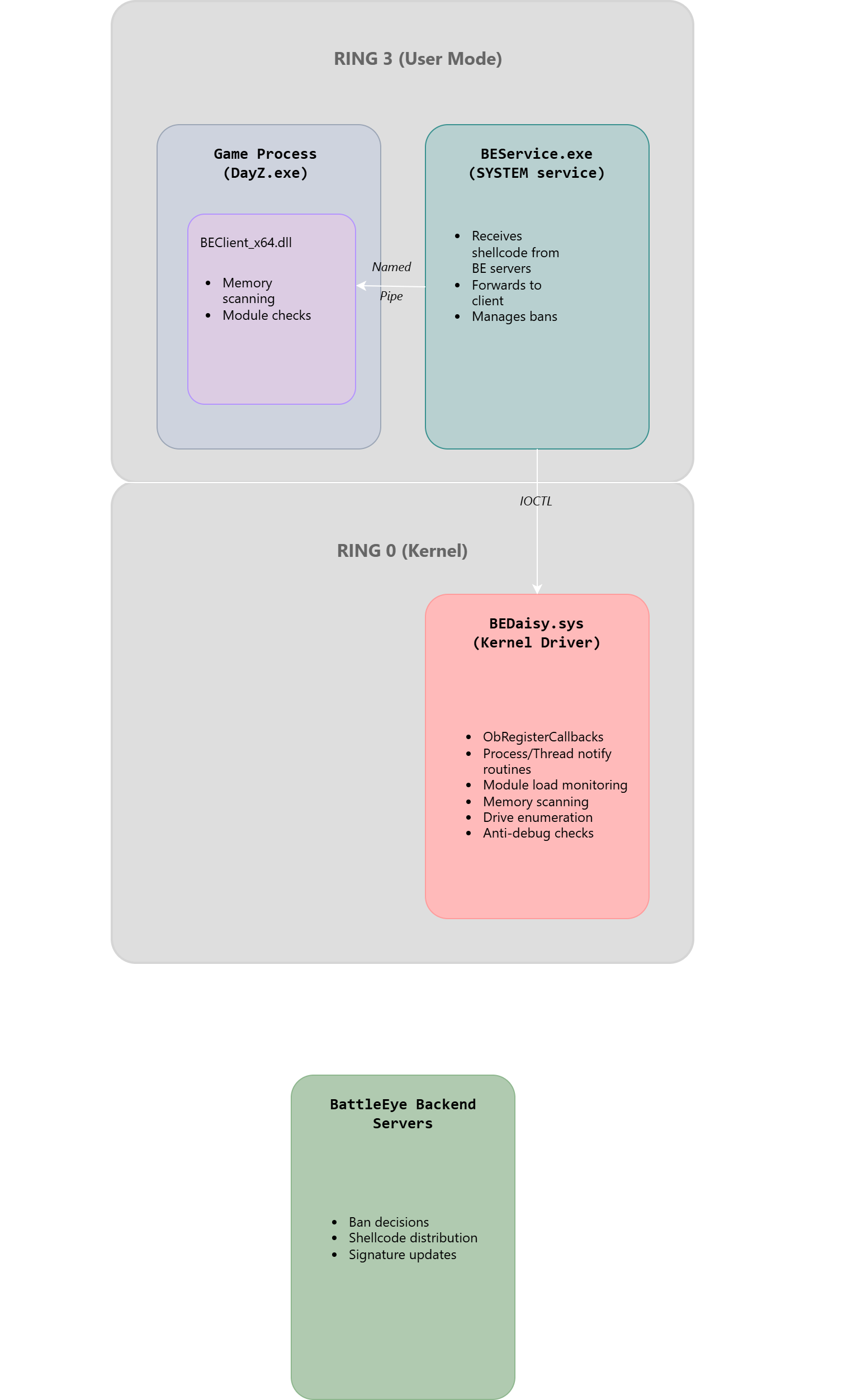

When a cheat calls OpenProcess(PROCESS_VM_READ | PROCESS_VM_WRITE, FALSE, gameProcessId), the anti-cheat’s ObRegisterCallbacks pre-operation callback fires. The callback checks whether the target process is the protected game process. If it is, it strips PROCESS_VM_READ, PROCESS_VM_WRITE, PROCESS_VM_OPERATION, and PROCESS_DUP_HANDLE from the desired access. The cheat receives a handle, but the handle is useless for reading or writing game memory. The cheat’s ReadProcessMemory call will fail with ERROR_ACCESS_DENIED.

The IRQL for ObRegisterCallbacks pre-operation callbacks is PASSIVE_LEVEL, which means the callback can call pageable code and perform blocking operations (within reason).
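The stripping step itself is plain bit masking. The following is a user-mode model in portable C, not driver code: the access-right constants carry their real values from winnt.h, while handle_request, pre_operation, and the single "the game may open itself" exemption are assumptions made for illustration. A real pre-operation callback would read and write the corresponding fields of OB_PRE_OPERATION_INFORMATION instead.

```c
#include <stdbool.h>
#include <stdint.h>

/* Process access rights, with the values defined in winnt.h. */
#define PROCESS_TERMINATE                 0x0001u
#define PROCESS_VM_OPERATION              0x0008u
#define PROCESS_VM_READ                   0x0010u
#define PROCESS_VM_WRITE                  0x0020u
#define PROCESS_DUP_HANDLE                0x0040u
#define PROCESS_QUERY_LIMITED_INFORMATION 0x1000u

/* Rights removed from handles that target the protected process. */
#define STRIPPED_RIGHTS (PROCESS_VM_OPERATION | PROCESS_VM_READ | \
                         PROCESS_VM_WRITE | PROCESS_DUP_HANDLE)

/* Hypothetical stand-in for the information a pre-operation callback sees. */
typedef struct {
    uint32_t target_pid;              /* process the handle refers to */
    uint32_t caller_pid;              /* process requesting the handle */
    bool     kernel_handle;           /* models the KernelHandle flag */
    uint32_t original_desired_access; /* what was asked for (never modified) */
    uint32_t desired_access;          /* what will actually be granted */
} handle_request;

/* Clears the memory-access rights on handles to the protected process. */
void pre_operation(handle_request *req, uint32_t protected_pid)
{
    if (req->kernel_handle)
        return; /* handles opened by kernel code are left alone */
    if (req->target_pid != protected_pid)
        return; /* not our game */
    if (req->caller_pid == protected_pid)
        return; /* the game opening a handle to itself */
    req->desired_access &= ~STRIPPED_RIGHTS;
}
```

The open still succeeds, so the caller gets no immediate signal that anything happened. The failure only shows up later, when the handle is used for an operation that needs one of the removed rights.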

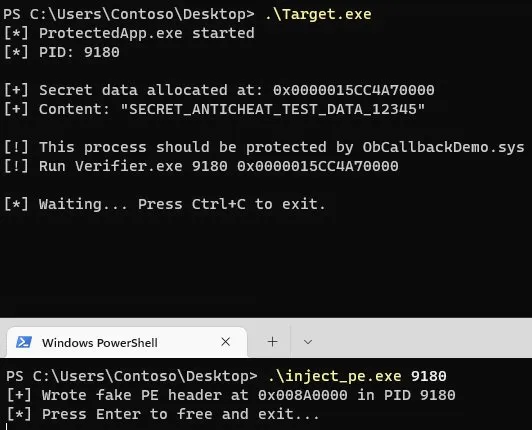

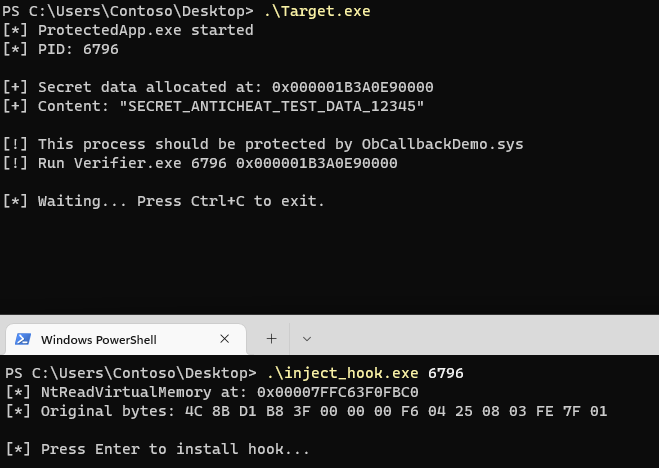

ObCallbackDemo.sys in action. DebugView shows the driver stripping handle access rights, Target.exe is running with secret data in memory, and Verifier.exe fails to read it with Access Denied.

PsSetCreateProcessNotifyRoutineEx

PsSetCreateProcessNotifyRoutineEx allows a driver to register a callback that fires on every process creation and termination event system-wide. The callback receives a PEPROCESS for the process, the PID, and a PPS_CREATE_NOTIFY_INFO structure containing details about the process being created (image name, command line, parent PID).

```c
VOID ProcessNotifyCallback(
    PEPROCESS Process,
    HANDLE ProcessId,
    PPS_CREATE_NOTIFY_INFO CreateInfo
)
{
    if (CreateInfo == NULL) {
        // Process is terminating
        HandleProcessTermination(Process, ProcessId);
        return;
    }

    // Process is being created
    if (CreateInfo->ImageFileName != NULL) {
        // Check if this is a known cheat process
        if (IsKnownCheatProcess(CreateInfo->ImageFileName)) {
            // Set an error status to prevent the process from launching
            CreateInfo->CreationStatus = STATUS_ACCESS_DENIED;
        }
    }
}

PsSetCreateProcessNotifyRoutineEx(ProcessNotifyCallback, FALSE);
```

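Notably, the Ex variant (introduced in Windows Vista SP1) provides the image file name and command line, which the original PsSetCreateProcessNotifyRoutine does not. The callback is called at PASSIVE_LEVEL. For a creation event it runs in the context of the thread that created the process; for a termination event it runs in the context of the last thread to exit the process. Registration fails with STATUS_ACCESS_DENIED unless the driver image was linked with the /INTEGRITYCHECK flag.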

Anti-cheats use this callback to detect cheat tool processes spawning on the system. If a known cheat launcher or injector process is created while the game is running, the anti-cheat can immediately flag this. Some implementations also set CreateInfo->CreationStatus to a failure code to outright prevent the process from launching.

PsSetCreateThreadNotifyRoutine

PsSetCreateThreadNotifyRoutine fires on every thread creation and termination system-wide. Anti-cheats use it specifically to detect thread creation in the protected game process. When a new thread is created in the game process, the callback fires and the anti-cheat can inspect the thread’s start address.

```c
VOID ThreadNotifyCallback(HANDLE ProcessId, HANDLE ThreadId, BOOLEAN Create)
{
    if (!Create) return;

    if (IsProtectedProcess(ProcessId)) {
        PETHREAD Thread = NULL;

        // The lookup can fail; Thread is only valid (and referenced) on success
        if (!NT_SUCCESS(PsLookupThreadByThreadId(ThreadId, &Thread))) {
            return;
        }

        // Get the thread start address - this is stored in ETHREAD
        PVOID StartAddress = PsGetThreadWin32StartAddress(Thread);

        // Check if start address is within a known module
        if (!IsAddressInKnownModule(StartAddress)) {
            // Thread started at an address with no backing module - suspicious
            FlagSuspiciousThread(Thread, StartAddress);
        }

        // Release the reference taken by PsLookupThreadByThreadId
        ObDereferenceObject(Thread);
    }
}
```

The call to PsLookupThreadByThreadId retrieves the ETHREAD pointer for the new thread. PsGetThreadWin32StartAddress returns the Win32 start address as seen by the process, which is distinct from the kernel-internal start address. Once finished with the thread object, ObDereferenceObject releases the reference acquired by PsLookupThreadByThreadId.

A thread created in the game process whose start address does not fall within any loaded module’s address range is a strong indicator of injected code. Legitimate threads start inside module code. An injected thread typically starts in shellcode or manually mapped PE code that has no module backing.
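The "does this start address fall inside a loaded module" test is a range lookup. Here is a minimal sketch in portable C, assuming the anti-cheat already maintains a table of module ranges for the game process (for example, built up from image-load notifications). The module_range type and both function names are hypothetical.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* One loaded module in the protected process (hypothetical bookkeeping). */
typedef struct {
    uint64_t    base; /* image base address */
    uint64_t    size; /* size of the mapped image in bytes */
    const char *name; /* for reporting only */
} module_range;

/* Returns the module containing addr, or NULL if no module backs it. */
const module_range *find_module_for_address(const module_range *mods,
                                            size_t count, uint64_t addr)
{
    for (size_t i = 0; i < count; i++) {
        /* Written as addr - base < size so base + size cannot overflow. */
        if (addr >= mods[i].base && addr - mods[i].base < mods[i].size)
            return &mods[i];
    }
    return NULL;
}

/* A start address with no backing module is treated as suspicious. */
bool is_suspicious_start_address(const module_range *mods, size_t count,
                                 uint64_t start_address)
{
    return find_module_for_address(mods, count, start_address) == NULL;
}
```

A linear scan is fine for the few hundred modules a game typically loads; a sorted array with binary search would be the obvious next step if the check ran on a hot path.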

PsSetLoadImageNotifyRoutine

PsSetLoadImageNotifyRoutine fires whenever an image (DLL or EXE) is mapped into any process. It provides the image file name and a PIMAGE_INFO structure containing the base address and size.

```c
VOID LoadImageCallback(
    PUNICODE_STRING FullImageName,
    HANDLE ProcessId,
    PIMAGE_INFO ImageInfo
)
{
    if (IsProtectedProcess(ProcessId)) {
        // A DLL was loaded into the protected game process
        // Verify it's on the allowlist or check its signature.
        // FullImageName can be NULL if the system could not obtain the name,
        // so an unnamed image is treated as not allowed (the report helper
        // is assumed to tolerate a NULL name).
        if (FullImageName == NULL || !IsAllowedModule(FullImageName)) {
            // Log and potentially act on this
            ReportSuspiciousModule(FullImageName, ImageInfo->ImageBase);
        }
    }
}
```

The callback runs at PASSIVE_LEVEL. It fires after the image is mapped but before its entry point executes, which gives the anti-cheat an opportunity to scan the image before any of its code runs.

CmRegisterCallbackEx

CmRegisterCallbackEx registers a callback for registry operations. Anti-cheats use this to monitor for registry modifications that might indicate cheats configuring themselves or attempting to modify anti-cheat settings.

```c
NTSTATUS RegistryCallback(
    PVOID CallbackContext,
    PVOID Argument1,  // REG_NOTIFY_CLASS
    PVOID Argument2   // Operation-specific data
)
{
    REG_NOTIFY_CLASS notifyClass = (REG_NOTIFY_CLASS)(ULONG_PTR)Argument1;

    if (notifyClass == RegNtPreSetValueKey) {
        PREG_SET_VALUE_KEY_INFORMATION info =
            (PREG_SET_VALUE_KEY_INFORMATION)Argument2;

        // Check if someone is modifying anti-cheat registry keys
        if (IsProtectedRegistryKey(info->Object)) {
            return STATUS_ACCESS_DENIED;
        }
    }
    return STATUS_SUCCESS;
}
```

MiniFilter Drivers for Filesystem Monitoring

A minifilter driver sits in the file system filter stack and intercepts I/O requests going to and from file system drivers. Anti-cheats use minifilters to monitor for cheat file drops (writing known cheat executables or DLLs to disk), to detect reads of their own driver files (which might indicate attempts to patch the on-disk driver binary before it is verified), and to enforce file access restrictions.

```c
FLT_PREOP_CALLBACK_STATUS PreOperationCallback(
    PFLT_CALLBACK_DATA Data,
    PCFLT_RELATED_OBJECTS FltObjects,
    PVOID *CompletionContext
)
{
    if (Data->Iopb->MajorFunction == IRP_MJ_WRITE) {
        // Check if the target file is a known cheat file name
        PFLT_FILE_NAME_INFORMATION nameInfo = NULL;
        NTSTATUS status = FltGetFileNameInformation(
            Data,
            FLT_FILE_NAME_NORMALIZED | FLT_FILE_NAME_QUERY_DEFAULT,
            &nameInfo);

        // The name query can fail; nameInfo is only valid on success
        if (!NT_SUCCESS(status)) {
            return FLT_PREOP_SUCCESS_NO_CALLBACK;
        }

        if (IsKnownCheatFileName(&nameInfo->Name)) {
            // Complete the request here with a failure status
            Data->IoStatus.Status = STATUS_ACCESS_DENIED;
            Data->IoStatus.Information = 0;
            FltReleaseFileNameInformation(nameInfo);
            return FLT_PREOP_COMPLETE;
        }
        FltReleaseFileNameInformation(nameInfo);
    }
    return FLT_PREOP_SUCCESS_NO_CALLBACK;
}
```

FltGetFileNameInformation retrieves the normalized file name for the target of the operation. FltReleaseFileNameInformation must be called to release the reference when done. Minifilter callbacks typically run at APC_LEVEL or PASSIVE_LEVEL, depending on the operation and the file system. This is important because many operations (like allocating paged pool or calling pageable functions) are not safe at DISPATCH_LEVEL or above.

4. Memory Protection and Scanning

The kernel driver can do far more than just register callbacks. It can actively scan the game process’s memory and the system-wide memory pool for artifacts of cheats.

Blocking Handle Access

As covered in the ObRegisterCallbacks section, the primary mechanism for protecting game memory from external reads and writes is stripping PROCESS_VM_READ and PROCESS_VM_WRITE from handles opened to the game process. This is effective against any cheat that uses standard Win32 APIs (ReadProcessMemory, WriteProcessMemory) because these ultimately call NtReadVirtualMemory and NtWriteVirtualMemory, which require appropriate handle access rights.

However, a kernel-mode cheat can bypass this entirely. It can call MmCopyVirtualMemory directly (a kernel function that is exported by ntoskrnl.exe but undocumented) or manipulate page table entries directly to access game memory without going through the handle-based access control system. This is why handle protection alone is insufficient and why kernel-level cheats require kernel-level anti-cheat responses.

Periodic Memory Integrity Hashing

Anti-cheats periodically hash the code sections (.text sections) of the game executable and its core DLLs. A baseline hash is computed at game start, and periodic re-hashes are compared against the baseline. If the hash changes, someone has written to game code, which is a strong indicator of code patching (commonly used to enable no-recoil, speed, or aimbot functionality by patching game logic).

```c
// Pseudocode for code section integrity checking
// (ComputeSHA256 and gBaselineHash are placeholders defined elsewhere)
BOOLEAN VerifyCodeSectionIntegrity(PEPROCESS Process, PVOID ModuleBase)
{
    BOOLEAN intact = TRUE;

    // Attach to process context to read its memory
    KAPC_STATE apcState;
    KeStackAttachProcess(Process, &apcState);

    // User memory can be freed or reprotected at any moment, so guard every access
    __try {
        // Parse PE headers to find .text section
        PIMAGE_NT_HEADERS ntHeaders = RtlImageNtHeader(ModuleBase);
        if (ntHeaders == NULL) {
            intact = FALSE;  // Headers missing or corrupted
            __leave;
        }
        PIMAGE_SECTION_HEADER section = IMAGE_FIRST_SECTION(ntHeaders);

        for (USHORT i = 0; i < ntHeaders->FileHeader.NumberOfSections; i++, section++) {
            if (memcmp(section->Name, ".text", 5) == 0) {
                PVOID sectionBase = (PVOID)((ULONG_PTR)ModuleBase + section->VirtualAddress);
                ULONG sectionSize = section->Misc.VirtualSize;

                // Compute hash of current code section contents
                UCHAR currentHash[32];
                ComputeSHA256(sectionBase, sectionSize, currentHash);

                // Compare against stored baseline hash
                if (memcmp(currentHash, gBaselineHash, 32) != 0) {
                    intact = FALSE;  // Code modification detected
                    break;
                }
            }
        }
    } __except (EXCEPTION_EXECUTE_HANDLER) {
        intact = FALSE;  // Memory became unreadable mid-scan
    }

    // Single exit path: always detach before returning
    KeUnstackDetachProcess(&apcState);
    return intact;
}
```

The KeStackAttachProcess / KeUnstackDetachProcess pattern is used to temporarily attach the calling thread to the target process’s address space, allowing the driver to read memory that is mapped into the game process without going through handle-based access controls. RtlImageNtHeader parses the PE headers from the in-memory image base.
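The listing above picks the section to hash by its name. Section names are only labels, so one possible refinement is to select sections by their characteristics instead. The following is a minimal user-space sketch of that idea, not taken from any particular anti-cheat: the `section_info` struct and both function names are hypothetical, while the flag values are the standard PE/COFF section characteristics.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdbool.h>

/* Section characteristic flags from the PE/COFF format. */
#define SCN_CNT_CODE     0x00000020u
#define SCN_MEM_EXECUTE  0x20000000u
#define SCN_MEM_WRITE    0x80000000u

/* Hypothetical, trimmed-down view of one section header. */
typedef struct {
    char     name[8];
    uint32_t virtual_address;
    uint32_t virtual_size;
    uint32_t characteristics;
} section_info;

/* Hash a section if it holds code and is not writable. Writable
   executable sections can change at run time, so a fixed baseline
   hash is not meaningful for them. */
static bool should_hash_section(const section_info *s)
{
    bool executable =
        (s->characteristics & (SCN_CNT_CODE | SCN_MEM_EXECUTE)) != 0;
    bool writable = (s->characteristics & SCN_MEM_WRITE) != 0;
    return executable && !writable;
}

/* Count how many sections of a module would be covered by the check. */
static size_t count_hashable_sections(const section_info *sections, size_t n)
{
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        if (should_hash_section(&sections[i]))
            count++;
    }
    return count;
}
```

With this filter a driver's `PAGE` section or a renamed code section is hashed just like `.text`, while data sections are skipped.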

Heuristic Scanning: Detecting Manually Mapped Code

The most interesting memory scanning is the heuristic detection of manually mapped code. When a legitimate DLL loads, it appears in the process’s PEB loader lists (such as InLoadOrderModuleList) and has a corresponding VAD_NODE entry with a MemoryAreaType that indicates the mapping came from a file. Manual mapping bypasses the normal loader, so the mapped code appears in memory as an anonymous private mapping or as a file-backed mapping with suspicious characteristics.

The key heuristic is: find all executable memory regions in the process, then cross-reference each one against the list of loaded modules. Executable memory that does not correspond to any loaded module is suspicious.
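The cross-reference step is a range lookup. Here is a minimal sketch of it in portable C, assuming the module list has already been collected into an array; the `module_range` struct and the function names are hypothetical and only illustrate the comparison.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdbool.h>

/* Hypothetical record for one loaded module: [base, base + size). */
typedef struct {
    uintptr_t base;
    size_t    size;
} module_range;

/* True if addr falls inside any module in the list. */
static bool address_in_module(uintptr_t addr,
                              const module_range *mods, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        /* Written as a subtraction so base + size cannot overflow. */
        if (addr >= mods[i].base && addr - mods[i].base < mods[i].size)
            return true;
    }
    return false;
}

/* True if the whole region lies inside a single loaded module.
   An executable region for which this returns false is the
   "suspicious" case described above. */
static bool region_backed_by_module(uintptr_t base, size_t size,
                                    const module_range *mods, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        if (base < mods[i].base)
            continue;
        size_t offset = base - mods[i].base;
        if (offset < mods[i].size && size <= mods[i].size - offset)
            return true;
    }
    return false;
}
```

A linear scan is enough for a sketch; with hundreds of modules and frequent queries, a sorted array with binary search would be the obvious next step.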

```c
// Enumerate the address space with ZwQueryVirtualMemory to find
// executable private (non-file-backed) regions
VOID ScanForManuallyMappedCode(PEPROCESS Process)
{
    // Any of the four executable protection values
    const ULONG executeMask = PAGE_EXECUTE | PAGE_EXECUTE_READ |
                              PAGE_EXECUTE_READWRITE | PAGE_EXECUTE_WRITECOPY;

    KAPC_STATE apcState;
    KeStackAttachProcess(Process, &apcState);

    PVOID baseAddress = NULL;
    MEMORY_BASIC_INFORMATION mbi;

    while (NT_SUCCESS(ZwQueryVirtualMemory(
        ZwCurrentProcess(),
        baseAddress,
        MemoryBasicInformation,
        &mbi,
        sizeof(mbi),
        NULL)))
    {
        if (mbi.State == MEM_COMMIT &&
            (mbi.Protect & executeMask) != 0 &&
            mbi.Type == MEM_PRIVATE)  // Private, not file-backed
        {
            // Executable private memory - not associated with any file mapping
            // This is a strong indicator of manually mapped code or shellcode
            ReportSuspiciousRegion(mbi.BaseAddress, mbi.RegionSize,
                "Executable private memory without file backing");
        }

        baseAddress = (PVOID)((ULONG_PTR)mbi.BaseAddress + mbi.RegionSize);
        if ((ULONG_PTR)baseAddress >= 0x7FFFFFFFFFFF) break;  // User space limit
    }

    KeUnstackDetachProcess(&apcState);
}
```

Each ZwQueryVirtualMemory call returns a MEMORY_BASIC_INFORMATION structure describing one region; advancing the base address by RegionSize after every call walks the whole user address space. The Type field distinguishes private allocations (MEM_PRIVATE) from file-backed mappings (MEM_IMAGE, MEM_MAPPED). BattlEye’s scanning approach, as documented by the secret.club and back.engineering analyses, involves scanning all memory regions of the protected process and specifically flagging executable regions without file backing. It also scans external processes’ memory pages looking for execution bit anomalies, specifically targeting cases where page protection flags have been changed programmatically to make otherwise non-executable memory executable (a common technique when shellcode is staged).

VAD Tree Walking

The VAD (Virtual Address Descriptor) tree is a kernel-internal structure that the memory manager uses to track all memory regions allocated in a process. Each VAD_NODE (which is actually an MMVAD structure in kernel terms) contains information about the region: its base address and size, its protection, whether it is file-backed (and if so, which file), and various flags.

Anti-cheats walk the VAD tree directly rather than relying on ZwQueryVirtualMemory, because the VAD tree cannot be trivially hidden from kernel mode in the same way that module lists can be manipulated. Walking the VAD:

```c
// Simplified VAD walker - actual offsets and node layouts are version-specific.
// The type names here follow the older MM_AVL_TABLE layout; newer builds expose
// VadRoot as an RTL_AVL_TREE whose nodes are RTL_BALANCED_NODE.
VOID WalkAVLTree(PMMADDRESS_NODE node);

VOID WalkVAD(PEPROCESS Process)
{
    // VadRoot is at a version-specific offset in EPROCESS
    // On Windows 11 23H2, this is at EPROCESS+0x7D8 (https://www.vergiliusproject.com/kernels/x64/windows-11/23h2/_EPROCESS)
    PMM_AVL_TABLE vadRoot = (PMM_AVL_TABLE)((ULONG_PTR)Process + EPROCESS_VAD_ROOT_OFFSET);
    WalkAVLTree(vadRoot->BalancedRoot.RightChild);
}

VOID WalkAVLTree(PMMADDRESS_NODE node)
{
    if (node == NULL) return;

    PMMVAD vad = (PMMVAD)node;

    // Check the VAD flags for suspicious characteristics
    // u.VadFlags.PrivateMemory = 1 and executable protection = suspicious
    if (vad->u.VadFlags.PrivateMemory &&
        IsExecutableProtection(vad->u.VadFlags.Protection))
    {
        // No subsection means no file is backing the region
        if (vad->Subsection == NULL)
        {
            ReportSuspiciousVAD(vad);
        }
    }

    WalkAVLTree(node->LeftChild);
    WalkAVLTree(node->RightChild);
}
```

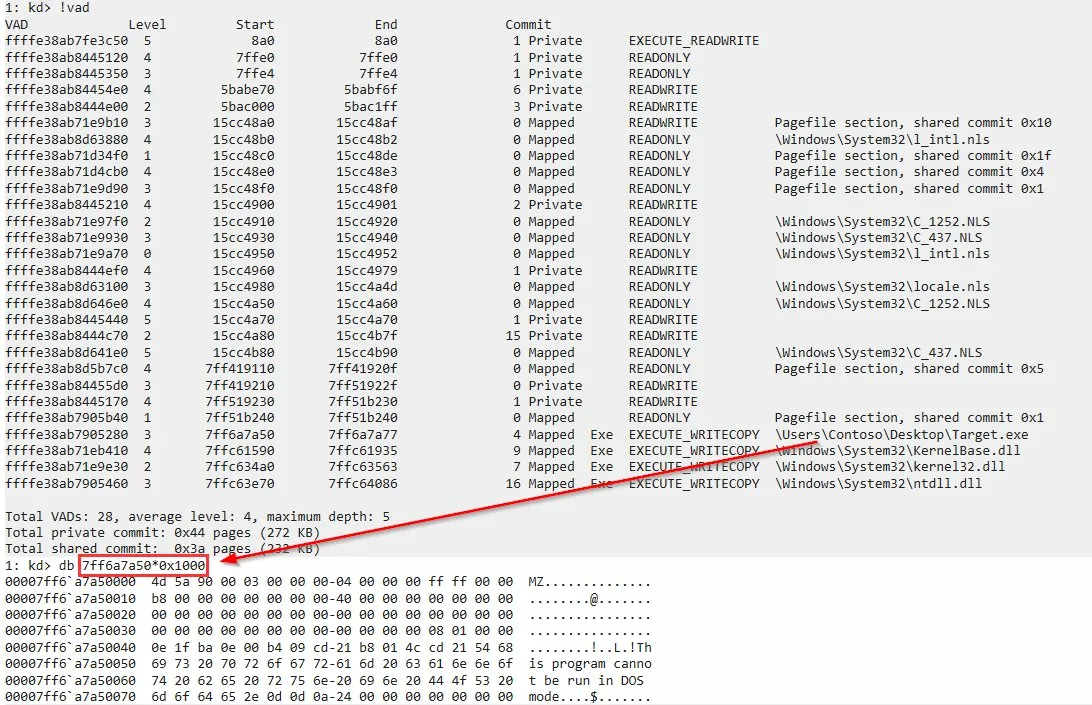

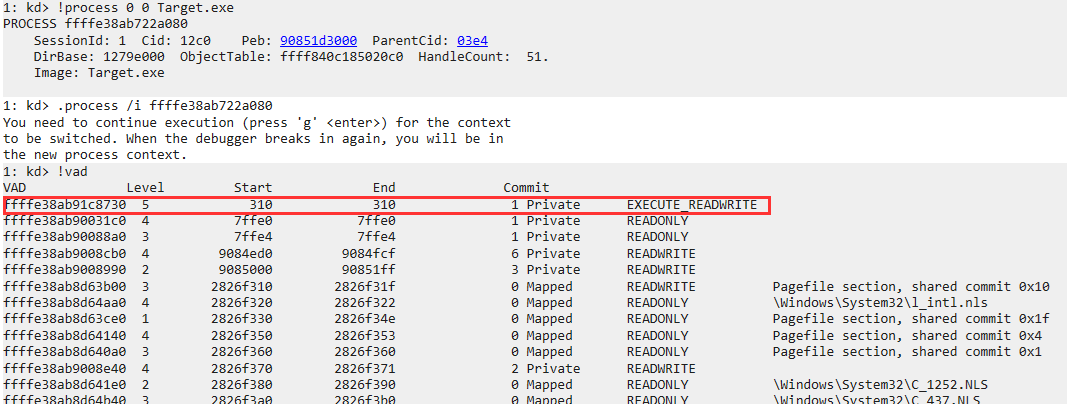

We can observe this detection in practice using WinDbg’s !vad command on a process with injected code.

The first entry is a Private EXECUTE_READWRITE region with no backing file, injected by our test tool. Every legitimate module shows as Mapped Exe with a full file path.


The power of VAD walking is that it catches manually mapped code even if the cheat has manipulated the PEB module list or the LDR_DATA_TABLE_ENTRY chain to hide itself. The VAD is a kernel structure that usermode code cannot modify directly.

5. Anti-Injection Detection

CreateRemoteThread Injection

The classic injection technique: call CreateRemoteThread in the target process with LoadLibraryA as the thread start address and the DLL path as the argument. This is trivially detectable via PsSetCreateThreadNotifyRoutine: the new thread’s start address will be LoadLibraryA (or rather its address in kernel32.dll), and the caller process is not the game itself.

A more subtle check is the CLIENT_ID of the creating thread. When CreateRemoteThread is called, the kernel records which process created the thread. The anti-cheat can check whether a thread in the game process was created by an external process, which is a reliable indicator of injection.

APC Injection

QueueUserAPC and the underlying NtQueueApcThread allow queuing an Asynchronous Procedure Call to a thread in any process for which the caller has THREAD_SET_CONTEXT access. When the target thread enters an alertable wait, the APC fires and executes arbitrary code in the target thread’s context.

Detection at the kernel level leverages the KAPC structure. Each thread has a kernel APC queue and a user APC queue. Anti-cheats can inspect the pending APC queue of game process threads to detect suspicious APC targets:

```c
// Check for suspicious pending APCs on a thread
VOID InspectThreadAPCQueue(PETHREAD Thread)
{
    // The user APC queue is at a version-specific offset in ETHREAD
    // ETHREAD::ApcState::ApcListHead[1] (index 1 = user APC list)
    PLIST_ENTRY apcList = (PLIST_ENTRY)((ULONG_PTR)Thread +
        ETHREAD_APC_STATE_OFFSET + KAPC_STATE_USER_APC_LIST_OFFSET);

    PLIST_ENTRY entry = apcList->Flink;
    while (entry != apcList) {
        PKAPC apc = CONTAINING_RECORD(entry, KAPC, ApcListEntry);

        // Check if the normal routine (user APC function)
        // points to an address without module backing
        if (apc->NormalRoutine != NULL &&
            !IsAddressInLoadedModule((PVOID)apc->NormalRoutine))
        {
            ReportSuspiciousAPC(Thread, apc->NormalRoutine);
        }

        entry = entry->Flink;
    }
}
```

NtMapViewOfSection-Based Injection

A sophisticated injection technique maps a shared section object (backed by a file or created with NtCreateSection) into the target process using NtMapViewOfSection. This bypasses CreateRemoteThread-based detection heuristics because no remote thread is created initially. The injected code is then typically triggered via APC or by modifying an existing thread’s context.

Detection is via the VAD: a section mapping that appears in the game process but was created by an external process will have a distinct pattern in the VAD. Specifically, the MMVAD::u.VadFlags.NoChange and related flags, combined with the file object backing the section (or lack thereof), can reveal this technique.

Reflective DLL Injection and Manual Mapping

Reflective DLL injection embeds a reflective loader inside the DLL that, when executed, maps the DLL into memory without using LoadLibrary. The DLL parses its own PE headers, resolves imports, applies relocations, and calls DllMain. The result is a fully functional DLL in memory that never appears in the InLoadOrderModuleList.

Detection: executable memory with a valid PE header (check for the MZ magic bytes and the PE\0\0 signature at the offset specified by e_lfanew) but no corresponding module list entry. This is a reliable indicator.

```c
// Check for PE headers in executable private memory
BOOLEAN HasValidPEHeader(PVOID base, SIZE_T size)
{
    if (size < sizeof(IMAGE_DOS_HEADER)) return FALSE;

    PIMAGE_DOS_HEADER dosHeader = (PIMAGE_DOS_HEADER)base;
    if (dosHeader->e_magic != IMAGE_DOS_SIGNATURE) return FALSE;

    // e_lfanew is a signed LONG: reject negative values, and make sure the
    // region can hold the NT headers before subtracting, so that a small
    // region cannot underflow the bounds check
    if (dosHeader->e_lfanew < 0) return FALSE;
    if (size < sizeof(IMAGE_NT_HEADERS)) return FALSE;
    if ((SIZE_T)dosHeader->e_lfanew > size - sizeof(IMAGE_NT_HEADERS)) return FALSE;

    PIMAGE_NT_HEADERS ntHeaders = (PIMAGE_NT_HEADERS)
        ((ULONG_PTR)base + dosHeader->e_lfanew);
    if (ntHeaders->Signature != IMAGE_NT_SIGNATURE) return FALSE;

    return TRUE;
}
```

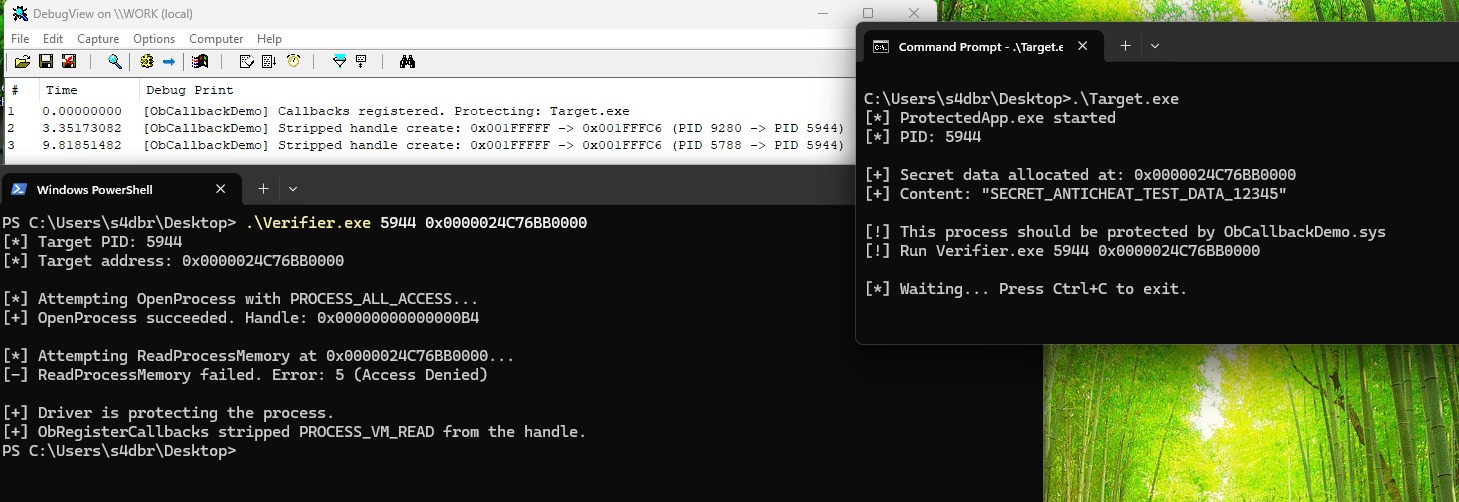

We can observe this in practice using a simple test tool that allocates an RWX region and writes a minimal PE header into a running process:

Walking the VAD tree with !vad reveals the injected region immediately. The first entry at 0x8A0 is a Private EXECUTE_READWRITE region with no backing file. Compare this to the legitimate Target.exe image at the bottom, which is Mapped Exe EXECUTE_WRITECOPY with a full file path. Dumping the legitimate module’s base with db confirms a complete PE header with the DOS stub:

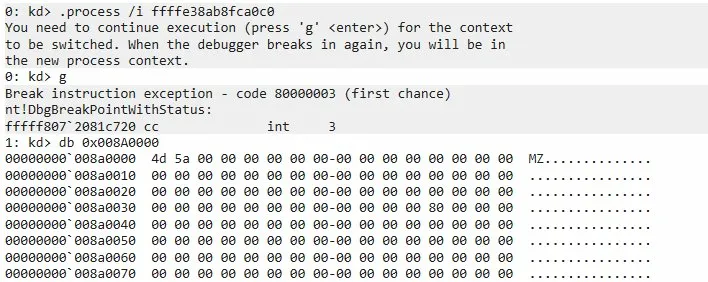

Dumping the injected region at 0x008A0000 also shows a valid MZ signature, but the rest of the header is mostly zeroes with no DOS stub. This is characteristic of manually mapped code:

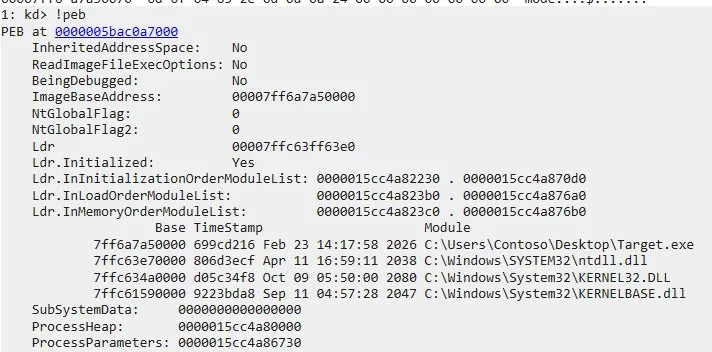

Finally, !peb confirms that the injected region does not appear in any of the module lists. The PEB only contains Target.exe, ntdll.dll, kernel32.dll, and KernelBase.dll. The region at 0x008A0000 is completely invisible to any usermode API that enumerates loaded modules:

Stack Walking with RtlWalkFrameChain

When BEDaisy wants to inspect a thread’s call stack, it uses an APC mechanism to capture the stack frames while the thread is in user mode. The APC fires in the game thread’s context and calls RtlWalkFrameChain or RtlCaptureStackBackTrace to capture the return address chain.

The back.engineering analysis of BEDaisy (and the Aki2k/BEDaisy GitHub research) documents this specifically: BEDaisy queues kernel APCs to threads in the protected process. The APC kernel routine runs at APC_LEVEL, captures the thread’s stack, and then analyzes each return address against the list of loaded modules. A return address pointing outside any loaded module is a strong indicator of injected code on the stack, which suggests the thread is currently executing injected code or returned from it.

```c
// Pseudocode for APC-based stack inspection
VOID KernelApcRoutine(
    PKAPC Apc,
    PKNORMAL_ROUTINE *NormalRoutine,
    PVOID *NormalContext,
    PVOID *SystemArgument1,
    PVOID *SystemArgument2
)
{
    // We're now running at APC_LEVEL in the context of the inspected thread
    PVOID frames[64];
    // Flags = 1 requests the user-mode stack; 0 would walk the kernel stack
    ULONG capturedFrames = RtlWalkFrameChain(frames, 64, 1);

    for (ULONG i = 0; i < capturedFrames; i++) {
        if (!IsAddressInKnownModule(frames[i])) {
            // Stack frame points outside any loaded module
            ReportSuspiciousStackFrame(frames[i]);
        }
    }
}
```

6. Hook Detection

Hooks are the primary mechanism by which usermode cheats intercept and manipulate the game’s interaction with the OS. Detecting them is a core anti-cheat function.

IAT Hook Detection

The Import Address Table (IAT) of a PE file contains the addresses of imported functions. When a process loads, the loader resolves these addresses by looking up each imported function in the exporting DLL and writing the function’s address into the IAT. An IAT hook overwrites one of these entries with a pointer to attacker-controlled code.

Detection is straightforward: for each IAT entry, compare the resolved address against what the on-disk export of the correct DLL says the address should be.

```c
VOID DetectIATHooks(PVOID moduleBase)
{
    ULONG importSize = 0;
    PIMAGE_IMPORT_DESCRIPTOR importDesc =
        RtlImageDirectoryEntryToData(moduleBase, TRUE,
            IMAGE_DIRECTORY_ENTRY_IMPORT, &importSize);
    if (importDesc == NULL) return;  // Module has no import directory

    while (importDesc->Name != 0) {
        PCHAR dllName = (PCHAR)((ULONG_PTR)moduleBase + importDesc->Name);
        PVOID importedDllBase = GetLoadedModuleBase(dllName);

        // Skip descriptors that cannot be checked: no import name table,
        // or the exporting DLL could not be located
        if (importDesc->OriginalFirstThunk == 0 || importedDllBase == NULL) {
            importDesc++;
            continue;
        }

        PULONG_PTR iat = (PULONG_PTR)((ULONG_PTR)moduleBase +
            importDesc->FirstThunk);
        PIMAGE_THUNK_DATA originalFirstThunk =
            (PIMAGE_THUNK_DATA)((ULONG_PTR)moduleBase +
                importDesc->OriginalFirstThunk);

        while (originalFirstThunk->u1.AddressOfData != 0) {
            // Imports by ordinal carry no name to look up
            if (!IMAGE_SNAP_BY_ORDINAL(originalFirstThunk->u1.Ordinal)) {
                PIMAGE_IMPORT_BY_NAME importByName =
                    (PIMAGE_IMPORT_BY_NAME)((ULONG_PTR)moduleBase +
                        originalFirstThunk->u1.AddressOfData);

                // Get the expected address from the exporting DLL
                PVOID expectedAddr = GetExportedFunctionAddress(
                    importedDllBase, importByName->Name);

                PVOID actualAddr = (PVOID)*iat;

                if (expectedAddr != actualAddr) {
                    ReportIATHook(dllName, importByName->Name,
                        expectedAddr, actualAddr);
                }
            }

            iat++;
            originalFirstThunk++;
        }
        importDesc++;
    }
}
```

RtlImageDirectoryEntryToData locates the import directory from the PE headers. The TRUE parameter specifies that the image is mapped (as opposed to a raw file on disk), which is correct when working with in-memory modules. The outer loop walks the IMAGE_IMPORT_DESCRIPTOR array, terminating on a zero Name field. The inner loop compares each resolved IAT entry against the expected export address.

Inline Hook Detection

Inline hooks patch the first few bytes of a function with a JMP (opcode 0xE9 for relative near jump, or 0xFF 0x25 for indirect jump through a memory pointer) to redirect execution to attacker code, which typically performs its modifications and then jumps back to the original code (a “trampoline” pattern).

Detection involves reading the first 16-32 bytes of each monitored function and checking for:

- 0xE9 (JMP rel32)
- 0xFF 0x25 (JMP [rip+disp32]) - common for 64-bit hooks
- 0x48 0xB8 ... 0xFF 0xE0 (MOV RAX, imm64; JMP RAX) - an absolute 64-bit jump sequence
- 0xCC (INT 3) - a software breakpoint, which can also be a hook point

The anti-cheat reads the on-disk PE file and compares the on-disk bytes of function prologues against what is currently in memory. Any discrepancy indicates patching.
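The byte patterns listed above can be checked with a few comparisons. This is a minimal sketch over a plain byte buffer, with hypothetical names throughout. Note that a pattern hit alone is only a hint, since legitimate code can also begin with a jump or be padded with INT 3; the comparison against the reference copy is what actually establishes patching.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

typedef enum {
    HOOK_NONE = 0,
    HOOK_JMP_REL32,    /* E9 rel32                 */
    HOOK_JMP_RIP_IND,  /* FF 25 disp32             */
    HOOK_MOV_RAX_JMP,  /* 48 B8 imm64, then FF E0  */
    HOOK_INT3          /* CC                       */
} hook_kind;

/* Classify the first bytes of a function against common hook shapes. */
static hook_kind classify_prologue(const uint8_t *p, size_t len)
{
    if (len >= 5 && p[0] == 0xE9)
        return HOOK_JMP_REL32;
    if (len >= 6 && p[0] == 0xFF && p[1] == 0x25)
        return HOOK_JMP_RIP_IND;
    if (len >= 12 && p[0] == 0x48 && p[1] == 0xB8 &&
        p[10] == 0xFF && p[11] == 0xE0)
        return HOOK_MOV_RAX_JMP;
    if (len >= 1 && p[0] == 0xCC)
        return HOOK_INT3;
    return HOOK_NONE;
}

/* Nonzero if the in-memory prologue differs from the reference bytes
   (for example, the same function read from the file on disk). */
static int prologue_modified(const uint8_t *in_memory,
                             const uint8_t *reference, size_t len)
{
    return memcmp(in_memory, reference, len) != 0;
}
```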

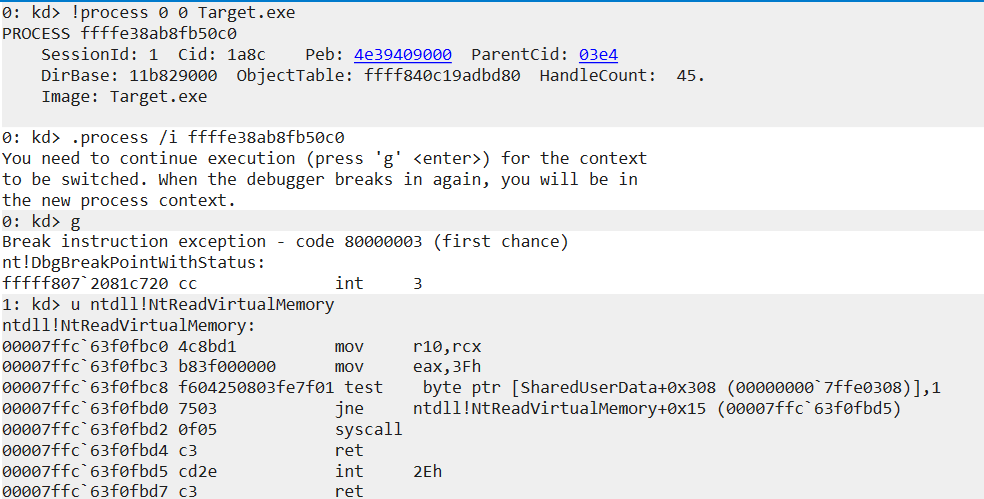

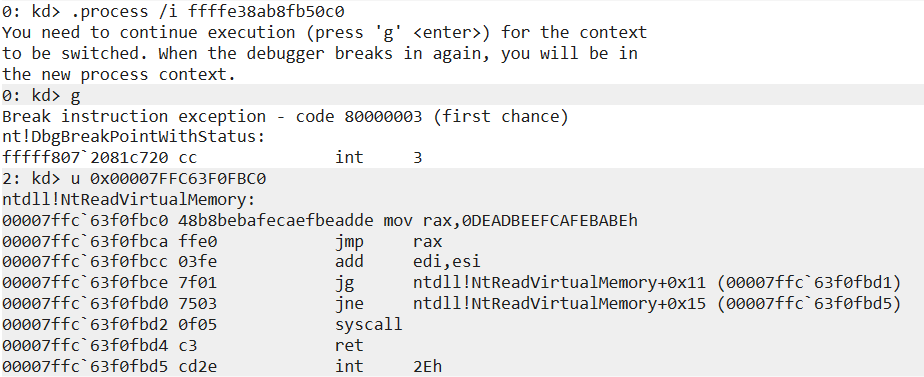

To demonstrate inline hook detection, we use a test tool that patches NtReadVirtualMemory in a running process with a MOV RAX; JMP RAX hook:

Before patching, the function prologue shows a clean syscall stub. mov r10, rcx saves the first argument, mov eax, 3Fh loads the syscall number, and syscall transitions to kernel mode:

After the hook is installed, the first 12 bytes are overwritten with mov rax, 0xDEADBEEFCAFEBABE; jmp rax, redirecting execution to an attacker-controlled address. An anti-cheat comparing these bytes against the on-disk copy of ntdll would immediately flag the mismatch:

SSDT Integrity Checking

The System Service Descriptor Table (SSDT) is the kernel’s dispatch table for syscalls. When a usermode process executes a syscall instruction, the kernel uses the syscall number (placed in EAX) to index into the SSDT and invoke the corresponding kernel function. Patching the SSDT redirects syscalls to attacker-controlled code.

SSDT hooking is a classic technique that became significantly harder after the introduction of PatchGuard (Kernel Patch Protection, KPP) in 64-bit Windows. PatchGuard monitors the SSDT (among many other structures) and triggers a CRITICAL_STRUCTURE_CORRUPTION bug check (0x109) if it detects modification. As a result, SSDT hooking is essentially dead in 64-bit Windows. However, anti-cheats still verify SSDT integrity as a defense in depth measure.
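On x64 the service table does not store raw pointers. Each entry is a 32-bit value whose upper 28 bits are a signed offset from the table base and whose low 4 bits encode the number of stack arguments. A verification pass therefore decodes each entry and checks that the target lands inside the kernel image. The sketch below shows only that arithmetic in portable C; the function names and the way the table and image bounds are obtained are assumptions, not a description of any specific anti-cheat.

```c
#include <stddef.h>
#include <stdint.h>

/* Decode one x64 service table entry into the routine address it names.
   The shift is written so that it behaves as an arithmetic shift even
   where right-shifting a negative value is implementation-defined. */
static uintptr_t ssdt_entry_target(uintptr_t table_base, int32_t entry)
{
    int32_t offset = entry >= 0 ? (entry >> 4) : ~((~entry) >> 4);
    return table_base + (uintptr_t)(intptr_t)offset;
}

/* Count entries whose target falls outside [image_base, image_base + size).
   A clean table is expected to yield zero. */
static size_t count_ssdt_outliers(uintptr_t table_base,
                                  const int32_t *entries, size_t n,
                                  uintptr_t image_base, size_t image_size)
{
    size_t outliers = 0;
    for (size_t i = 0; i < n; i++) {
        uintptr_t target = ssdt_entry_target(table_base, entries[i]);
        if (target < image_base || target - image_base >= image_size)
            outliers++;
    }
    return outliers;
}
```

Because the offset is only 28 bits wide and relative to the table, an attacker cannot point an entry at arbitrary memory without a trampoline near the kernel image, which is one more reason this technique fell out of favor.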

IDT and GDT Monitoring

The Interrupt Descriptor Table (IDT) maps interrupt vectors to their handler routines. The Global Descriptor Table (GDT) defines memory segments. Both are processor-level structures that cannot be easily protected by PatchGuard alone on all configurations.

A cheat operating at kernel level can attempt to replace IDT entries to intercept specific interrupts, which can be used for control flow interception or as a covert channel. Anti-cheats verify that IDT entries point to expected kernel locations:

```c
VOID VerifyIDTIntegrity(void)
{
    IDTR idtr;
    // Read the IDTR register. Each logical processor has its own IDTR,
    // so this only covers the CPU the code is currently running on.
    __sidt(&idtr);

    PIDT_ENTRY64 idt = (PIDT_ENTRY64)idtr.Base;

    for (int i = 0; i < 256; i++) {
        ULONG_PTR handler = GetIDTHandlerAddress(&idt[i]);

        // Verify handler is in ntoskrnl or a known driver address range
        if (!IsKernelCodeAddress(handler)) {
            ReportIDTModification(i, handler);
        }
    }
}
```
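GetIDTHandlerAddress is left undefined in the listing above. In 64-bit mode each IDT entry is a 16-byte gate descriptor that splits the handler address into three fields, so the helper only has to stitch them back together. A minimal sketch, with a hypothetical struct name and field names that follow the layout in the Intel manuals:

```c
#include <stdint.h>

/* 16-byte interrupt/trap gate descriptor as used in 64-bit mode. */
typedef struct {
    uint16_t offset_low;   /* handler address bits 0..15   */
    uint16_t selector;     /* code segment selector        */
    uint8_t  ist;          /* bits 0..2: IST index         */
    uint8_t  type_attr;    /* gate type, DPL, present bit  */
    uint16_t offset_mid;   /* handler address bits 16..31  */
    uint32_t offset_high;  /* handler address bits 32..63  */
    uint32_t reserved;
} idt_gate64;

/* Reassemble the 64-bit handler address from its three pieces. */
static uint64_t idt_gate_handler(const idt_gate64 *gate)
{
    return (uint64_t)gate->offset_low |
           ((uint64_t)gate->offset_mid << 16) |
           ((uint64_t)gate->offset_high << 32);
}

/* Bit 7 of the type/attribute byte is the Present flag. */
static int idt_gate_present(const idt_gate64 *gate)
{
    return (gate->type_attr & 0x80) != 0;
}
```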

Detecting Direct Syscall Usage by Cheats

A common evasion technique is for cheats to perform syscalls directly (using the syscall instruction with the appropriate syscall number) rather than going through ntdll.dll functions. This bypasses usermode hooks placed in ntdll. Anti-cheats detect this by monitoring threads within the game process for syscall instruction execution from unexpected code locations, and by checking whether ntdll functions that should be called are actually being called with expected frequency and patterns.

7. Driver-Level Protections

Detecting Unsigned and Test-Signed Drivers

On a properly configured Windows system with Secure Boot enabled, all kernel drivers must be signed by a certificate trusted by Microsoft. Test signing mode (enabled with bcdedit /set testsigning on) allows loading self-signed drivers and is a common development and cheat-deployment technique.

Anti-cheats detect test signing mode by reading the Windows boot configuration and by checking the kernel variable that reflects whether DSE is currently enforced. Some anti-cheats refuse to launch if test signing is enabled.
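The same state is also visible without touching kernel variables: NtQuerySystemInformation with the SystemCodeIntegrityInformation class fills a SYSTEM_CODEINTEGRITY_INFORMATION structure whose CodeIntegrityOptions field is a set of flags. The sketch below covers only the decoding step for the two flags relevant here (CODEINTEGRITY_OPTION_ENABLED and CODEINTEGRITY_OPTION_TESTSIGN); the verdict enum and function name are hypothetical, and a real check would combine this with the other signals mentioned above.

```c
#include <stdint.h>

#define CI_OPTION_ENABLED   0x01u  /* CODEINTEGRITY_OPTION_ENABLED  */
#define CI_OPTION_TESTSIGN  0x02u  /* CODEINTEGRITY_OPTION_TESTSIGN */

typedef enum {
    CI_OK = 0,        /* enforcement on, test signing off */
    CI_DISABLED,      /* code integrity not enforced      */
    CI_TEST_SIGNING   /* test-signed drivers may load     */
} ci_verdict;

/* Interpret the CodeIntegrityOptions value returned by the query. */
static ci_verdict evaluate_ci_options(uint32_t options)
{
    if (options & CI_OPTION_TESTSIGN)
        return CI_TEST_SIGNING;
    if (!(options & CI_OPTION_ENABLED))
        return CI_DISABLED;
    return CI_OK;
}
```

A value read from user mode can be forged by kernel-level code, which is why it is treated here as one input among several.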

The SeValidateImageHeader and SeValidateImageData functions in the kernel validate driver signatures. Anti-cheats can inspect loaded driver objects and verify that their IMAGE_INFO_EX ImageSignatureType and ImageSignatureLevel fields reflect proper signing.

BYOVD Attacks

Bring Your Own Vulnerable Driver is the dominant technique for loading unsigned kernel code in 2024-2026. The attack works as follows:

- The attacker finds a legitimate, signed driver with a vulnerability (typically a dangerous IOCTL handler that allows arbitrary kernel memory reads/writes, or that calls MmMapIoSpace with attacker-controlled parameters).

- The attacker loads this legitimate driver (which passes DSE because it has a valid signature).

- The attacker exploits the vulnerability in the legitimate driver to achieve arbitrary kernel code execution.

- Using that kernel execution, the attacker disables DSE or directly maps their unsigned cheat driver.

Common BYOVD targets have included drivers from MSI, Gigabyte, ASUS, and various hardware vendors. These drivers often have IOCTL handlers that expose direct physical memory read/write capability, which is all an attacker needs.

Anti-Cheat Driver Blocklists

The primary defense against BYOVD is a blocklist of known-vulnerable drivers. The Microsoft Vulnerable Driver Blocklist (maintained in DriverSiPolicy.p7b) is built into Windows and distributed via Windows Update. Anti-cheats maintain their own, more aggressive blocklists.

Vanguard in particular is known for actively comparing the set of loaded drivers against its blocklist and refusing to allow the protected game to launch if a blocklisted driver is present. Timing matters here: some BYOVD attacks load the vulnerable driver, use it immediately, and then unload it, so a scan that runs only at game launch sees only the drivers still loaded at that moment. A launch-time pre-scan covers most cases, and loading at boot, as Vanguard does, narrows the remaining window.

PiDDBCache and PiDDBLock

This is one of the more interesting internals that kernel cheat developers and anti-cheat engineers both care deeply about.

PiDDBCacheTable is a kernel-internal AVL tree that caches information about previously loaded drivers. When a driver is loaded, the kernel stores an entry keyed by the driver’s TimeDateStamp (from the PE header) and SizeOfImage. This cache is used to quickly look up whether a driver has been seen before. The structure is an RTL_AVL_TABLE protected by PiDDBLock (an ERESOURCE lock).

Cheat developers who manually map a driver without going through the normal load path try to erase or modify the corresponding PiDDBCacheTable entry to conceal that their driver was ever loaded. Anti-cheats detect this by:

- Verifying the consistency of the PiDDBCacheTable: if a driver is in memory (found via pool tag scanning or other means) but has no PiDDBCacheTable entry, the entry was probably scrubbed.

- Monitoring the PiDDBLock for unexpected acquisitions from non-kernel threads.

- Comparing the timestamp/size combinations of all known loaded drivers against the PiDDBCacheTable entries.

```c
// Locating PiDDBCacheTable (must locate via signature scan since not exported)
// This is version-specific and fragile; anti-cheats maintain multiple signatures
BOOLEAN FindPiDDBCacheTable(PVOID *TableAddress) {
    // Pattern to locate PiDDBCacheTable in ntoskrnl
    // This is a simplified illustration - real implementations use robust pattern matching.
    // On its own, 48 8D 0D matches every "lea rcx, [rip+disp32]"; a real signature
    // also includes the surrounding bytes of the referencing function.
    static const UCHAR pattern[] = { 0x48, 0x8D, 0x0D }; // LEA RCX, [RIP+...]

    PVOID ntoskrnl = GetKernelModuleBase("ntoskrnl.exe");
    if (!ntoskrnl) {
        return FALSE;
    }

    PVOID match = FindPattern(ntoskrnl, pattern, sizeof(pattern), NTOSKRNL_TEXT_RANGE);
    if (match) {
        // Extract the RIP-relative offset (disp32 follows the 3 opcode bytes)
        INT32 offset = *(INT32*)((ULONG_PTR)match + 3);
        // Target = address of the next instruction (match + 7) + displacement
        *TableAddress = (PVOID)((ULONG_PTR)match + 7 + offset);
        return TRUE;
    }
    return FALSE;
}
```

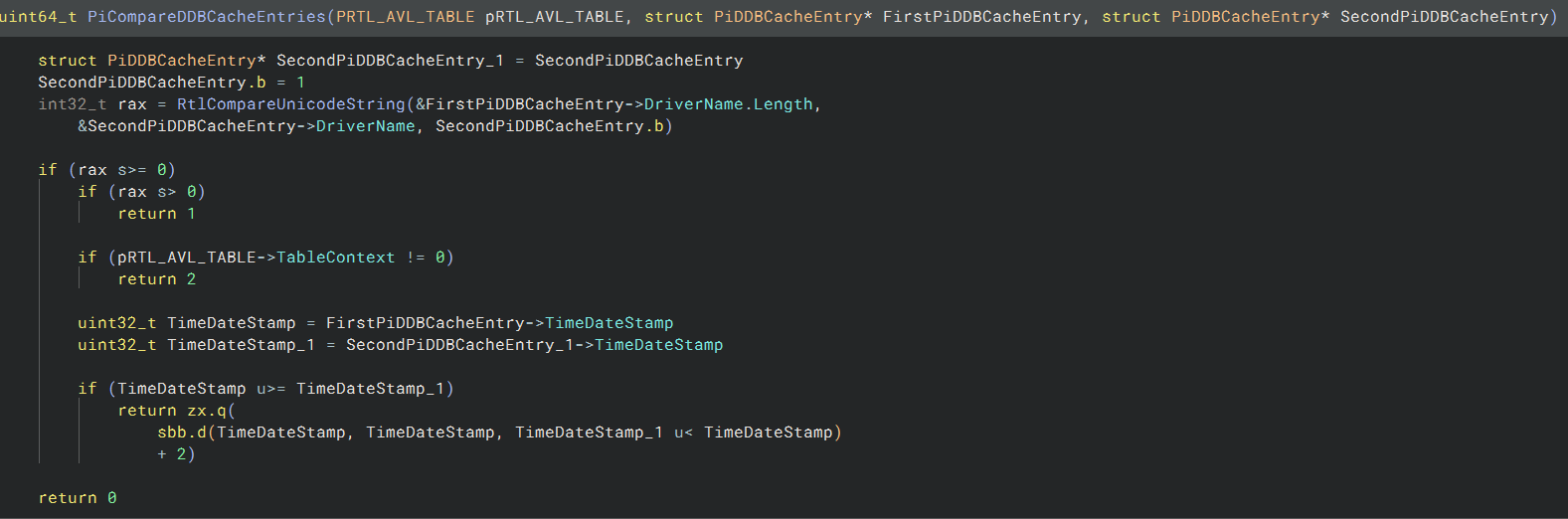

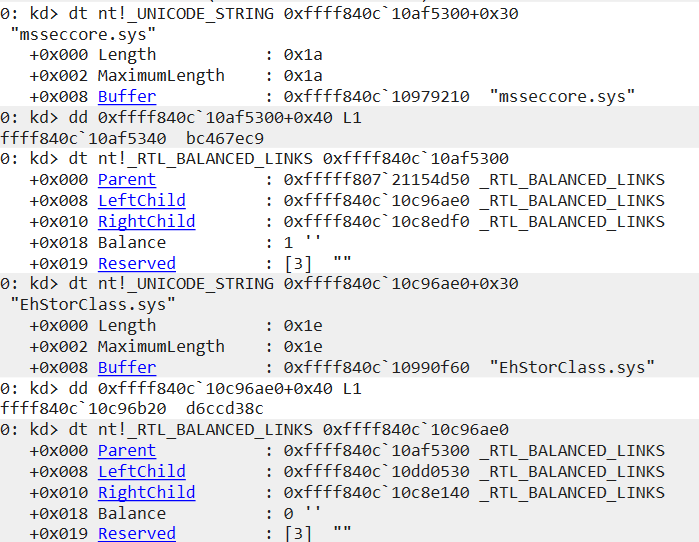

Reversing PiCompareDDBCacheEntries

PiDDBCacheTable is not exported and PiDDBCacheEntry is not in public symbols. To interact with the cache, we need to reverse the entry structure. The compare routine is the best starting point since it directly accesses the fields used for ordering.

The decompiled output reveals the structure layout. The function receives two PiDDBCacheEntry pointers and first compares their DriverName fields (a UNICODE_STRING at offset 0x10) using RtlCompareUnicodeString. If the names are equal and TableContext is non-zero, the entries are considered equal. Otherwise, it falls through to comparing the TimeDateStamp field (a ULONG at offset 0x20). This gives us the recovered structure:

```c
// Entry data as stored after the node's RTL_BALANCED_LINKS header (0x20 bytes)
struct PiDDBCacheEntry {
    LIST_ENTRY     List;          // 0x00 - list links (0x10 bytes)
    UNICODE_STRING DriverName;    // 0x10 - driver filename (from compare routine offset)
    ULONG          TimeDateStamp; // 0x20 - PE header timestamp (secondary sort key)
};
```

Walking the AVL Tree

PiDDBCacheTable is an RTL_AVL_TABLE, a self-balancing binary search tree. Each node in the tree starts with an _RTL_BALANCED_LINKS header containing Parent, LeftChild, and RightChild pointers. The actual PiDDBCacheEntry data sits immediately after this header.

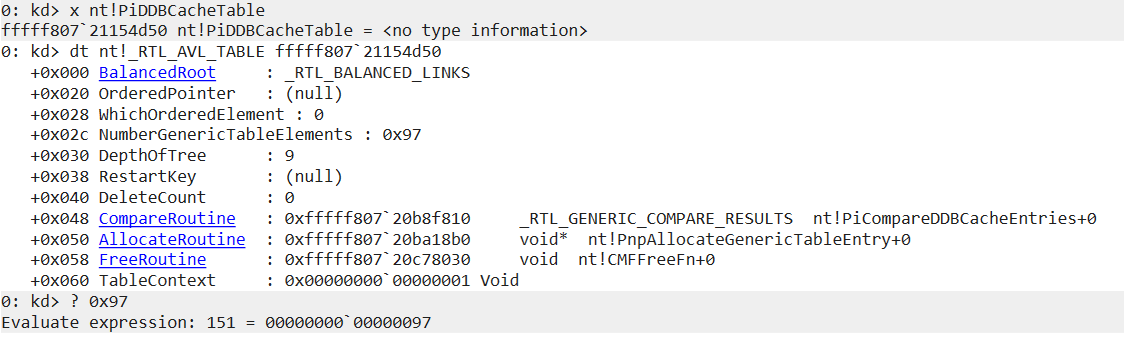

To enumerate entries, we start by resolving the table address. PiDDBCacheTable is not exported, so anti-cheats locate it via signature scanning in ntoskrnl.exe. In WinDbg with symbols loaded, we can resolve it directly:

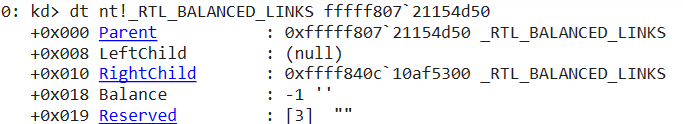

The table contains 151 cached driver entries with a tree depth of 9. The CompareRoutine points to PiCompareDDBCacheEntries, confirming this is the right table. The BalancedRoot is the entry point into the tree. Its RightChild gives us the first real node:

From each node, the entry data starts at offset 0x20 (past the _RTL_BALANCED_LINKS header). Adding our recovered offsets: DriverName at node+0x30, TimeDateStamp at node+0x40. Following the LeftChild and RightChild pointers lets us walk the entire tree:
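The same walk can be sketched in code. This is a user-mode simulation rather than kernel code: `BalancedLinks` mirrors the Parent/LeftChild/RightChild layout of _RTL_BALANCED_LINKS, while `Node`, its simplified payload (a C string and a timestamp instead of the real entry layout), and `collect_timestamps` are names I made up for illustration.

```c
#include <stddef.h>
#include <stdint.h>

/* Mirrors the pointer layout of _RTL_BALANCED_LINKS. */
typedef struct BalancedLinks {
    struct BalancedLinks *Parent;
    struct BalancedLinks *LeftChild;
    struct BalancedLinks *RightChild;
    char                  Balance;
    unsigned char         Reserved[3];
} BalancedLinks;

/* Simplified node: header first, then a stand-in for the entry data. */
typedef struct {
    BalancedLinks links;
    const char   *name;       /* stand-in for DriverName */
    uint32_t      timestamp;  /* stand-in for TimeDateStamp */
} Node;

/* Recursive in-order traversal: left subtree, this node, right subtree. */
static void walk_in_order(const BalancedLinks *links,
                          uint32_t *out, size_t cap, size_t *count)
{
    if (links == NULL)
        return;
    walk_in_order(links->LeftChild, out, cap, count);
    if (*count < cap)
        out[*count] = ((const Node *)links)->timestamp;
    (*count)++;
    walk_in_order(links->RightChild, out, cap, count);
}

/* BalancedRoot is a sentinel; the first real node hangs off its RightChild.
   Returns the number of entries visited and stores up to `cap` timestamps. */
size_t collect_timestamps(const BalancedLinks *balanced_root,
                          uint32_t *out, size_t cap)
{
    size_t count = 0;
    walk_in_order(balanced_root->RightChild, out, cap, &count);
    return count;
}
```

Recursion keeps the sketch short. In a real driver an iterative walk is the safer choice because kernel stacks are small, and the table would have to be read while holding PiDDBLock.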

A cheat developer who manually maps a kernel driver would try to find and remove their entry from this tree to avoid detection. An anti-cheat that detects a driver in memory (via pool tag scanning or other means) but finds no corresponding PiDDBCacheTable entry knows the entry was scrubbed.

MmUnloadedDrivers

MmUnloadedDrivers is a kernel array (also not exported) that maintains a circular buffer of the last 50 unloaded drivers, storing their name, start address, end address, and unload timestamp. This structure allows debugging and forensics of driver activity.

Cheat developers who successfully load and then unload a kernel driver often try to zero out or corrupt their entry in MmUnloadedDrivers to hide traces. Anti-cheats detect this by:

- Maintaining their own shadow copy of expected MmUnloadedDrivers entries.
- Detecting anomalous zero-filled entries in the middle of the circular buffer (a signature of deliberate erasure).
- Cross-referencing MmUnloadedDrivers against other kernel timestamps and logs.
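The zero-filled-entry check is the easiest of these to illustrate. The sketch below runs on a user-mode copy of the buffer; `UnloadedEntry` is a simplified stand-in for the kernel's record (name length instead of a full UNICODE_STRING), and `count_scrubbed_entries` and its `written` parameter are hypothetical names. It assumes the caller knows how many slots the kernel has filled so far.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define UNLOADED_SLOTS 50  /* size of the circular buffer, per the text above */

/* Simplified stand-in for one unloaded-driver record. */
typedef struct {
    uint16_t name_length;    /* stand-in for the name's UNICODE_STRING.Length */
    uint64_t start_address;
    uint64_t end_address;
    uint64_t unload_time;
} UnloadedEntry;

/* True when every field of the entry has been zeroed. */
static bool is_blank(const UnloadedEntry *e)
{
    return e->name_length == 0 && e->start_address == 0 &&
           e->end_address == 0 && e->unload_time == 0;
}

/* Counts blank entries among the slots that should already be populated.
   `written` is the number of slots filled so far (capped at 50). */
size_t count_scrubbed_entries(const UnloadedEntry *buf, size_t written)
{
    size_t scrubbed = 0;
    if (written > UNLOADED_SLOTS)
        written = UNLOADED_SLOTS;
    for (size_t i = 0; i < written; i++) {
        if (is_blank(&buf[i]))
            scrubbed++;
    }
    return scrubbed;
}
```

Any non-zero result means a populated slot was wiped. A more careful check would also flag partially erased entries, for example a record with valid addresses but an empty name.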

BigPool Allocations

When a kernel allocation exceeds approximately 4KB (more precisely, when it exceeds a threshold managed by the pool allocator), it is managed as a “big pool allocation” tracked in the PoolBigPageTable. Anti-cheats scan this table to find memory allocations that were made by manually mapped drivers. A manually mapped driver typically makes large allocations for its code and data sections; these show up in the big pool table with the allocation address but without a corresponding loaded driver.

The technique is to enumerate all big pool entries, then cross-reference each allocation’s address against the list of loaded driver address ranges. Allocations in no driver’s range that are the right size to be driver code sections are suspicious.
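A minimal sketch of that cross-reference, again in user-mode C over data already copied out of the kernel. `Range`, `find_orphan_allocations`, and the `min_size` cutoff are illustrative assumptions; how the big pool entries and module ranges are obtained is out of scope here.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* An address range: a big pool allocation or a loaded driver image. */
typedef struct {
    uint64_t base;
    uint64_t size;
} Range;

/* True if addr falls inside any of the given ranges. */
static bool inside_any(uint64_t addr, const Range *ranges, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        if (addr >= ranges[i].base && addr - ranges[i].base < ranges[i].size)
            return true;
    }
    return false;
}

/* Flags allocations of at least min_size bytes that lie in no module's range.
   Stores up to `cap` indices in out_idx and returns the total number found. */
size_t find_orphan_allocations(const Range *allocs, size_t n_allocs,
                               const Range *modules, size_t n_modules,
                               uint64_t min_size,
                               size_t *out_idx, size_t cap)
{
    size_t found = 0;
    for (size_t i = 0; i < n_allocs; i++) {
        if (allocs[i].size < min_size)
            continue;  /* too small to hold a driver section */
        if (inside_any(allocs[i].base, modules, n_modules))
            continue;  /* accounted for by a loaded driver */
        if (found < cap)
            out_idx[found] = i;
        found++;
    }
    return found;
}
```

The results are candidates for closer inspection, not verdicts: plenty of legitimate kernel components make large pool allocations.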

8. Anti-Debug Protections

Anti-cheat code itself is a high-value target for reverse engineering. Reverse engineers analyzing the anti-cheat driver need to use kernel debuggers, which anti-cheats aggressively detect.

NtQueryInformationProcess Checks

At the usermode level (in the game-injected DLL), the anti-cheat uses NtQueryInformationProcess with multiple information classes:

- ProcessDebugPort (7): Returns a non-zero value if a debugger is attached via DebugActiveProcess. A kernel driver can spoof this by hooking NtQueryInformationProcess, but the check is done in the kernel driver itself as well.
- ProcessDebugObjectHandle (30): Returns a handle to the debug object if one exists.
- ProcessDebugFlags (31): Returns the inverse of the NoDebugInherit flag, so a returned value of 0 indicates that a debugger is present.
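To make the three checks concrete, here is a small sketch of how their results could be combined. It deliberately leaves out the NtQueryInformationProcess calls themselves and only interprets values already returned; `DebugQueryResults` and `debugger_indicated` are hypothetical names. The one detail not stated above is that the ProcessDebugObjectHandle query fails with STATUS_PORT_NOT_SET when no debug object exists.

```c
#include <stdbool.h>
#include <stdint.h>

/* NTSTATUS returned by the ProcessDebugObjectHandle query when the process
   has no debug object. */
#define STATUS_PORT_NOT_SET ((int32_t)0xC0000353)

/* Hypothetical container for the raw results of the three queries. */
typedef struct {
    uintptr_t debug_port;           /* ProcessDebugPort (7) output */
    int32_t   debug_object_status;  /* NTSTATUS of the ProcessDebugObjectHandle (30) query */
    uint32_t  debug_flags;          /* ProcessDebugFlags (31) output */
} DebugQueryResults;

/* True if any of the three results points to an attached debugger. */
bool debugger_indicated(const DebugQueryResults *r)
{
    if (r->debug_port != 0)
        return true;   /* a debug port is set */
    if (r->debug_object_status >= 0)
        return true;   /* query succeeded, so a debug object exists */
    if (r->debug_flags == 0)
        return true;   /* inverse of NoDebugInherit is 0 */
    return false;
}
```

On a clean process the expected inputs are a zero port, `STATUS_PORT_NOT_SET`, and flags equal to 1.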

Kernel Debugger Detection

The kernel driver checks the kernel-exported variables KdDebuggerEnabled and KdDebuggerNotPresent. On a system with WinDbg (or any kernel debugger) attached, KdDebuggerEnabled is TRUE and KdDebuggerNotPresent is FALSE.

```c
BOOLEAN IsKernelDebuggerPresent(void) {
    // KdDebuggerEnabled and KdDebuggerNotPresent are exported by ntoskrnl
    // as pointers to BOOLEAN, hence the dereferences
    if (*KdDebuggerEnabled && !*KdDebuggerNotPresent) {
        return TRUE;
    }

    // Additional check: attempt a debug break and see if it's handled
    // More sophisticated: check specific kernel structures

    return FALSE;
}
```

Some anti-cheats go further and directly inspect the KDDEBUGGER_DATA64 structure and the shared kernel data page (KUSER_SHARED_DATA) for debugger-related flags.

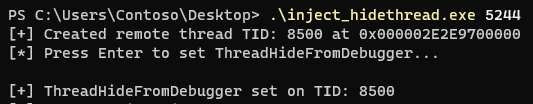

Thread Hiding Detection

NtSetInformationThread with ThreadHideFromDebugger (17) sets a flag in the thread’s ETHREAD structure (CrossThreadFlags.HideFromDebugger). Once set, the kernel will not deliver debug events for that thread to any attached debugger. The thread becomes essentially invisible to WinDbg: breakpoints in the thread do not trigger debugger notification, exceptions are not forwarded.

Anti-cheats use this to protect their own threads. However, they also detect if cheats are using it to hide their own injected threads. The detection method is to enumerate all threads in the system via a kernel enumeration (not via usermode APIs that could be hooked) and check the HideFromDebugger bit in CrossThreadFlags for each thread. A hidden thread in the game process that the anti-cheat did not itself hide is a red flag.

```c
// Check CrossThreadFlags for HideFromDebugger
#define PS_CROSS_THREAD_FLAGS_HIDEFROMDEBUGGER 0x4

VOID CheckThreadDebugVisibility(PETHREAD Thread) {
    // CrossThreadFlags is at a version-specific offset in ETHREAD
    ULONG crossFlags = *(ULONG*)((ULONG_PTR)Thread + ETHREAD_CROSS_THREAD_FLAGS_OFFSET);

    if (crossFlags & PS_CROSS_THREAD_FLAGS_HIDEFROMDEBUGGER) {
        // Thread is hidden from debuggers
        // If we didn't hide it, flag it
        if (!IsAntiCheatOwnedThread(Thread)) {
            ReportHiddenThread(Thread);
        }
    }
}
```

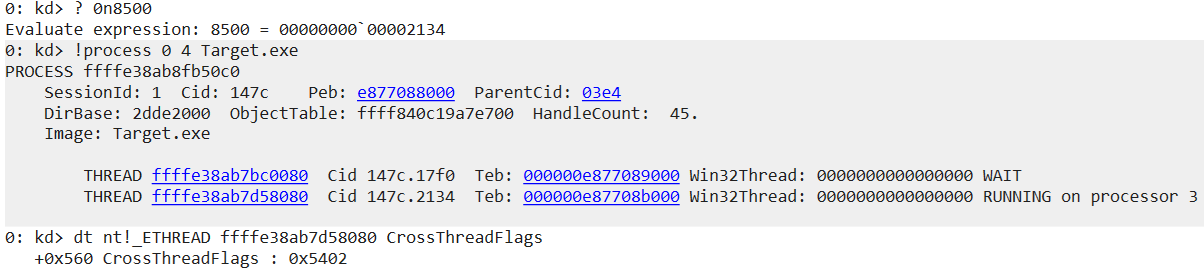

To demonstrate this detection, we use a test tool that creates a remote thread in Target.exe and then sets ThreadHideFromDebugger on it:

In WinDbg, we convert the decimal TID to hex, locate the thread in the process, and inspect its CrossThreadFlags. Before setting the flag, the value is 0x5402 with bit 2 (HideFromDebugger) clear:

After calling NtSetInformationThread with ThreadHideFromDebugger, the value changes to 0x5406. Bit 2 is now set, making this thread invisible to any attached debugger:

An anti-cheat enumerating threads in the game process would check this bit on every thread. A hidden thread that the anti-cheat did not create itself is a strong indicator of injected cheat code.

Timing-Based Anti-Debug

Single-step debugging (via the TF flag in EFLAGS) and hardware breakpoints dramatically increase the time between instruction executions. Anti-cheats use RDTSC instruction-based timing to detect this:

```c
UINT64 before = __rdtsc();
// Execute a fixed number of operations
volatile ULONG dummy = 0;
for (int i = 0; i < 1000; i++) {
    dummy += i;
}
UINT64 after = __rdtsc();

UINT64 elapsed = after - before;
if (elapsed > EXPECTED_MAXIMUM_CYCLES) {
    // Execution was slowed - likely single-stepping or a breakpoint
    ReportDebuggerDetected();
}
```

The threshold EXPECTED_MAXIMUM_CYCLES is calibrated based on known CPU behavior. Single-stepping can add thousands of cycles per instruction (due to debug exception handling), making the timing discrepancy obvious.
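A single measurement is noisy, since an interrupt or context switch inside the timed region also inflates the count. One plausible way to reduce false positives, and this is my assumption rather than something I have confirmed in a specific anti-cheat, is to repeat the measurement and compare the median against the threshold. `median_cycles` and `timing_suspicious` are hypothetical helpers, and the samples are passed in so the sketch does not depend on RDTSC.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* qsort comparator for unsigned 64-bit cycle counts. */
static int cmp_u64(const void *a, const void *b)
{
    uint64_t x = *(const uint64_t *)a;
    uint64_t y = *(const uint64_t *)b;
    return (x > y) - (x < y);
}

/* Sorts the samples in place and returns the median (upper median for even n).
   The caller must pass n > 0. */
uint64_t median_cycles(uint64_t *samples, size_t n)
{
    qsort(samples, n, sizeof samples[0], cmp_u64);
    return samples[n / 2];
}

/* Flags the run only when the typical sample exceeds the threshold, so one
   sample inflated by an interrupt does not trigger a report. */
bool timing_suspicious(uint64_t *samples, size_t n, uint64_t max_cycles)
{
    return n > 0 && median_cycles(samples, n) > max_cycles;
}
```

With five samples where only one is an outlier, the median stays near the normal value and nothing is reported; under single-stepping nearly every sample is inflated and the median crosses the threshold.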

Hardware Breakpoint Detection

The x86-64 debug registers (DR0-DR3 for breakpoint addresses, DR6 for status, DR7 for control) are accessible in kernel mode. Reading them allows detection of hardware breakpoints set by a debugger:

```c
BOOLEAN HasHardwareBreakpoints(void) {
    ULONG_PTR dr7 = __readdr(7); // Read DR7 (debug control register)

    // Check Local Enable bits (L0, L1, L2, L3) for each breakpoint
    // Bits 0, 2, 4, 6 of DR7 are the local enable bits for BP 0-3
    if (dr7 & 0x55) { // 0x55 = 01010101b - all four local enable bits
        return TRUE;
    }

    return FALSE;
}
```

Anti-cheats scan all threads’ saved debug register state (accessible via a CONTEXT structure obtained with PsGetContextThread, or directly from the trap frame referenced by KTHREAD::TrapFrame) for active hardware breakpoints not set by the anti-cheat itself.
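When scanning a saved DR7 value it helps to decode more than the local enable bits. The sketch below follows the architectural DR7 layout: Ln/Gn enable pairs in bits 0-7, then a 2-bit R/W field and a 2-bit LEN field per slot starting at bit 16. The type `HwBreakpoint` and the functions `decode_dr7_slot` and `enabled_slots` are names chosen for this example.

```c
#include <stdbool.h>
#include <stdint.h>

/* Decoded view of one of the four hardware breakpoint slots. */
typedef struct {
    bool     enabled;    /* local (Ln) or global (Gn) enable bit is set */
    unsigned rw;         /* 0 = execute, 1 = write, 2 = I/O, 3 = read/write */
    unsigned len_bytes;  /* watched length in bytes */
} HwBreakpoint;

/* Decodes slot 0..3 from a raw DR7 value. */
HwBreakpoint decode_dr7_slot(uint64_t dr7, unsigned slot)
{
    /* LEN encoding: 00 = 1 byte, 01 = 2 bytes, 10 = 8 bytes, 11 = 4 bytes */
    static const unsigned len_table[4] = { 1, 2, 8, 4 };
    unsigned enable  = (unsigned)(dr7 >> (slot * 2)) & 0x3u;       /* Ln | Gn */
    unsigned control = (unsigned)(dr7 >> (16 + slot * 4)) & 0xFu;  /* RWn, LENn */
    HwBreakpoint bp;

    bp.enabled   = enable != 0;
    bp.rw        = control & 0x3u;
    bp.len_bytes = len_table[(control >> 2) & 0x3u];
    return bp;
}

/* Returns a 4-bit mask with bit n set when breakpoint slot n is enabled. */
unsigned enabled_slots(uint64_t dr7)
{
    unsigned mask = 0;
    for (unsigned slot = 0; slot < 4; slot++) {
        if (decode_dr7_slot(dr7, slot).enabled)
            mask |= 1u << slot;
    }
    return mask;
}
```

Checking both enable bits matters: a DR7 value of 0x2 has only G0 set, so a test against the local-enable mask 0x55 alone would miss it, while `enabled_slots` reports slot 0 as active.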

Hypervisor-Based Debugger Detection

Type-1 hypervisor-based debuggers (like a custom hypervisor running a Windows VM for isolated debugging) are significantly harder to detect. The primary detection vectors are:

CPUID checks: The hypervisor present bit (bit 31 of ECX when CPUID leaf 1 is executed) indicates a hypervisor is present. The hypervisor vendor can be queried with CPUID leaf 0x40000000. VMware returns “VMwareVMware”, VirtualBox returns “VBoxVBoxVBox”. An unknown vendor string is suspicious.

MSR timing: Executing RDMSR in a VM introduces additional overhead compared to native execution. Anti-cheats time MSR reads and flag anomalies.

CPUID instruction timing: CPUID is not a privileged instruction, but under hardware virtualization it traps to the hypervisor (on Intel VT-x it unconditionally causes a VM exit), so every execution must be handled by the hypervisor, introducing measurable latency.
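For the CPUID checks described above, the decoding step can be sketched without executing CPUID at all, by working on register values that have already been captured. The function names are hypothetical, and the vendor list is illustrative only: it contains the two strings mentioned above plus "Microsoft Hv", which Hyper-V reports.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* CPUID leaf 1: bit 31 of ECX is the hypervisor-present bit. */
bool hypervisor_bit_set(uint32_t leaf1_ecx)
{
    return (leaf1_ecx >> 31) != 0;
}

/* CPUID leaf 0x40000000: the 12-byte vendor string is returned in
   EBX, ECX, EDX (in that order), least significant byte first. */
void hv_vendor_from_regs(uint32_t ebx, uint32_t ecx, uint32_t edx, char out[13])
{
    const uint32_t regs[3] = { ebx, ecx, edx };
    for (size_t r = 0; r < 3; r++) {
        for (size_t b = 0; b < 4; b++)
            out[r * 4 + b] = (char)((regs[r] >> (8 * b)) & 0xFFu);
    }
    out[12] = '\0';
}

/* Illustrative allow-list; a real one would be longer and policy-driven. */
bool is_known_vendor(const char *vendor)
{
    static const char *const known[] = {
        "VMwareVMware", "VBoxVBoxVBox", "Microsoft Hv"
    };
    for (size_t i = 0; i < sizeof known / sizeof known[0]; i++) {
        if (strcmp(vendor, known[i]) == 0)
            return true;
    }
    return false;
}
```

A set hypervisor bit combined with a vendor string that is not on the list is the "unknown vendor" case. Keep in mind that a custom hypervisor can clear the bit or report any string it likes, which is why the timing checks exist alongside this one.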

9. DMA Cheats and Detection

DMA cheats represent the current frontier of the anti-cheat arms race, and they are genuinely hard to address with software alone.

What DMA Cheats Are