Why Grafeo?

High Performance

In LDBC Social Network Benchmark testing, Grafeo was the fastest graph database measured, both embedded and as a server, and had a lower memory footprint than the other in-memory databases tested. It is built in Rust, with vectorized execution, adaptive chunking and SIMD-optimized operations.

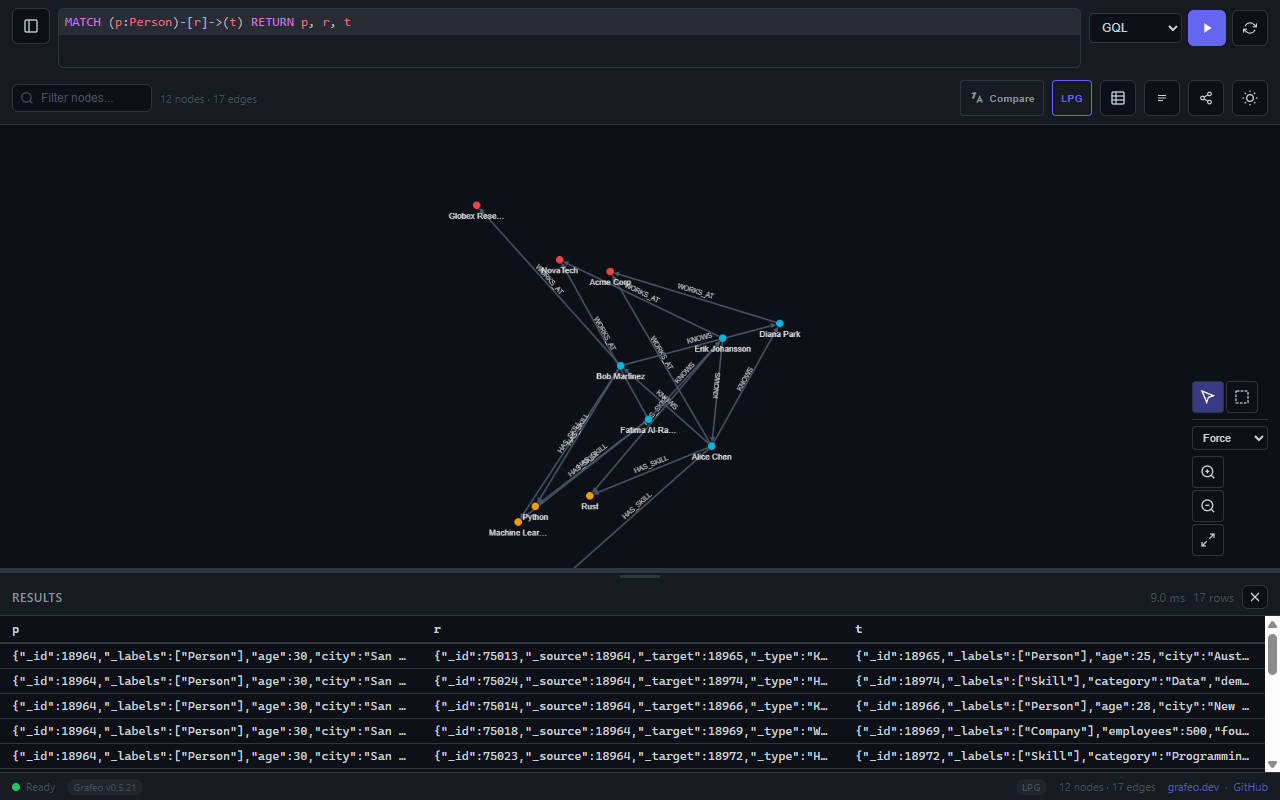

Multi-Language Queries

GQL, Cypher, Gremlin, GraphQL, SPARQL and SQL/PGQ. Choose the query language that fits the project and expertise level.

LPG & RDF Support

Supports both Labeled Property Graphs (LPG) and RDF triples. Choose the model that fits the domain.

Vector Search

HNSW-based similarity search with quantization (Scalar, Binary, Product). Combine graph traversal with semantic similarity.
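Scalar quantization, the simplest of the three schemes listed, stores each float component as a small integer code, so an 8-bit code takes a quarter of the space of a 32-bit float. The sketch below is a generic illustration of 8-bit scalar quantization with per-dimension min/max bounds. That bounding scheme and the helper names (`fit_bounds`, `quantize`, `dequantize`) are assumptions for illustration, not Grafeo's API or its actual encoding.

```python
from typing import List, Tuple

LEVELS = 255  # hypothetical 8-bit code range: 0..255


def fit_bounds(vectors: List[List[float]]) -> Tuple[List[float], List[float]]:
    """Per-dimension minimum and maximum across the training vectors."""
    dims = range(len(vectors[0]))
    lo = [min(v[d] for v in vectors) for d in dims]
    hi = [max(v[d] for v in vectors) for d in dims]
    return lo, hi


def quantize(vec: List[float], lo: List[float], hi: List[float]) -> List[int]:
    """Map each float component to an integer code in [0, LEVELS]."""
    codes = []
    for x, a, b in zip(vec, lo, hi):
        span = b - a
        if span == 0:
            codes.append(0)  # constant dimension: nothing to encode
            continue
        t = (min(max(x, a), b) - a) / span  # clamp into [a, b], then scale to [0, 1]
        codes.append(round(t * LEVELS))
    return codes


def dequantize(codes: List[int], lo: List[float], hi: List[float]) -> List[float]:
    """Approximate reconstruction; for inputs inside [lo, hi] the error per
    component is at most span / (2 * LEVELS)."""
    return [a + (c / LEVELS) * (b - a) for c, a, b in zip(codes, lo, hi)]
```

Similarity can then be computed on the codes or on the reconstructed vectors; binary and product quantization push the same trade-off further, giving up more accuracy for smaller vectors.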

Embedded or Standalone

Embed directly into applications with zero external dependencies, or run as a standalone server with a REST API and a web UI. From edge devices to production clusters.

Rust Core

The core database engine is written in Rust with no required C dependencies. Only optional features use C libraries: the jemalloc/mimalloc allocators (for performance) and TLS. Memory-safe by design with fearless concurrency.

ACID Transactions

Full ACID compliance with MVCC-based snapshot isolation. Reliable transactions for production workloads.
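To make "snapshot isolation" concrete: each transaction reads from the set of versions committed before it started, while its own writes stay private until commit. The toy model below assumes a single global commit counter and first-committer-wins conflict detection, which are common textbook choices; `MVCCStore` and `Txn` are hypothetical names, and this is not a description of Grafeo's internals.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass
class Version:
    value: Any
    commit_ts: int  # timestamp of the transaction that created this version


class MVCCStore:
    """Toy multi-version store: every key keeps its committed versions in timestamp order."""

    def __init__(self) -> None:
        self.versions: Dict[str, List[Version]] = {}
        self.clock = 0  # timestamp of the most recent commit

    def begin(self) -> "Txn":
        # A new transaction's snapshot is everything committed so far.
        return Txn(self, snapshot_ts=self.clock)


class Txn:
    def __init__(self, store: MVCCStore, snapshot_ts: int) -> None:
        self.store = store
        self.snapshot_ts = snapshot_ts
        self.writes: Dict[str, Any] = {}  # buffered until commit

    def read(self, key: str) -> Optional[Any]:
        if key in self.writes:  # a transaction sees its own uncommitted writes
            return self.writes[key]
        # Otherwise return the newest version committed at or before the snapshot.
        for version in reversed(self.store.versions.get(key, [])):
            if version.commit_ts <= self.snapshot_ts:
                return version.value
        return None

    def write(self, key: str, value: Any) -> None:
        self.writes[key] = value

    def commit(self) -> None:
        # First-committer-wins: abort if another transaction committed a newer
        # version of any key we wrote after our snapshot was taken.
        for key in self.writes:
            history = self.store.versions.get(key, [])
            if history and history[-1].commit_ts > self.snapshot_ts:
                raise RuntimeError(f"write-write conflict on {key!r}")
        self.store.clock += 1
        for key, value in self.writes.items():
            self.store.versions.setdefault(key, []).append(Version(value, self.store.clock))
```

A transaction that began before a concurrent commit keeps seeing the old value, and if it then tries to overwrite the same key, its commit is rejected instead of silently losing the other update.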

Multi-Language Bindings

Python (PyO3), Node.js/TypeScript (napi-rs), Go (CGO), C (FFI), C# (.NET 8 P/Invoke), Dart (dart:ffi) and WebAssembly (wasm-bindgen). Use Grafeo from your language of choice.

Ecosystem

AI integrations (LangChain, LlamaIndex, MCP), interactive notebook widgets, browser-based graphs via WebAssembly, a standalone server with a web UI, and benchmarking tools.

Quick Start

Python

```shell
uv add grafeo
```

```python
import grafeo

# Create an in-memory database
db = grafeo.GrafeoDB()

# Create nodes and edges
db.execute("""
    INSERT (:Person {name: 'Alix', age: 30})
    INSERT (:Person {name: 'Gus', age: 25})
""")

db.execute("""
    MATCH (a:Person {name: 'Alix'}), (b:Person {name: 'Gus'})
    INSERT (a)-[:KNOWS {since: 2024}]->(b)
""")

# Query the graph
result = db.execute("""
    MATCH (p:Person)-[:KNOWS]->(friend)
    RETURN p.name, friend.name
""")

for row in result:
    print(f"{row['p.name']} knows {row['friend.name']}")
```

Rust

```shell
cargo add grafeo
```

```rust
use grafeo::GrafeoDB;

fn main() -> Result<(), grafeo_common::utils::error::Error> {
    // Create an in-memory database
    let db = GrafeoDB::new_in_memory();

    // Create a session and execute queries
    let mut session = db.session();

    session.execute(r#"
        INSERT (:Person {name: 'Alix', age: 30})
        INSERT (:Person {name: 'Gus', age: 25})
    "#)?;

    session.execute(r#"
        MATCH (a:Person {name: 'Alix'}), (b:Person {name: 'Gus'})
        INSERT (a)-[:KNOWS {since: 2024}]->(b)
    "#)?;

    // Query the graph
    let result = session.execute(r#"
        MATCH (p:Person)-[:KNOWS]->(friend)
        RETURN p.name, friend.name
    "#)?;

    for row in result.rows {
        println!("{:?}", row);
    }

    Ok(())
}
```

Features

Dual Data Model Support

Grafeo supports both major graph data models with optimized storage for each:

LPG (Labeled Property Graph)

- Nodes with labels and properties

- Edges with types and properties

- Properties supporting rich data types

- Ideal for social networks, knowledge graphs and application data

RDF (Resource Description Framework)

- Triples: subject-predicate-object statements

- SPO/POS/OSP indexes for efficient querying

- W3C standard compliance

- Ideal for semantic web, linked data and ontologies
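The point of keeping SPO, POS and OSP indexes is that any triple pattern with at least one bound position becomes a prefix lookup in one of the three permutations. The sketch below illustrates that general technique with nested hash maps; a real store would typically use sorted structures, and the `TripleIndex` class is hypothetical, not Grafeo's storage layout.

```python
from collections import defaultdict
from typing import Iterator, Optional, Tuple

Triple = Tuple[str, str, str]


def _nested():
    return defaultdict(set)


class TripleIndex:
    """Keeps every triple in three permutations so any bound position is a prefix lookup."""

    def __init__(self) -> None:
        self.spo = defaultdict(_nested)  # subject -> predicate -> {objects}
        self.pos = defaultdict(_nested)  # predicate -> object -> {subjects}
        self.osp = defaultdict(_nested)  # object -> subject -> {predicates}

    def add(self, s: str, p: str, o: str) -> None:
        self.spo[s][p].add(o)
        self.pos[p][o].add(s)
        self.osp[o][s].add(p)

    def match(self, s: Optional[str] = None, p: Optional[str] = None,
              o: Optional[str] = None) -> Iterator[Triple]:
        """Yield triples matching the pattern; None is a wildcard."""
        if s is not None and p is not None:      # (s, p, ?) or (s, p, o): SPO
            for obj in self.spo.get(s, {}).get(p, ()):
                if o is None or obj == o:
                    yield (s, p, obj)
        elif s is not None and o is not None:    # (s, ?, o): OSP
            for pred in self.osp.get(o, {}).get(s, ()):
                yield (s, pred, o)
        elif s is not None:                      # (s, ?, ?): SPO
            for pred, objs in self.spo.get(s, {}).items():
                for obj in objs:
                    yield (s, pred, obj)
        elif p is not None and o is not None:    # (?, p, o): POS
            for subj in self.pos.get(p, {}).get(o, ()):
                yield (subj, p, o)
        elif p is not None:                      # (?, p, ?): POS
            for obj, subjs in self.pos.get(p, {}).items():
                for subj in subjs:
                    yield (subj, p, obj)
        elif o is not None:                      # (?, ?, o): OSP
            for subj, preds in self.osp.get(o, {}).items():
                for pred in preds:
                    yield (subj, pred, o)
        else:                                    # (?, ?, ?): full scan of SPO
            for subj, by_pred in self.spo.items():
                for pred, objs in by_pred.items():
                    for obj in objs:
                        yield (subj, pred, obj)
```

The cost is storing each triple three times; in exchange, no pattern with a bound position requires scanning the whole dataset.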

Query Languages

Choose the query language that fits the project:

| Language | Data Model | Style |
|---|---|---|
| GQL (default) | LPG | ISO standard, declarative pattern matching |
| Cypher | LPG | Neo4j-compatible, ASCII-art patterns |
| Gremlin | LPG | Apache TinkerPop, traversal-based |
| GraphQL | LPG, RDF | Schema-driven, familiar to web developers |
| SPARQL | RDF | W3C standard for RDF queries |
| SQL/PGQ | LPG | SQL:2023 GRAPH_TABLE for SQL-native graph queries |

GQL

```
MATCH (me:Person {name: 'Alix'})-[:KNOWS]->(friend)-[:KNOWS]->(fof)
WHERE fof <> me
RETURN DISTINCT fof.name
```

Cypher

```cypher
MATCH (me:Person {name: 'Alix'})-[:KNOWS]->(friend)-[:KNOWS]->(fof)
WHERE fof <> me
RETURN DISTINCT fof.name
```

Gremlin

```
// Label the start vertex 'me' so where(neq('me')) can exclude it
g.V().has('name', 'Alix').as('me').
  out('KNOWS').out('KNOWS').
  where(neq('me')).
  values('name').dedup()
```

GraphQL

```graphql
{
  Person(name: "Alix") {
    friends { friends { name } }
  }
}
```

SPARQL

```sparql
PREFIX foaf: <http://xmlns.com/foaf/0.1/>

SELECT DISTINCT ?fofName WHERE {
  ?me foaf:name "Alix" .
  ?me foaf:knows ?friend .
  ?friend foaf:knows ?fof .
  ?fof foaf:name ?fofName .
  FILTER(?fof != ?me)
}
```

Architecture Highlights

- Push-based execution engine with morsel-driven parallelism

- Columnar storage with type-specific compression

- Cost-based query optimizer with cardinality estimation

- MVCC transactions with snapshot isolation

- Zone maps (per-chunk min/max statistics) for skipping data that cannot match a filter
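As an illustration of zone-map skipping: if a chunk's recorded maximum is below a filter's lower bound, or its minimum is above the upper bound, the chunk can be skipped without reading any of its rows. The sketch assumes fixed-size chunks over an integer column; `Zone`, `build_zones` and `scan_between` are hypothetical names, not Grafeo's storage API.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Zone:
    start: int        # row offset of the chunk's first value
    values: List[int]
    min_val: int
    max_val: int


def build_zones(column: List[int], chunk_size: int) -> List[Zone]:
    """Split a column into fixed-size chunks and record each chunk's min and max."""
    zones = []
    for start in range(0, len(column), chunk_size):
        chunk = column[start:start + chunk_size]
        zones.append(Zone(start, chunk, min(chunk), max(chunk)))
    return zones


def scan_between(zones: List[Zone], lo: int, hi: int) -> Tuple[List[int], int]:
    """Return offsets of rows with lo <= value <= hi, and the number of chunks skipped."""
    hits: List[int] = []
    skipped = 0
    for zone in zones:
        if zone.max_val < lo or zone.min_val > hi:
            skipped += 1  # the min/max range proves no row in this chunk can match
            continue
        hits.extend(zone.start + i for i, v in enumerate(zone.values) if lo <= v <= hi)
    return hits, skipped
```

For an `age` column split into chunks of four, a filter `30 <= age <= 35` only has to read the chunk whose range overlaps it. Skipping works best when values are clustered, for example sorted or inserted in roughly increasing order.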

Installation

Python

```shell
uv add grafeo
```

Node.js

```shell
npm install @grafeo-db/js
```

Go

```shell
go get github.com/GrafeoDB/grafeo/crates/bindings/go
```

Rust

```shell
cargo add grafeo
```

C#

```shell
dotnet add package GrafeoDB
```

Dart

```yaml
# pubspec.yaml
dependencies:
  grafeo: ^0.5.21
```

WASM

```shell
npm install @grafeo-db/wasm
```

License

Grafeo is licensed under the Apache-2.0 License.