[ GENEVA, SWITZERLAND — March 28, 2026 ] — CERN is using extremely small, custom artificial intelligence models physically burned into silicon chips to perform real-time filtering of the enormous data generated by the Large Hadron Collider (LHC).

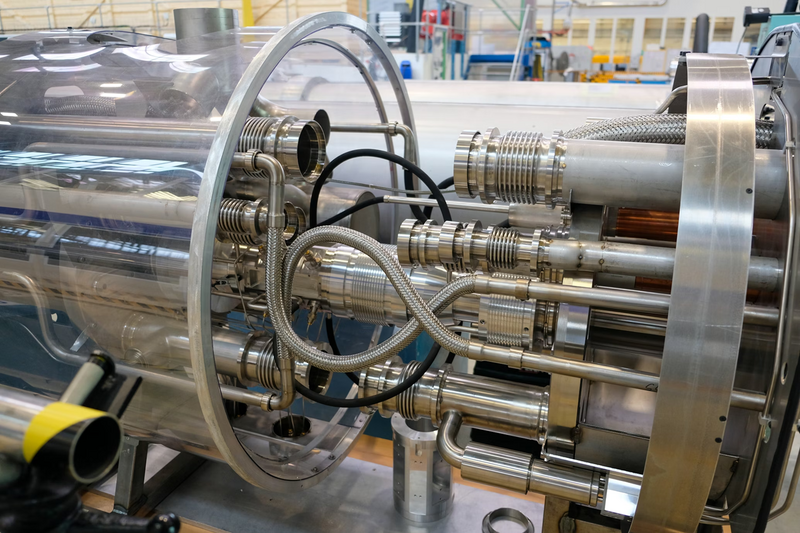

LHC tunnel and detectors

OVERVIEW

Proton collision in LHC detector

The Large Hadron Collider (LHC) generates an extraordinary volume of raw data — approximately 40,000 exabytes per year, equivalent to roughly one quarter of the entire current internet. During peak operation, the data stream can reach hundreds of terabytes per second, far exceeding the capacity of any feasible storage or conventional computing system.

Because it is physically impossible to store or process the full dataset, CERN must make split-second decisions at the detector level: which collision events contain potentially groundbreaking scientific value, and which should be discarded forever. This real-time selection process is one of the most demanding computational challenges in modern science.

To meet these extreme requirements, CERN has deliberately moved away from conventional GPU or TPU-based artificial intelligence architectures. Instead, the laboratory develops highly optimized, ultra-compact AI models that are compiled and physically implemented directly into custom silicon — primarily field-programmable gate arrays (FPGAs) and application-specific integrated circuits (ASICs). These hardware-embedded models enable ultra-low-latency inference at the very edge of the detector system, where decisions must be made in microseconds or even nanoseconds.

THE DATA CHALLENGE

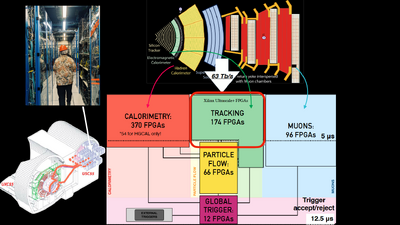

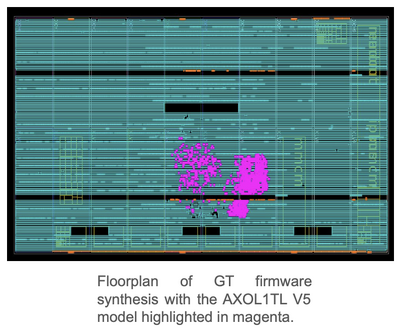

AXOL1TL algorithm floorplan on FPGA/ASIC

Inside the 27-kilometre ring of the Large Hadron Collider, proton bunches travel at velocities approaching the speed of light and cross paths roughly every 25 nanoseconds. Although billions of protons pass through one another during each crossing, actual hard collisions between protons remain relatively rare events.

When a collision does occur, the detectors surrounding the interaction point capture several megabytes of raw data from the resulting particle shower. This creates an overwhelming data stream: the LHC can generate up to hundreds of terabytes per second at peak luminosity. Storing or processing the full volume is physically impossible with current technology.

As a result, only about 0.02 % of all collision events are ultimately retained for further analysis. The first and most critical filtering stage, known as the Level-1 Trigger, is responsible for making these split-second decisions. It consists of approximately 1,000 field-programmable gate arrays (FPGAs) that evaluate incoming data in less than 50 nanoseconds. A highly specialized algorithm called AXOL1TL runs directly on these chips, analysing detector signals in real time and determining which events are scientifically promising enough to be preserved. All other data is discarded immediately and permanently.

AI APPROACH AND TECHNICAL STACK

High Level Trigger computing farm at CERN

CERN’s artificial intelligence models are deliberately designed to be extremely small and highly optimised for the unique constraints of the LHC environment. Unlike the large-scale language models and general-purpose AI systems commonly used in industry, these models are tailored specifically for ultra-low-latency, real-time inference at the detector level, where decisions must be made in nanoseconds.

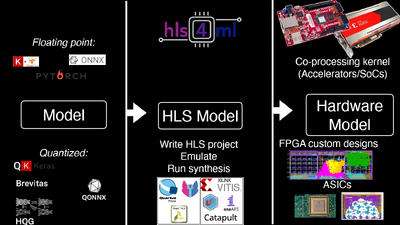

The models are compiled using the open-source tool **HLS4ML**, which translates machine-learning models written in frameworks such as PyTorch or TensorFlow into synthesizable C++ code. This code can then be deployed directly onto field-programmable gate arrays (FPGAs), systems-on-chip (SoCs), or custom application-specific integrated circuits (ASICs). The resulting hardware implementations achieve the extreme speed required while consuming significantly less power and silicon area than conventional GPU- or TPU-based solutions.

A distinctive feature of CERN’s approach is that a substantial portion of the available chip resources is not allocated to the neural network layers themselves. Instead, these resources are used to implement extensive precomputed lookup tables. These tables store the results of common input patterns in advance, allowing the hardware to deliver near-instantaneous outputs for the vast majority of typical detector signals without performing full floating-point calculations. This hardware-first design philosophy is what enables the system to operate at the required nanosecond-scale latency.

The second filtering stage, known as the High-Level Trigger, runs on a large surface-level computing farm consisting of 25,600 CPUs and 400 GPUs. Even after the aggressive Level-1 Trigger has reduced the data volume, this farm must still process terabytes of data per second before further reducing it to approximately one petabyte of scientifically valuable data per day.

FUTURE PLANS

The current Large Hadron Collider is scheduled for a major upgrade known as the High-Luminosity LHC (HL-LHC), which is expected to begin operations in 2031. This upgrade will dramatically increase the collider’s luminosity, producing roughly ten times more data per collision and generating significantly larger event sizes than the present LHC.

CERN is already actively preparing its AI hardware pipeline to handle this anticipated surge in data volume. The laboratory is developing next-generation versions of its ultra-compact AI models, further optimizing FPGA and ASIC implementations, and enhancing the entire real-time triggering system to maintain the extreme low-latency performance required for effective event selection at much higher data rates.

This forward-looking work is considered essential to ensure that the High-Luminosity LHC can continue delivering groundbreaking scientific discoveries in particle physics over the coming decades, even as the volume of raw data grows by an order of magnitude.

IMPLICATIONS

While the wider artificial intelligence industry continues to pursue ever-larger language models that demand massive computational resources and energy, CERN is deliberately moving in the opposite direction. The laboratory is developing some of the smallest, fastest, and most efficient AI models currently in existence, optimised specifically for direct hardware implementation in FPGAs and ASICs.

This work represents a compelling real-world demonstration of “tiny AI” — highly specialised, minimal-footprint neural networks — deployed in one of the most extreme scientific environments on the planet. In the LHC’s trigger systems, where decisions must be made in nanoseconds on enormous data streams, these compact models achieve performance levels that would be unattainable with conventional general-purpose AI accelerators.

Beyond particle physics, CERN’s approach may influence the future design of high-performance computing systems in other domains that require real-time, ultra-low-latency inference under extreme data rates. Applications in autonomous systems, high-frequency trading, medical imaging, and aerospace could benefit from similar hardware-embedded, resource-efficient AI techniques. As global demand for both computing power and energy efficiency continues to grow, the CERN model offers a practical alternative to the current trend of scaling up model size, highlighting the value of extreme specialisation and hardware-level optimisation.

PRIMARY SOURCES

| Source | Description | Verification Link |

|---|---|---|

| CERN Twiki | AXOL1TL V5 architecture and deployment details (VICReg-trained feature extractor + VAE for anomaly detection) | https://twiki.cern.ch/twiki/bin/view/CMSPublic/AXOL1TL2025 |

| arXiv Paper | Real-time Anomaly Detection at the L1 Trigger of CMS Experiment (introduces AXOL1TL and CICADA algorithms) | https://arxiv.org/abs/2411.19506 (or html version: https://arxiv.org/html/2411.19506v1) |

| CERN Official | General LHC data processing and trigger systems | https://home.cern/science/computing |

| Thea Aarrestad Talks | Overview of tiny AI / ML for LHC triggers (highly recommended for context) | |

| https://www.youtube.com/watch?v=T8HT_XBGQUI (Big Data and AI at the CERN LHC) | ||

| https://www.youtube.com/watch?v=8IZwhbsjhvE (From Zettabytes to a Few Precious Events: Nanosecond AI at the LHC) |

|

NEWS FILED BY: John