Key takeaways

- A compromised third‑party OAuth application enabled long‑lived, password‑independent access to Vercel’s internal systems, demonstrating how OAuth trust relationships can bypass traditional perimeter defenses.

- The impact was amplified by Vercel’s environment variable model, where credentials not explicitly marked as sensitive were readable with internal access. For any team whose access was compromised, non-sensitive environment variables were exposed without additional controls.

- A publicly reported leaked‑credential alert predating disclosure highlights detection‑to‑notification latency as a critical risk factor in platform breaches.

- This incident fits a broader 2026 convergence pattern (LiteLLM, Axios) in which attackers consistently target developer‑stored credentials across CI/CD, package registries, OAuth integrations, and deployment platforms.

- Effective defense requires architectural change: treating OAuth apps as third‑party vendors, eliminating long‑lived platform secrets, and designing for the assumption of provider‑side compromise.

Developing situation — last updated Tuesday, April 21, 2026

This entry was updated on April 21 to correct the incident timeline and scope characterization based on post-publication reporting from Context.ai's security bulletin.

Key corrections: the initial compromise occurred in February 2026 (not June 2024), the initial access vector was Lumma Stealer malware (not an unknown mechanism), the dwell time was approximately two months (not 22 months), and the impact was scoped to teams whose access was directly compromised — not a blanket platform-wide exposure of customer secrets. Environment variables not explicitly marked as "sensitive" were readable within compromised team scopes, but this required per-team access, not a single point of platform-wide credential exposure. The original language overstated the blast radius; we regret the error.

This analysis reflects what is publicly known about the Vercel OAuth supply chain compromise as of the date above. The incident remains under active investigation by Vercel and affected parties, and key details — including the full scope of downstream impact and attribution — may evolve as additional information becomes available. Where gaps exist, we have noted them explicitly rather than speculating. Defensive recommendations and detection guidance are based on the confirmed attack chain and established supply chain compromise patterns; organizations should act on these now rather than waiting for a complete picture. We will update this analysis as new technical details, vendor disclosures, or third-party research emerge.

In an intrusion that began with a Lumma Stealer malware infection at Context.ai in approximately February 2026 and was disclosed in April 2026, attackers leveraged a compromise of Context.ai’s Google Workspace OAuth tokens to gain a foothold into Vercel’s internal systems, exposing environment variables for an undisclosed but reportedly limited subset of customer projects. Vercel is a cloud deployment and hosting platform widely used for front‑end and serverless applications.

On April 19, 2026, Vercel published its security bulletin and CEO Guillermo Rauch posted a detailed thread on X confirming the attack chain and naming Context.ai as the compromised third party.

The incident is significant because it demonstrates how OAuth supply-chain trust relationships create lateral movement paths that bypass traditional perimeter defenses, and because Vercel's environment variable sensitivity model left credentials not marked as sensitive readable to an attacker with internal access.

This analysis examines the attack chain, evaluates the platform design decisions that amplified blast radius, contextualizes the breach against a rising wave of supply chain compromises (LiteLLM, Axios, Codecov, CircleCI), and provides actionable detection and hardening guidance for organizations operating on Vercel and similar PaaS platforms.

What this incident reveals

What makes this incident notable is not its sophistication (the techniques used are well established) but three broader implications:

- OAuth amplification. A single OAuth trust relationship cascaded from a compromised vendor into Vercel’s internal systems, exposing environment variables for a limited subset of customer projects — customers who had no direct relationship with the compromised vendor.

- AI-accelerated tradecraft. The CEO publicly attributed the attacker's unusual velocity to AI augmentation — an early, high-profile data point in the 2026 discourse around AI-accelerated adversary tradecraft.

- Detection-to-disclosure latency. At least one public customer report suggests credentials were being flagged as leaked in the wild nine days before Vercel's disclosure — raising questions about detection-to-disclosure latency in platform breaches.

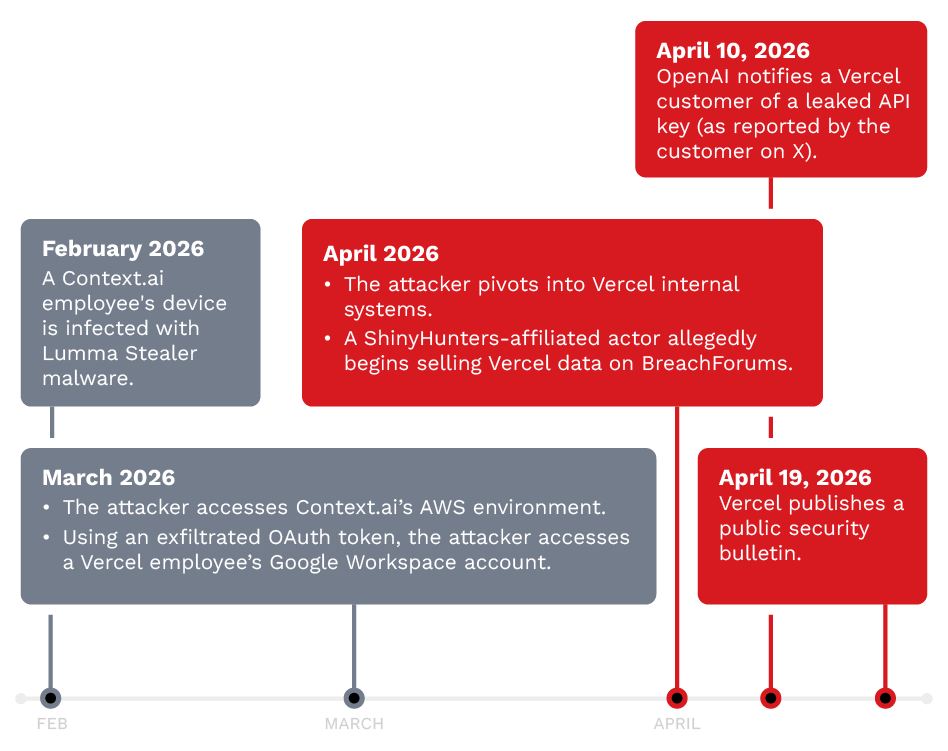

Incident timeline

Based on currently available reporting, the attack spanned approximately two months from the initial Lumma Stealer infection at Context.ai to Vercel’s public disclosure. While the dwell time is shorter than initially assessed, the attack demonstrates how OAuth-based intrusions leverage legitimate application permissions that rarely trigger standard detection controls.

Figure 1. Incident timeline illustrating the attack progression from initial Lumma Stealer infection to public disclosure.

| Date | Event | Verification status |
|---|---|---|
| ~February 2026 | Context.ai employee infected with Lumma Stealer malware; corporate credentials, session tokens, and OAuth tokens exfiltrated | CONFIRMED (Hudson Rock, CyberScoop, Context.ai bulletin) |
| ~March 2026 | Attacker accesses Context.ai’s AWS environment; exfiltrates OAuth tokens for consumer users including a Vercel employee’s Google Workspace token | CONFIRMED (Context.ai bulletin) |
| March 2026 | Attacker uses exfiltrated OAuth token to access Vercel employee’s Google Workspace account | CONFIRMED (Vercel bulletin, Context.ai bulletin, Rauch statement) |
| March-April 2026 | Attacker pivots into Vercel internal systems; customer environment variable enumeration begins | CONFIRMED (Vercel bulletin) |
| ~April 2026 | ShinyHunters-affiliated actor allegedly begins selling Vercel data on BreachForums | UNVERIFIED (threat actor claims only) |
| April 10, 2026 | OpenAI notifies a Vercel customer of a leaked API key (per customer account on X) | REPORTED (single source) |
| April 19, 2026 | Vercel publishes security bulletin; Rauch posts detailed thread on X naming Context.ai | CONFIRMED |
| April 19, 2026 onward | Customer notification, credential rotation guidance, and dashboard changes rolled out | CONFIRMED |

Table 1. Summary of key events and their confirmation status

A key observation from the timeline is that even with a relatively short dwell time of approximately two months, the attacker was able to progress from a Lumma Stealer infection at a third-party vendor to customer environment variable exfiltration at Vercel. This speed of lateral movement underscores the difficulty of detecting OAuth-based pivots that use legitimate application permissions.

It is worth noting that Google Workspace OAuth audit logs are retained six months by default on many subscription tiers. In this case, the approximately two-month dwell time means logs should still be within the retention window, but a longer-running compromise of this type could easily outlast default retention — a factor investigators should consider when setting retention policies.

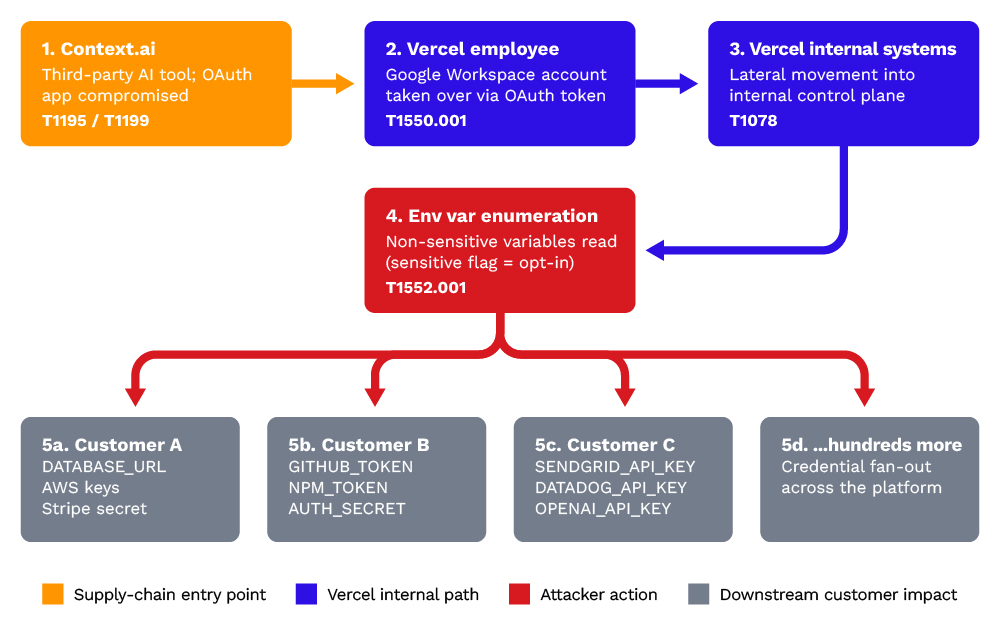

Attack chain

The attack exploited a trust chain that is endemic to modern SaaS environments: third-party OAuth applications granted access to corporate Google Workspace accounts.

Figure 2. Vercel breach attack chain

Stage 1: Third-Party OAuth compromise (T1199)

Context.ai, a company providing AI analytics tooling, had a Google Workspace OAuth application authorized by Vercel employees. The attacker compromised this OAuth application — the compromise has since been traced to a Lumma Stealer malware infection of a Context.ai employee in approximately February 2026, reportedly after the employee downloaded Roblox game exploit scripts (per Hudson Rock and CyberScoop). The stolen credentials enabled the attacker to access Context.ai’s AWS environment and exfiltrate OAuth tokens for consumer users of Context AI Office Suite, a self-serve consumer product launched in June 2025.

In his post on X, Rauch stated that Vercel has “reached out to Context to assist in understanding the full scale of the incident,” phrasing that suggests Context may not have detected the compromise itself. Context.ai has since published its own security bulletin confirming it detected and stopped the unauthorized access to its AWS environment in March 2026, though the OAuth token exfiltration was not identified until Vercel’s investigation.

This is the critical initial access vector. OAuth applications, once authorized, maintain persistent access tokens that:

- Do not require the user's password

- Survive password rotations

- Often have broad scopes (email, drive, calendar access)

- Are rarely audited after initial authorization
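The properties above suggest that a periodic review of authorized grants is worthwhile. The following is a minimal sketch of such a review, assuming grant records have already been exported as dictionaries with `userKey`, `displayText`, and `scopes` fields. The `BROAD_SCOPES` list, the function name, and the sample records are illustrative assumptions, not part of any vendor tooling.

```python
from typing import Iterable

# Scopes treated as "broad" for this sketch. The selection is an assumption
# and should be tuned to your own risk model.
BROAD_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar",
}


def flag_broad_grants(tokens: Iterable[dict]) -> list[dict]:
    """Return grants that hold at least one broad scope.

    Each token dict is assumed to carry `userKey`, `displayText`, and
    `scopes`; missing fields are tolerated.
    """
    flagged = []
    for token in tokens:
        broad = sorted(set(token.get("scopes", [])) & BROAD_SCOPES)
        if broad:
            flagged.append(
                {
                    "user": token.get("userKey"),
                    "app": token.get("displayText"),
                    "broad_scopes": broad,
                }
            )
    return flagged


# Hypothetical sample data, for illustration only.
SAMPLE_TOKENS = [
    {
        "userKey": "alice@example.com",
        "displayText": "Example Analytics",
        "scopes": ["https://mail.google.com/", "openid"],
    },
    {
        "userKey": "bob@example.com",
        "displayText": "Example Notes",
        "scopes": ["openid", "email"],
    },
]

if __name__ == "__main__":
    for grant in flag_broad_grants(SAMPLE_TOKENS):
        print(grant)
```

Running a check like this on a schedule, rather than only at authorization time, addresses the last bullet directly: grants that were reasonable when approved can be re-evaluated as scopes or vendors change.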

Stage 2: Workspace account takeover (T1550.001)

Using the compromised OAuth application's access, the attacker pivoted to a Vercel employee's Google Workspace account. This provided email access (potential for further credential harvesting), internal document access via Google Drive, calendar visibility into meetings and linked resources, and potential access to other OAuth-connected services.

Stage 3: Internal system access (T1078)

From the compromised Workspace account, the attacker pivoted into Vercel's internal systems. Rauch described the escalation as “a series of maneuvers that escalated from our colleague's compromised Vercel Google Workspace account.” The specific lateral movement technique — whether via SSO federation, harvested credentials from email/drive, or another OAuth-connected internal tool — has not been disclosed.

Stage 4: Environment variable enumeration (T1552.001)

The attacker accessed Vercel's internal systems with sufficient privileges to enumerate customer project environment variables. According to Rauch's public statement, Vercel stores all customer environment variables fully encrypted at rest, but the platform offers a capability to designate variables as “non-sensitive.” Through enumeration of these non-sensitive variables, the attacker obtained further access.

Stage 5: Potential downstream exploitation (T1078.004)

Exposed environment variables commonly contain credentials for downstream services. A single public customer report by Andrey Zagoruiko (April 19, 2026) described receiving an OpenAI leaked-key notification on April 10 for an API key that, according to the report, existed only in Vercel, suggesting that at least one exposed credential was detected in the wild prior to Vercel’s disclosure.

This report introduces a potential detection-to-disclosure anomaly, which warrants closer examination and is explored in the following section.

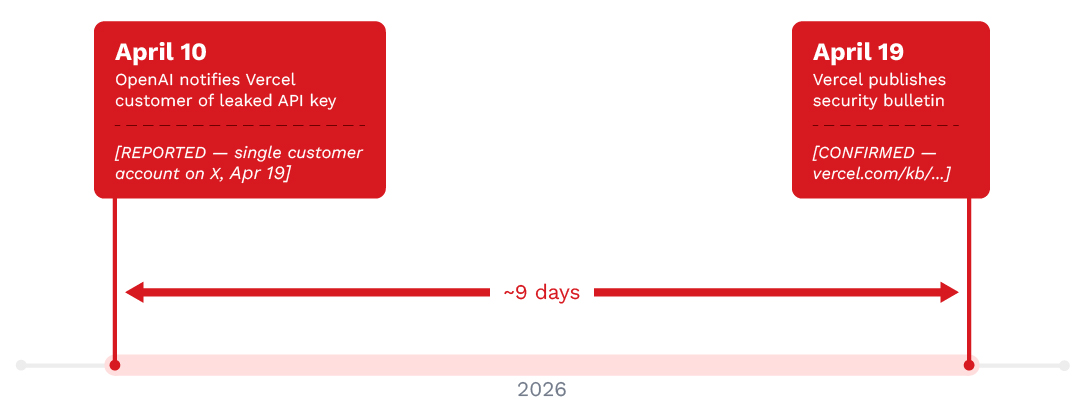

Disclosure timeline anomaly

A public reply to Guillermo Rauch's April 19 thread on X surfaced a timeline detail that deserves independent attention. A Vercel customer, Andrey Zagoruiko, reported receiving a leaked-key notification from OpenAI on April 10, 2026—for an API key that, according to the customer, had never existed outside Vercel.

OpenAI's leaked-credential detection system typically triggers when an API key is found in a public location where it should not appear (e.g., GitHub, paste sites, and similar sources). The pathway from a Vercel environment variable to an OpenAI notification is not trivially explained. Notably, the date creates a nine-day window between the earliest public evidence of exposure and Vercel's disclosure.

Figure 3. Disclosure timeline anomaly showing a nine‑day gap between apparent credential exposure and public notification.

What the 9-day gap means and what it does not

It is important to note that this is a single public report, not a forensic finding. It should not be interpreted as proof that Vercel knew about the compromise on April 10.

It is, however, evidence that at least one credential was detected in the wild before customers were formally notified to rotate secrets. This distinction matters for three audiences:

- Regulators: Under GDPR, the 72-hour breach notification clock starts when a controller becomes aware of a breach. The question of when Vercel became aware is now public.

- Auditors: SOC 2 and ISO 27001 assessors will examine the detection-to-notification latency as part of continuous-monitoring evidence.

- Customers: Organizations whose credentials may have been exposed cannot assume the exposure window ended on April 19. It may have begun being actively exploited well before.

From an incident-response planning perspective, this data point also validates a practical point: unsolicited leaked-credential notifications from providers such as OpenAI, Anthropic, GitHub, AWS, Stripe, and the like are now a primary early-warning channel for platform breaches. Security teams should treat them as high-priority signals, not routine noise.

AI-accelerated tradecraft (CEO Assessment)

In his April 19 thread on X, Vercel CEO Guillermo Rauch explicitly stated:

“We believe the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI. They moved with surprising velocity and in-depth understanding of Vercel.”

This is a noteworthy on-record claim from a CEO of an affected platform and should be evaluated carefully. Attribution based on "velocity" is inherently interpretive, but it warrants attention for several reasons which we discuss in this section.

What "AI-accelerated" could plausibly look like in evidence

If Rauch’s assessment reflects something real rather than post-hoc rationalization, the underlying forensic signals would likely include one or more of the following:

- Enumeration speed that exceeds manual pace. Scripting alone accounts for some of this, but LLM-driven reconnaissance can parallelize schema discovery, endpoint probing, and credential format recognition faster than manual query construction.

- Contextual query construction. Queries that appear aware of Vercel-specific terminology (project slugs, deployment target names, env var naming conventions) without obvious prior reconnaissance.

- Adaptive behavior in response to errors. LLM-assisted attackers tend to recover from API errors and rate-limits more fluently than static scripts, shifting strategy on the fly.

- Prompt-engineered social artifacts. Phishing lures, commit messages, or support tickets that read as locally authentic rather than translated or templated.

Why this matters beyond the Vercel incident

Regardless of whether Rauch's assessment holds up to formal forensic review, the category itself—AI-augmented adversary operations—is no longer simply speculative. Microsoft's April 2026 publication on AI-enabled device-code phishing (Storm-2372 successor campaigns) documented live threat actors using generative AI for dynamic code generation, hyper-personalized lures, and backend automation orchestration. The implication is that telemetry baselines calibrated against human-paced attacker behavior may generate false negatives against AI-accelerated operators.

Detection-engineering implication

If AI-accelerated attackers compress the timeline of enumeration and lateral movement, detection rules tuned on dwell-time and velocity thresholds from older incident data may under-alert. In particular, teams should consider revisiting thresholds on: unique-resource enumeration rate per session, error-to-success ratio recovery curves, and diversity of query patterns within a short window.
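As one way to make the first of these thresholds concrete, the sketch below computes the peak number of distinct resources a session touches within a sliding time window and flags sessions that exceed a limit. The event tuple layout, the five-minute window, and the limit of 50 are assumptions chosen for illustration; real values would come from your own telemetry baselines.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def sessions_exceeding_rate(events, window=timedelta(minutes=5), max_unique=50):
    """Flag sessions that touch more than `max_unique` distinct resources
    within any sliding window of length `window`.

    `events` is an iterable of (session_id, timestamp, resource_id) tuples.
    Returns a dict mapping flagged session IDs to their peak distinct count.
    """
    by_session = defaultdict(list)
    for session_id, ts, resource in events:
        by_session[session_id].append((ts, resource))

    flagged = {}
    for session_id, items in by_session.items():
        items.sort(key=lambda item: item[0])
        counts = defaultdict(int)  # resource -> occurrences inside the window
        start = 0
        peak = 0
        for ts, resource in items:
            counts[resource] += 1
            # Drop events from the left until the window spans <= `window`.
            while ts - items[start][0] > window:
                old = items[start][1]
                counts[old] -= 1
                if counts[old] == 0:
                    del counts[old]
                start += 1
            peak = max(peak, len(counts))
        if peak > max_unique:
            flagged[session_id] = peak
    return flagged


if __name__ == "__main__":
    # Hypothetical burst: 60 distinct projects touched in one minute.
    base = datetime(2026, 4, 1, 12, 0, 0)
    burst = [("sess-1", base + timedelta(seconds=i), f"project-{i}") for i in range(60)]
    print(sessions_exceeding_rate(burst))
```

Measuring distinct resources per window, rather than raw request counts, is a deliberate choice here: enumeration is characterized by breadth, and a busy but legitimate session that repeatedly reads the same few resources should not trip the rule.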

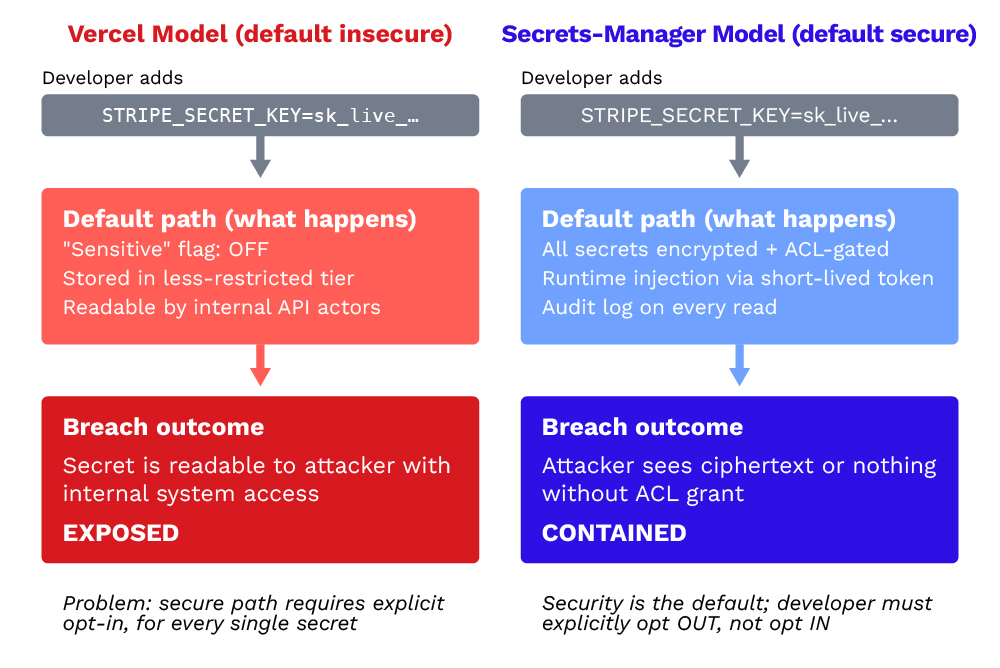

The environment variable design problem

The most consequential aspect of this breach is not the initial access vector — OAuth compromises are a known and studied risk. It is Vercel's environment variable sensitivity model, which created a default-insecure configuration for customer secrets.

Figure 4. The environment variable design problem, comparing default‑insecure secrets‑manager models with secure‑by‑default approaches.

How Vercel environment variables worked at the time of the breach

Vercel projects use environment variables to inject configuration and secrets into serverless functions and build processes. These variables have a "sensitive" flag that controls access restrictions, as seen in Table 2.

| Property | Default (Non-sensitive) | Sensitive |
|---|---|---|
| Default state | ON (all new vars) | Must be explicitly enabled |
| Visible in dashboard | Yes | Masked after creation |
| Accessible via internal APIs | Yes | Restricted |
| Encrypted at rest | Yes (according to Rauch) | Yes, with additional restrictions |
| Accessible to attacker in this breach | Yes | Appears not |

Table 2. Comparison of Vercel environment variable handling based on sensitivity flag.

The critical design choice

The sensitive flag is off by default. Every DATABASE_URL, API_KEY, STRIPE_SECRET_KEY, or AWS_SECRET_ACCESS_KEY added by a developer who did not explicitly toggle this flag was stored without the additional restrictions applied to sensitive variables and remained readable through Vercel's internal access model.

Any security control that requires explicit opt-in for every individual secret, with no guardrails or defaults, will have a low adoption rate in practice.
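Until a platform provides such guardrails, teams can approximate one themselves. The sketch below flags variables whose names look like credentials but that are not marked sensitive. The name pattern and the record shape (`key` plus a boolean `sensitive`) are hypothetical; they do not correspond to a documented Vercel export format.

```python
import re

# Name fragments that usually indicate a credential. This pattern is an
# assumption for illustration, not a Vercel feature.
SECRET_NAME = re.compile(
    r"(SECRET|TOKEN|PASSWORD|PASSWD|PRIVATE_KEY|API_KEY|ACCESS_KEY|DATABASE_URL|_DSN$)",
    re.IGNORECASE,
)


def unprotected_secrets(env_vars):
    """Return names that look like credentials but are not marked sensitive.

    `env_vars` is an iterable of dicts with a `key` (str) and an optional
    `sensitive` (bool) field; a missing flag is treated as not sensitive.
    """
    return [
        var["key"]
        for var in env_vars
        if SECRET_NAME.search(var["key"]) and not var.get("sensitive", False)
    ]


# Hypothetical inventory, for illustration only.
SAMPLE_ENV = [
    {"key": "DATABASE_URL", "sensitive": False},
    {"key": "STRIPE_SECRET_KEY", "sensitive": True},
    {"key": "NEXT_PUBLIC_SITE_NAME", "sensitive": False},
    {"key": "OPENAI_API_KEY"},
]

if __name__ == "__main__":
    print(unprotected_secrets(SAMPLE_ENV))
```

Name matching is a heuristic and will miss credentials stored under unconventional names, so a check like this complements, rather than replaces, marking every secret as sensitive.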

Vercel's response

Rauch confirmed that Vercel has already rolled out dashboard changes: an overview page for environment variables and an improved UI for sensitive variable creation and management. These changes improve discoverability, but as of this writing do not change the default — developers must still opt in per variable. Whether Vercel will flip the default remains an open question that customers should press on.

Comparison to industry peers

The industry trend is toward purpose-built secret storage, such as Vault, AWS Secrets Manager, Doppler, and Infisical, rather than environment variable stores with sensitivity tiers. This breach validates that architectural choice.

Table 3 summarizes how Vercel’s environment variable based approach compares to common practices among similar platforms.

| Platform | Default secret handling | Auto-detection |
|---|---|---|
| Vercel | Non-sensitive by default; manual flag | No |
| AWS SSM Parameter Store | Supports SecureString type | No (but distinct API) |
| HashiCorp Vault | All secrets encrypted with ACL | N/A (purpose-built) |
| GitHub Actions | All secrets masked in logs | No (but separate secrets UI) |
| Netlify | Environment variables with secret toggle | No |

Table 3. Comparison of Vercel’s environment variable–based secret handling with industry peer platforms that employ dedicated secret management systems.

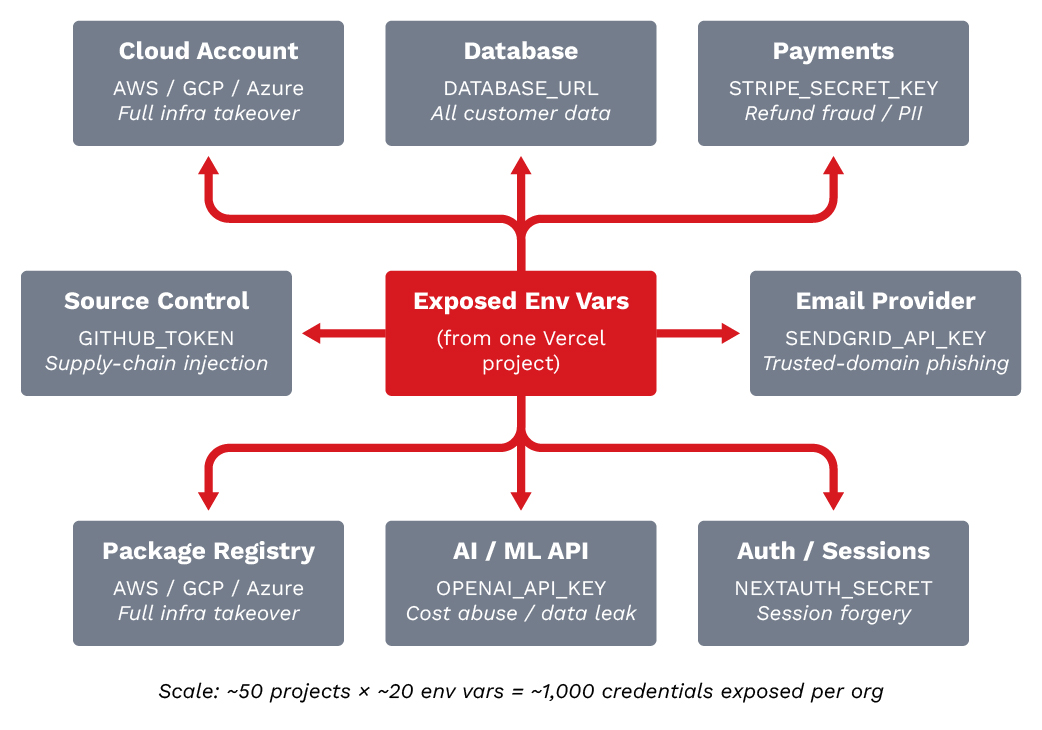

Credential fan-out: Quantifying downstream risk

The term “credential fan-out” describes how a single platform breach cascades into exposure across every downstream service authenticated by credentials stored on that platform.

Figure 5. Illustration of credential fan-out and how one platform breach can turn into many

For this particular case, we summarize in Table 4 what Vercel project environment variables may typically include and their downstream impact.

| Category | Example variables | Downstream impact |
|---|---|---|
| Database | DATABASE_URL, POSTGRES_PASSWORD | Full data access |
| Cloud | AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY | Cloud account compromise |
| Payment | STRIPE_SECRET_KEY, STRIPE_WEBHOOK_SECRET | Financial data, refund fraud |
| Auth | AUTH0_SECRET, NEXTAUTH_SECRET | Session forgery, account takeover |
| Email | SENDGRID_API_KEY, POSTMARK_TOKEN | Phishing from trusted domains |
| Monitoring | DATADOG_API_KEY, SENTRY_DSN | Telemetry manipulation |
| Source | GITHUB_TOKEN, NPM_TOKEN | Supply chain injection |
| AI/ML | OPENAI_API_KEY, ANTHROPIC_API_KEY | API abuse, cost generation |

Table 4. Environment variables commonly stored in Vercel projects and the potential downstream impact if exposed.

A single Vercel project commonly contains 10 to 30 environment variables. At organizational scale, a portfolio of 50 projects could hold 500 to 1,500 credentials within the platform. In this incident, the attacker accessed a limited subset of customer projects, but each exposed credential is a potential pivot point into an entirely separate system with its own blast radius.
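The estimate above is simple multiplication, and the same calculation can be applied to any portfolio size. The helper below is a small sketch; the function name is hypothetical, and the default of 10 to 30 variables per project is taken from the figures in this section.

```python
def credential_exposure_range(projects, vars_per_project=(10, 30)):
    """Return the (low, high) count of credentials stored across a portfolio,
    given a per-project range of environment variables."""
    low, high = vars_per_project
    return projects * low, projects * high


if __name__ == "__main__":
    low, high = credential_exposure_range(50)
    print(f"50 projects: {low} to {high} stored credentials")
```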

This is the multiplier that elevates a platform breach from a confidentiality event into a potential cascade across the software supply chain.

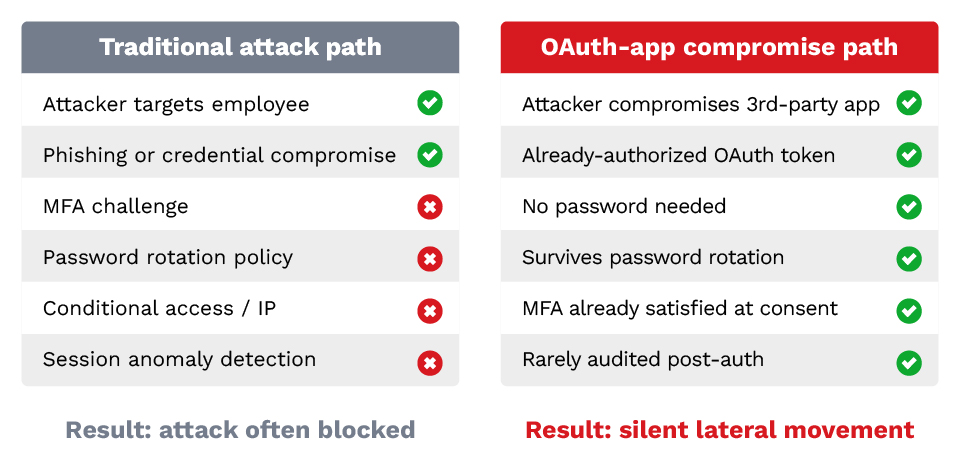

Why OAuth trust relationships bypass perimeter defenses

A fundamental reason this attack persisted for approximately two months is that OAuth-based intrusion bypasses most of the controls that would catch a traditional credential-based attack.

Every defensive control in the left column is something security teams rely on to detect or block account compromise. Every one of those controls is either irrelevant or already satisfied in the OAuth-app compromise path. This asymmetry is the reason OAuth governance is emerging as a distinct security discipline, separate from identity and access management.

Figure 6. Comparison of traditional credential based attack paths and OAuth application compromise, illustrating how OAuth trust relationships bypass perimeter security controls and enable silent lateral movement.

OAuth governance as a vendor-risk function

Most organizations treat OAuth grants as a developer self-service problem: each employee authorizes the tools they need, with minimal central review. This incident argues OAuth grants should be treated as third-party risk management — every authorized OAuth app is effectively a vendor with persistent access to corporate data, and should be vendor-reviewed, periodically re-authorized, and monitored for anomalous use.

Threat actor claims and attribution

Threat actor claims on underground forums are inherently unreliable. The following is documented for awareness and threat tracking, not as confirmed fact. Attribution in breach scenarios is notoriously difficult, and forum claims are frequently exaggerated, fabricated, or made by parties tangentially related to an incident.

ShinyHunters-affiliated claims

A threat actor claiming affiliation with the ShinyHunters group posted on BreachForums alleging possession of Vercel data.

| Claimed data | Quantity |
|---|---|
| Employee records | ~580 |
| Source code repositories | Not specified |
| API keys and internal tokens | Not specified |
| GitHub and NPM tokens | Not specified |
| Internal communications | Not specified |
| Linear workspace access | Not specified |

Table 5. Summary of claimed data and their quantity, all of which remain unverified.

Several factors complicate attribution of the incident to the actor claiming ShinyHunters affiliation:

- Known ShinyHunters members have publicly denied involvement to BleepingComputer.

- A $2M ransom demand was allegedly communicated via Telegram — a common monetization pattern for both legitimate and fabricated breach claims.

- The ShinyHunters brand has been used by multiple, potentially unrelated actors since the group's original campaigns.

- Vercel's security bulletin does not reference these claims; Rauch's thread addresses the attack chain but not the forum posting directly.

Supply chain release path: Vercel's position

Rauch directly addressed the highest-impact scenario, stating: “We've analyzed our supply chain, ensuring Next.js, Turbopack, and our many open source projects remain safe for our community.”

Independent verification of release-path integrity is ongoing at the time of writing. Organizations using Next.js, Turbopack, or other Vercel open source projects should continue to monitor package integrity signals (checksums, signing, provenance attestations) as standard practice.
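Of the integrity signals listed, checksum comparison is the simplest to automate. The sketch below hashes a downloaded artifact with SHA-256 and compares it to an expected digest obtained from a trusted source. The function names are hypothetical, and the sketch covers checksums only, not signature or provenance verification.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, read in chunks so large
    artifacts do not need to fit in memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path, expected_hex):
    """Compare a file's digest with an expected hex digest.

    The expected value must come from a source independent of the download
    itself, otherwise the comparison proves nothing.
    """
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())
```

A checksum only confirms that the artifact matches what the publisher listed. If the publishing account itself is compromised, the listed checksum will match the malicious artifact, which is why signing and provenance attestations are listed alongside it.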

Without independent verification of the forum-claimed data, those claims should be treated as unconfirmed. The OAuth-based attack chain described by Vercel is technically sound and does not require the scope of access claimed by the forum poster, suggesting the claims may be exaggerated, may represent a separate unrelated incident, or may be fabricated.

MITRE ATT&CK Mapping

The confirmed attack chain maps cleanly to established MITRE ATT&CK techniques, as summarized in Table 6. The mapping reflects behaviors explicitly described in Vercel’s disclosure and aligns with well‑understood OAuth abuse patterns rather than novel exploitation.

| Tactic | Technique | ID | Application |
|---|---|---|---|
| Initial Access | Trusted Relationship | T1199 | Context.ai OAuth app as trusted third party |
| Persistence | Application Access Token | T1550.001 | OAuth token survives password rotation |
| Credential Access | Valid Accounts | T1078 | Compromised employee Workspace credentials |
| Discovery | Account Discovery | T1087 | Internal system and project enumeration |
| Credential Access | Unsecured Credentials: Credentials in Files | T1552.001 | Non-sensitive env vars accessible |
| Lateral Movement | Valid Accounts: Cloud Accounts | T1078.004 | Potential use of exposed cloud credentials |
| Collection | Data from Information Repositories | T1213 | Env var enumeration across projects |

Table 6. MITRE ATT&CK technique mapping for the Vercel incident.

Based on this mapping, the pivot from OAuth application access to internal system access (T1199 to T1078) is the highest-value detection point.

Organizations should therefore monitor for anomalous OAuth application behavior, particularly applications accessing resources outside their expected scope or from unexpected IP ranges.
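A minimal sketch of that monitoring logic follows, assuming OAuth activity events have been normalized into dictionaries with `app`, `ip`, and `scope` fields and that a per-application baseline of expected networks and scopes exists. Both the event shape and the baseline layout are hypothetical; the addresses used are reserved documentation ranges.

```python
import ipaddress


def anomalous_oauth_events(events, baseline):
    """Return (event, reasons) pairs for events outside an app's profile.

    `baseline` maps app name -> {"networks": [CIDR strings], "scopes": set}.
    Apps without a baseline entry are reported rather than silently allowed.
    """
    findings = []
    for event in events:
        profile = baseline.get(event["app"])
        if profile is None:
            findings.append((event, ["app has no baseline"]))
            continue
        reasons = []
        address = ipaddress.ip_address(event["ip"])
        networks = [ipaddress.ip_network(cidr) for cidr in profile["networks"]]
        if not any(address in network for network in networks):
            reasons.append("unexpected source IP")
        if event["scope"] not in profile["scopes"]:
            reasons.append("scope outside expected set")
        if reasons:
            findings.append((event, reasons))
    return findings


# Hypothetical baseline and events, for illustration only.
SAMPLE_BASELINE = {
    "Example Analytics": {
        "networks": ["203.0.113.0/24"],
        "scopes": {"drive.readonly"},
    }
}
SAMPLE_EVENTS = [
    {"app": "Example Analytics", "ip": "203.0.113.10", "scope": "drive.readonly"},
    {"app": "Example Analytics", "ip": "198.51.100.7", "scope": "gmail.readonly"},
]

if __name__ == "__main__":
    for event, reasons in anomalous_oauth_events(SAMPLE_EVENTS, SAMPLE_BASELINE):
        print(event["app"], event["ip"], reasons)
```

The sketch treats an application with no baseline as a finding in its own right, on the reasoning that an unreviewed grant is exactly the condition this incident exploited.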

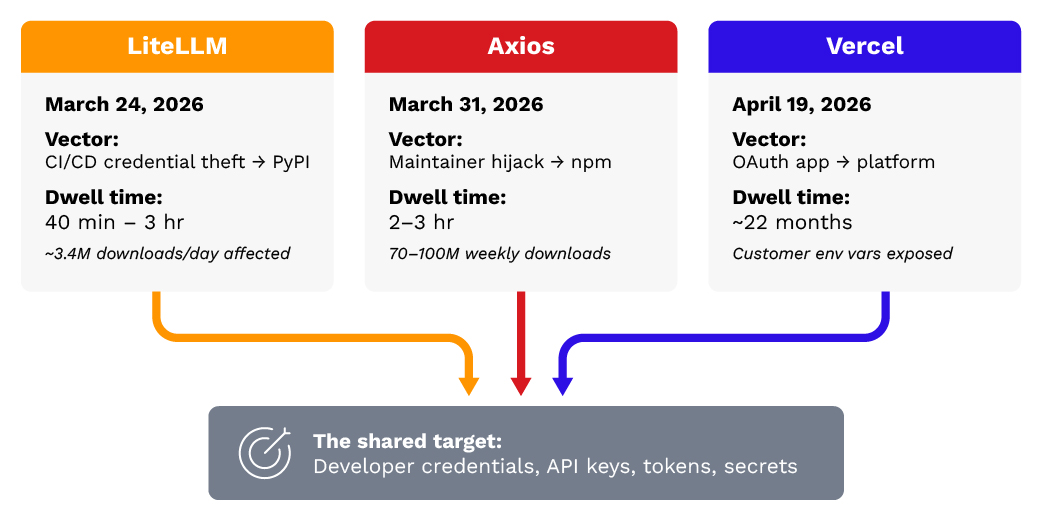

The supply chain siege: LiteLLM, Axios and a converged pattern

The Vercel breach did not occur in isolation. The period from March to April 2026 has seen an unprecedented concentration of software supply chain attacks, suggesting either coordinated campaign activity or—more likely—convergent discovery by multiple threat actors of the same structural weakness: the trust boundaries between package registries, CI/CD systems, OAuth providers, and deployment platforms.

Figure 7. Convergence of three distinct supply‑chain attack vectors on a single target: developer‑stored credentials and secrets.

March 24, 2026: LiteLLM PyPI supply chain compromise

Malicious PyPI packages litellm versions 1.82.7 and 1.82.8 were published using stolen CI/CD publishing credentials from Trivy (Aqua Security's vulnerability scanner). The attack targeted LiteLLM, a widely-used LLM proxy with ~3.4 million daily downloads.

- Initial access: Attacker (tracked as "TeamPCP") compromised Trivy's CI/CD pipeline credentials, which had PyPI publishing permissions.

- Payload: Three-stage backdoor targeting 50+ credential types across major cloud providers, with Kubernetes DaemonSet persistence for lateral movement.

- Dwell time: Malicious packages were live for approximately 40 minutes to 3 hours before detection and removal.

- CVE involved: CVE-2026-33634.

March 31, 2026: Axios npm supply chain compromise

The npm package axios (70–100 million weekly downloads) was compromised via maintainer account hijacking. Malicious versions 1.14.1 and 0.30.4 injected a dependency on plain-crypto-js@4.2.1, which contained a cross-platform Remote Access Trojan (RAT).

- Initial access: Maintainer account hijacked (mechanism not disclosed; credential stuffing or phishing suspected).

- Scale: 135 endpoints detected contacting attacker command-and-control infrastructure.

- Dwell time: 2–3 hours before detection.

- Attribution: Microsoft attributed the attack to Sapphire Sleet, a North Korean state-sponsored threat actor.
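Teams that want to check their own projects for the versions named above can scan lockfiles directly. The sketch below reads a `package-lock.json` that uses the `packages` map (lockfileVersion 2 or 3) and reports any entry matching the versions listed in this section. The function name and the `KNOWN_BAD` table are illustrative; older lockfiles that only carry a `dependencies` tree are not handled.

```python
import json

# Versions named in this analysis as malicious.
KNOWN_BAD = {
    "axios": {"1.14.1", "0.30.4"},
    "plain-crypto-js": {"4.2.1"},
}


def find_bad_packages(lockfile_text):
    """Return sorted (path, name, version) hits from a package-lock.json
    that uses the `packages` map (lockfileVersion 2 or 3)."""
    lock = json.loads(lockfile_text)
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or
        # "node_modules/a/node_modules/axios"; the root project key is "".
        name = path.rpartition("node_modules/")[2]
        if meta.get("version") in KNOWN_BAD.get(name, ()):
            hits.append((path, name, meta["version"]))
    return sorted(hits)


if __name__ == "__main__":
    import sys

    # Usage: python scan_lock.py path/to/package-lock.json
    with open(sys.argv[1], encoding="utf-8") as handle:
        for hit in find_bad_packages(handle.read()):
            print(hit)
```

Scanning the resolved lockfile rather than `package.json` matters because the malicious dependency was pulled in transitively; a project that never declared it directly would still show it in the resolved tree.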

The convergence pattern

Three attacks in under four weeks. Three different vectors. The same target: the credentials and secrets that developers store in their toolchains.

| Incident | Date | Vector | Target asset | Dwell time |
|---|---|---|---|---|
| LiteLLM | Mar 24, 2026 | CI/CD credential theft → PyPI | Developer credentials, API keys | 40 min – 3 hrs |
| Axios | Mar 31, 2026 | Maintainer account hijack → npm | Developer workstations (RAT) | 2–3 hrs |
| Vercel | Apr 19, 2026 | OAuth app compromise → platform | Customer env vars (credentials) | ~2 months |

Table 7. Summary of recent supply chain adjacent incidents targeting developer credentials and secret storage layers.

What previous platform breaches reveal

The Vercel breach follows a well-documented pattern of platform-level compromises that expose customer secrets at scale.

Codecov bash uploader breach (January – April 2021)

What happened: Attackers modified Codecov's Bash Uploader script (used in CI/CD pipelines) to exfiltrate environment variables from customers' CI environments. The compromise went undetected for approximately two months. More than 29,000 customers were potentially affected, including Twitch, HashiCorp, and Confluent.

Parallel to Vercel: Both incidents expose customer credentials stored as environment variables through a platform compromise.

CircleCI security incident (January 2023)

What happened: An attacker stole an employee's SSO session token via malware on a personal device, used it to access internal CircleCI systems, and exfiltrated customer secrets and encryption keys. CircleCI recommended all customers rotate every secret stored on the platform.

Parallel to Vercel: Nearly identical pattern — employee account compromise → internal system access → customer secret exfiltration.

Snowflake customer credential attacks (May–June 2024)

Threat actor UNC5537 used credentials obtained from infostealer malware to access Snowflake customer accounts that lacked MFA. Over 165 organizations were affected, including Ticketmaster, Santander Bank, and AT&T.

Okta support system breach (October 2023)

Attackers accessed Okta's customer support case management system using stolen credentials, viewing HAR files that contained session tokens for Okta customers including Cloudflare, 1Password, and BeyondTrust.

Pattern summary

The pattern is clear. Platform-level access to customer secrets is a systemic risk that has been exploited repeatedly across CI/CD, identity, data warehouse, and deployment platforms. Each incident follows the same arc: initial access through a trust relationship or credential, lateral movement to internal systems, and exfiltration of customer secrets at varying scale — from targeted subsets to platform-wide exposure.

| Incident | Year | Initial vector | Customer asset exposed | Detection lag |
|---|---|---|---|---|
| Codecov | 2021 | Supply chain (script modification) | CI env vars | ~2 months |
| Okta | 2023 | Stolen support credentials | Session tokens (HAR files) | Weeks |
| CircleCI | 2023 | SSO session token theft | Secrets + encryption keys | Weeks |
| Snowflake | 2024 | Infostealer credentials (no MFA) | Customer data | Months |
| Vercel | 2026 | OAuth app compromise (via infostealer at vendor) | Deployment env vars | ~2 months |

Table 8. Pattern of recent platform level breaches illustrating repeated exposure of customer secrets following trust based initial access and prolonged detection latency.

What remains unknown

Despite the volume of public reporting, executive statements, and third party commentary surrounding this incident, material gaps remain in the public record. A rigorous analysis requires not only examining what is known but explicitly acknowledging what has not been disclosed or independently verified.

The following unresolved questions represent significant gaps in publicly available information that are directly relevant to understanding the root cause, scope, and impact of this incident:

- When Vercel first detected anomalous activity. The April 10 OpenAI notification received by a Vercel customer raises this question sharply. Vercel has not published an internal-detection timeline.

- Why there was a nine-day gap between the earliest public evidence of credential abuse and Vercel's disclosure. Multiple explanations are plausible (coordinated disclosure, ongoing investigation, customer notifications in progress); the public record does not resolve which applies.

- Number of affected customers. Rauch described the impact as "quite limited"; a specific count has not been disclosed.

- Whether the ShinyHunters forum claims represent the same attacker. Whether the claims match the confirmed attack chain or a separate incident remains unverified.

- Context.ai's current status and downstream-customer notifications. Whether Context.ai has published its own incident report or notified other customers is unknown.

- Full scope of internal access. Vercel has described the customer impact as limited, but the precise number of affected teams, projects, and environment variables has not been publicly disclosed. Whether the attacker accessed internal systems beyond customer environment variables also remains unconfirmed.

Detection and hunting guidance

This section provides practical detection and hunting guidance for organizations potentially affected by the incident.

For Vercel customers (Immediate)

1. Audit all environment variables by running the following commands in each linked Vercel project

# List env vars for the currently linked Vercel project, one environment at a time
# (repeat for every project; the CLI operates on the linked project only)
vercel env ls production
vercel env ls preview
vercel env ls development

# Check which variables are NOT marked as sensitive
# (Vercel CLI does not currently expose the sensitive flag;
# check via dashboard or API)
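Because the CLI listing does not show the sensitive flag, one option is to read each project's environment variables through the Vercel REST API and flag the entries that are not marked sensitive. The following is a minimal sketch, not Vercel's own tooling. It assumes the API response is a JSON object with an `envs` list whose items carry `key`, `type`, and `target` fields, and that sensitive variables report the type `sensitive`; validate those names against the current Vercel API reference before relying on them. The function works on an already-parsed response, so fetching and authentication are left to the reader.

```python
from typing import Any

# Assumption: each entry in the response's "envs" list carries "key", "type",
# and "target", and sensitive variables report type "sensitive".
# Validate these names against the current Vercel REST API reference.
SENSITIVE_TYPE = "sensitive"


def find_non_sensitive(env_response: dict[str, Any]) -> list[dict[str, Any]]:
    """Return the env var entries that are not marked as sensitive."""
    flagged = []
    for entry in env_response.get("envs", []):
        if entry.get("type") != SENSITIVE_TYPE:
            flagged.append(
                {
                    "key": entry.get("key"),
                    "type": entry.get("type"),
                    "target": entry.get("target", []),
                }
            )
    return flagged


if __name__ == "__main__":
    # Hypothetical response, shaped like the assumed API output.
    sample = {
        "envs": [
            {"key": "DATABASE_URL", "type": "encrypted", "target": ["production"]},
            {"key": "STRIPE_SECRET_KEY", "type": "sensitive", "target": ["production"]},
            {"key": "NEXT_PUBLIC_SITE_NAME", "type": "plain", "target": ["preview"]},
        ]
    }
    for item in find_non_sensitive(sample):
        print(f"{item['key']}: type={item['type']} targets={item['target']}")
```

Every flagged entry that holds a credential, token, or key is a rotation candidate; entries that hold genuinely public configuration can be ignored after review.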

2. Search for unauthorized usage of exposed credentials

- Query cloud provider CloudTrail/audit logs for API calls using exposed access keys from unexpected IP ranges or user agents.

- AWS CloudTrail: Filter on eventSource containing sts.amazonaws.com, iam.amazonaws.com, s3.amazonaws.com. Search for userIdentity.accessKeyId matching any rotated Vercel-stored access key. Flag any sourceIPAddress outside your known CIDR ranges or any userAgent containing python-requests, curl, Go-http-client, or unfamiliar automation strings. Time window: February 2026 – present.

- GCP Audit Logs: Query protoPayload.authenticationInfo.principalEmail for service accounts whose keys were stored in Vercel. Filter protoPayload.requestMetadata.callerIp against your known ranges. Look for protoPayload.methodName containing storage.objects.get, compute.instances.list, or iam.serviceAccountKeys.create from unexpected sources.

- Azure Activity Logs: Filter on caller matching any application ID or service principal whose credentials were in Vercel env vars. Flag callerIpAddress outside expected ranges. Priority queries: Microsoft.Storage/storageAccounts/listKeys, Microsoft.Compute/virtualMachines/write, Microsoft.Authorization/roleAssignments/write.

- Database access logs: For every database whose connection string was stored as a Vercel environment variable, query connection logs for the full exposure window (February 2026 – April 2026). Search for connections originating from IPs outside your application's known egress ranges (Vercel edge IPs, your VPN, your office). Flag connections using the exposed credentials that occurred outside normal deployment windows. For PostgreSQL: review server logs written with log_connections enabled for historical connections, and pg_stat_activity for sessions that are currently open. For MySQL: query the general log or audit plugin. For MongoDB Atlas: query the Project Activity Feed for DATA_EXPLORER and CONNECT events from unknown IPs.

- Payment processors: For Stripe, check the Dashboard → Developers → Logs for API calls using the exposed key. Filter for source_ip outside your servers. Look for /v1/charges, /v1/transfers, /v1/payouts, and /v1/customers calls you don't recognize. For Braintree/Adyen, query the equivalent API transaction logs. Priority: any api_key that was stored in Vercel as a non-sensitive env var and has not yet been rotated.

- Email sending services: Audit sending logs for messages your application did not generate.

- Check for unsolicited leaked-credential notifications from OpenAI, Anthropic, GitHub, AWS, Stripe, and similar providers during the exposure window. These automated detection systems are now a primary early-warning channel for this class of breach.
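As one way to apply the AWS CloudTrail filter from step 2, the sketch below scans exported CloudTrail records for calls made with an exposed access key and flags those from unknown source IPs or with the user agents named above. It is a hedged illustration rather than a complete detection: it uses the standard CloudTrail record fields (`userIdentity.accessKeyId`, `sourceIPAddress`, `userAgent`, `eventSource`, `eventName`, `eventTime`), while the function names and the shape of the findings are assumptions. Filtering by event source and time window is left out for brevity.

```python
import ipaddress
from typing import Any

# Substrings taken from the guidance above; extend with your own list.
SUSPICIOUS_AGENTS = ("python-requests", "curl", "go-http-client")


def is_known_ip(source_ip: str, known_cidrs: list[str]) -> bool:
    """True if source_ip parses as an IP inside one of the known CIDR ranges."""
    try:
        addr = ipaddress.ip_address(source_ip)
    except ValueError:
        # CloudTrail can record a service hostname instead of an IP address
        # for calls made by AWS services; treat it as unknown and review it.
        return False
    return any(addr in ipaddress.ip_network(cidr) for cidr in known_cidrs)


def flag_events(
    records: list[dict[str, Any]],
    exposed_key_ids: set[str],
    known_cidrs: list[str],
) -> list[dict[str, Any]]:
    """Return records that used an exposed key from an unexpected source."""
    findings = []
    for rec in records:
        key_id = rec.get("userIdentity", {}).get("accessKeyId")
        if key_id not in exposed_key_ids:
            continue
        reasons = []
        if not is_known_ip(rec.get("sourceIPAddress", ""), known_cidrs):
            reasons.append("unknown_source_ip")
        agent = rec.get("userAgent", "").lower()
        if any(marker in agent for marker in SUSPICIOUS_AGENTS):
            reasons.append("suspicious_user_agent")
        if reasons:
            findings.append(
                {
                    "eventTime": rec.get("eventTime"),
                    "eventSource": rec.get("eventSource"),
                    "eventName": rec.get("eventName"),
                    "accessKeyId": key_id,
                    "sourceIPAddress": rec.get("sourceIPAddress"),
                    "reasons": reasons,
                }
            )
    return findings
```

A call such as `flag_events(records, {"AKIA..."}, ["10.0.0.0/8"])` takes the `Records` list from an exported CloudTrail log file; the key ID and CIDR shown here are placeholders for your own values.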

3. Rotate AND redeploy

A critical operational detail is that rotating a Vercel environment variable does not retroactively invalidate old deployments. According to Vercel's documentation, prior deployments continue using the old credential value until they are redeployed.

Rotation without redeploy leaves the compromised credential live in any previous deployment artifact that is still reachable. Every credential rotation must be followed by a redeploy of every environment that used that variable, or the old deployments must be explicitly disabled.

- Priority order for rotation:

- Cloud provider credentials (AWS, GCP, Azure).

- Database connection strings.

- Payment processor keys.

- Authentication secrets (JWT secrets, session keys).

- Third-party API keys.

- Monitoring and logging tokens.
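The rotate-and-redeploy rule above can be checked mechanically once you have an inventory of deployments and rotation times. The sketch below is illustrative only: the `Deployment` record and its fields are hypothetical and would need to be populated from your own deployment inventory, and it assumes a deployment keeps the variable values that existed when it was built, as described above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Deployment:
    # Hypothetical record; populate it from your own deployment inventory.
    deployment_id: str
    created_at: datetime
    env_keys: frozenset[str]
    reachable: bool = True


def stale_deployments(
    deployments: list[Deployment],
    rotated_at: dict[str, datetime],
) -> list[tuple[str, str]]:
    """Return (deployment_id, key) pairs built before that key was rotated."""
    stale = []
    for dep in deployments:
        if not dep.reachable:
            # Disabled or deleted deployments no longer serve the old value.
            continue
        for key in sorted(dep.env_keys):
            rotation_time = rotated_at.get(key)
            if rotation_time is not None and dep.created_at < rotation_time:
                stale.append((dep.deployment_id, key))
    return stale


if __name__ == "__main__":
    rotated = {"DATABASE_URL": datetime(2026, 4, 20, tzinfo=timezone.utc)}
    inventory = [
        Deployment("dpl_old", datetime(2026, 4, 1, tzinfo=timezone.utc),
                   frozenset({"DATABASE_URL"})),
        Deployment("dpl_new", datetime(2026, 4, 21, tzinfo=timezone.utc),
                   frozenset({"DATABASE_URL"})),
    ]
    for dep_id, key in stale_deployments(inventory, rotated):
        print(f"{dep_id} still carries the pre-rotation value of {key}")
```

Any pair this returns points to a deployment that should be redeployed or disabled before the rotation can be considered complete.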

For security teams (Proactive)

OAuth application audit — Google Workspace

- Admin Console → Security → API Controls → Third-party app access.

- Review all authorized OAuth applications.

- Flag applications with broad scopes (Drive, Gmail, Calendar).

- Investigate applications from vendors without active business relationships.

- Monitor for OAuth token usage from unexpected IP ranges.

- Search for the known-bad OAuth Client ID: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

Detection logic for SIEM implementation

The following detection patterns map to the confirmed attack chain stages. Each pattern describes the observable behavior, the log source to instrument, and the conditions that should trigger investigation. Organizations should translate these into rules native to their SIEM platform (Sigma, Splunk SPL, KQL, Chronicle YARA-L) after validating field names against their specific log source schemas.

OAuth application anomalies (Stages 1–2)

Monitor Google Workspace token and admin audit logs for three patterns. First, any token refresh or authorization event associated with the known-bad OAuth Client ID (110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com) should trigger an immediate alert, because this is the compromised Context.ai application.

Second, any OAuth application authorization event that grants broad scope (including full mail access, Drive read/write, calendar access) warrants review against your active vendor inventory; applications that are no longer in active business use should be revoked. Third, token usage from any authorized OAuth application where the source IP falls outside your expected corporate and vendor CIDR ranges should be flagged for investigation, as this may indicate token theft or application compromise.
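The three patterns above can be expressed as a small classifier before being translated into a SIEM rule. This is a sketch under stated assumptions: the event is a flattened dictionary with hypothetical field names (`client_id`, `event_name`, `scopes`, `ip_address`) that you would map from your own Workspace log export, and the set of scopes treated as broad is a policy choice rather than an official list.

```python
import ipaddress
from typing import Any

KNOWN_BAD_CLIENT_ID = (
    "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"
)

# Assumption: scopes treated as "broad" here; adjust to your own policy.
BROAD_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar",
}


def _ip_expected(ip: str, expected_cidrs: list[str]) -> bool:
    """True if ip parses and falls inside one of the expected ranges."""
    try:
        addr = ipaddress.ip_address(ip)
    except ValueError:
        return False
    return any(addr in ipaddress.ip_network(cidr) for cidr in expected_cidrs)


def classify_event(event: dict[str, Any], expected_cidrs: list[str]) -> list[str]:
    """Return the alert labels that apply to one flattened OAuth log event."""
    alerts = []
    # Pattern 1: any activity tied to the known-bad client ID.
    if event.get("client_id") == KNOWN_BAD_CLIENT_ID:
        alerts.append("known_bad_client_id")
    # Pattern 2: a new authorization that grants a broad scope.
    granted = set(event.get("scopes", []))
    if event.get("event_name") == "authorize" and BROAD_SCOPES & granted:
        alerts.append("broad_scope_grant")
    # Pattern 3: token usage from outside expected corporate and vendor ranges.
    ip = event.get("ip_address")
    if ip and not _ip_expected(ip, expected_cidrs):
        alerts.append("unexpected_source_ip")
    return alerts
```

Returning labels rather than a single boolean keeps the three patterns separable, so the first can page immediately while the other two feed a review queue.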

Internal system access and lateral movement (Stage 3, T1078)

Once attackers control a compromised Google Workspace account, they pivot into internal systems that trust that identity. Detection should focus on four indicators:

- Anomalous SSO/SAML authentication events. Monitor your identity provider logs for the compromised Workspace account authenticating into internal applications (Vercel dashboard, CI/CD platforms, internal tooling) from unfamiliar IP addresses, geolocations, or device fingerprints — particularly first-time access to systems that account had never previously touched.

- Email and Drive credential harvesting. Review Google Workspace audit logs for bulk email search queries (keywords like "API key," "secret," "token," "password," ".env"), unusual Google Drive file access patterns (opening shared credential stores, engineering runbooks, or infrastructure documentation), and mail forwarding rule creation on the compromised account.

- OAuth-connected internal tool access. The compromised Workspace identity likely had existing OAuth grants to internal tools (Slack, Jira, GitHub, internal dashboards). Monitor those downstream services for session creation or API activity tied to the compromised user that occurs outside normal working hours or from infrastructure inconsistent with the user's historical access pattern.

- Privilege escalation attempts. Watch for the compromised identity requesting elevated permissions, joining new groups or roles, or accessing admin consoles it had not previously used. In Google Workspace specifically, monitor for Directory API calls, delegation changes, or attempts to enumerate other users' OAuth tokens.
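The first indicator, first-time access to systems an account had never previously touched, lends itself to a simple first-seen check. The sketch below assumes you can export historical and recent authentication events as (user, application) pairs from your identity provider; the field names `user` and `app` are hypothetical.

```python
from typing import Any, Iterable


def first_time_access(
    history: Iterable[tuple[str, str]],
    recent: list[dict[str, Any]],
) -> list[dict[str, Any]]:
    """Return recent events whose (user, app) pair never appears in history."""
    seen = set(history)
    findings = []
    for event in recent:
        pair = (event["user"], event["app"])
        if pair not in seen:
            findings.append(event)
            # Report each new pair once, on its first occurrence.
            seen.add(pair)
    return findings


if __name__ == "__main__":
    past = [("alice@example.com", "vercel"), ("alice@example.com", "github")]
    new_events = [
        {"user": "alice@example.com", "app": "vercel", "ip": "192.0.2.10"},
        {"user": "alice@example.com", "app": "admin-console", "ip": "198.51.100.7"},
    ]
    for hit in first_time_access(past, new_events):
        print(f"first-time access: {hit['user']} -> {hit['app']} from {hit['ip']}")
```

A first-seen hit is not proof of compromise on its own; it becomes significant when combined with an unfamiliar IP address, geolocation, or device fingerprint for the same account.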

Environment variable enumeration (Stage 4)

Monitor Vercel team audit logs for unusual patterns of environment variable access. The specific event types will depend on Vercel's audit log schema, but the target behavior is any API call that reads, lists, or decrypts environment variables at a volume or frequency inconsistent with normal deployment activity.

Baseline your normal deployment cadence first (CI/CD pipelines legitimately read environment variables at build time), then alert on access patterns that deviate from that baseline in volume, timing, or source identity. Pay particular attention to any environment variable access originating from user accounts rather than service accounts, or from accounts that do not normally interact with the projects being accessed.
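One simple way to turn this baseline into an alert condition is a per-actor threshold of the historical mean plus a multiple of the standard deviation. The sketch below is an assumption-laden starting point, not a tuned detection: the multiplier `k`, the minimum `floor`, and the input shapes (hourly read counts per actor) are all illustrative choices.

```python
from statistics import mean, pstdev


def read_threshold(baseline_counts: list[int], k: float = 3.0, floor: int = 5) -> float:
    """Alert threshold: mean + k * std of past hourly reads, never below floor."""
    if not baseline_counts:
        # No history for this actor: fall back to the floor.
        return float(floor)
    return max(float(floor), mean(baseline_counts) + k * pstdev(baseline_counts))


def flag_actors(
    observed: dict[str, int],
    baselines: dict[str, list[int]],
    service_accounts: set[str],
    k: float = 3.0,
) -> list[tuple[str, int, list[str]]]:
    """Return (actor, count, reasons) for env var reads that warrant review."""
    findings = []
    for actor, count in sorted(observed.items()):
        reasons = []
        if count > read_threshold(baselines.get(actor, []), k):
            reasons.append("volume_above_baseline")
        if actor not in service_accounts and count > 0:
            # Reads by a user account rather than a pipeline identity.
            reasons.append("user_account_read")
        if reasons:
            findings.append((actor, count, reasons))
    return findings
```

With a baseline of `[2, 3, 2, 3]` reads per hour the threshold stays at the floor of 5, so a pipeline reading 4 variables in an hour is ignored, while a user account with no history reading 40 is flagged on both counts.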

Downstream credential abuse (Stage 5)

For every credential that was stored as a non-sensitive Vercel environment variable during the exposure window (February 2026 – April 2026), query the corresponding service's access logs for usage from unexpected sources. In AWS, this means CloudTrail queries filtered on the specific access key IDs, looking for API calls from IP addresses outside your known application, CI/CD, and corporate ranges.

In GCP and Azure, the equivalent is audit log queries filtered on the relevant service account or application identity. For SaaS APIs (Stripe, OpenAI, Anthropic, SendGrid, Twilio), check the provider's dashboard or API logs for key usage from unrecognized IPs or during time windows when your application was not active. Any credential showing usage that cannot be attributed to your own infrastructure should be treated as compromised, rotated immediately, and investigated for what actions the attacker performed with it.

Third-party credential leak notifications

Configure monitoring for unsolicited leaked-credential notifications from providers that operate automated secret scanning, including but not limited to GitHub (secret scanning partner program), AWS (compromised key detection), OpenAI, Anthropic, Stripe, and Google Cloud. These notifications are now a primary early-warning channel for platform-level credential exposure. Any such notification for a key that exists only in a deployment platform should be treated as a potential indicator of platform compromise, not routine key hygiene noise.

Threat hunting

Google Workspace Admin Console — manual search steps:

- Admin Console → Reports → Audit and Investigation → OAuth Log Events

- Filter: Application Name = "Context.ai" OR Client ID = 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

- Date range: February 2026 – present

- Export all results. Any hits = immediate revocation and incident investigation.

Google Workspace — all third-party OAuth apps with broad scopes:

- Admin Console → Security → API Controls → Third-party app access → Manage Google Services

- Sort by: "App access" → "Unrestricted"

- For each app: verify (a) active vendor relationship exists, (b) scopes are justified by business use, (c) last-used date is recent. Any app not used in 90+ days: revoke.
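The three per-app checks above can be applied consistently across an exported app inventory. The sketch below assumes a hypothetical inventory format in which each app is a dictionary with `name`, `active_vendor`, `scopes_justified`, and `last_used` (a date, or None if never used); the first two are judgments your team records, not values any API provides.

```python
from datetime import date
from typing import Any, Optional


def review_apps(
    apps: list[dict[str, Any]],
    today: date,
    max_idle_days: int = 90,
) -> dict[str, list[str]]:
    """Map each app name to the reasons it fails review (empty list = keep)."""
    decisions = {}
    for app in apps:
        reasons = []
        if not app.get("active_vendor"):
            reasons.append("no_active_vendor_relationship")
        if not app.get("scopes_justified"):
            reasons.append("scopes_not_justified")
        last_used: Optional[date] = app.get("last_used")
        if last_used is None or (today - last_used).days >= max_idle_days:
            reasons.append("idle_too_long")
        decisions[app["name"]] = reasons
    return decisions


if __name__ == "__main__":
    inventory = [
        {"name": "Calendar Sync", "active_vendor": True, "scopes_justified": True,
         "last_used": date(2026, 4, 10)},
        {"name": "Old Analytics", "active_vendor": False, "scopes_justified": True,
         "last_used": date(2026, 1, 1)},
    ]
    for name, reasons in review_apps(inventory, date(2026, 4, 21)).items():
        print(name, "-> revoke" if reasons else "-> keep", reasons)
```

Recording the reasons alongside the decision gives the audit trail needed when an app owner asks why access was revoked.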

Defensive recommendations

This section outlines defensive recommendations based on the confirmed attack tactics from this incident.

Immediate actions (0–48 hours)

- Rotate all Vercel environment variables that were not marked as sensitive, regardless of whether you believe they were accessed. The cost of unnecessary rotation is trivial compared to the cost of a compromised credential.

- Redeploy every environment after rotation — rotation alone does not invalidate old deployments.

- Enable the sensitive flag on all environment variables containing any form of credential, token, key, or secret. Audit every project.

- Audit OAuth application authorizations in your Google Workspace (or Microsoft Entra) admin console. Revoke access for any application that is no longer actively used.

- Review access logs for all services whose credentials were stored as Vercel environment variables, covering the period February 2026 through present.

Short-term hardening (1–4 weeks)

- Migrate secrets to a dedicated secrets manager (HashiCorp Vault, AWS Secrets Manager, Doppler, Infisical). Inject secrets at runtime rather than storing them as platform environment variables.

- Implement OIDC-based authentication for CI/CD and deployment pipelines where supported, eliminating long-lived credentials entirely.

- Deploy OAuth application monitoring — commercial solutions (Nudge Security, Grip Security, Valence Security) or Google Workspace's built-in OAuth app management.

- Establish credential rotation automation — secrets should rotate on a defined schedule (30–90 days) regardless of incident status.

- Treat OAuth grants as vendor relationships — add them to your third-party risk inventory alongside contracted vendors.
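To illustrate what runtime injection means in practice, the sketch below wraps a secret lookup in a short-lived cache so the application reads the current value from a secrets manager instead of a value baked into a deployment. It is a minimal pattern, not any vendor's SDK: `fetch` is a hypothetical callable you would implement with your secrets manager's client, and the five-minute TTL is an arbitrary example.

```python
import time
from typing import Callable, Optional


class RuntimeSecret:
    """Fetch a secret on demand and cache it for a short time-to-live."""

    def __init__(
        self,
        fetch: Callable[[], str],
        ttl_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        # fetch is a hypothetical callable wrapping your secrets manager client.
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._value: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        """Return the cached value, refreshing it once the TTL has elapsed."""
        now = self._clock()
        if self._value is None or now >= self._expires_at:
            self._value = self._fetch()
            self._expires_at = now + self._ttl
        return self._value
```

Because the value is re-read after each TTL window, a rotated credential reaches running code without a redeploy, and nothing long-lived needs to sit in platform environment variables. The identity used to reach the secrets manager still has to be protected, ideally with short-lived, OIDC-issued credentials.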

Architectural changes (1–6 months)

- Adopt a zero-trust posture for environment variables — assume that any secret stored in a deployment platform may be exposed in a platform-level breach. Design systems so that a single credential exposure does not cascade.

- Implement least-privilege scoping for all credentials — database credentials should have minimum required permissions, API keys should be scoped to specific operations, cloud credentials should use role-based temporary credentials rather than long-lived access keys.

- Establish third-party vendor security review for any OAuth application or integration that accesses corporate identity systems. Include periodic re-review of existing authorizations.

- Include PaaS platforms in your SBOM/ASPM inventory — this breach argues deployment platforms should be treated as tier-1 supply-chain dependencies, not external services.

Recommended monitoring

- Audit Google Workspace Admin Console for the known-bad OAuth Client ID listed under Indicators of Compromise.

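- Monitor Vercel audit logs for unexpected environment variable read or list events (for example, env.read or env.list; confirm the exact event names against Vercel's audit log schema).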

- Review CloudTrail, GCP Audit Logs, and Azure Activity Logs for usage of credentials stored as Vercel env vars from unexpected IPs or user agents during February 2026 – April 2026.

- Monitor for any of the LiteLLM or Axios-related IOCs published by their respective advisories if those packages are in your dependency tree.

- Watch for unsolicited leaked-credential notifications from major API providers during the exposure window.

Regulatory and compliance implications

Organizations affected by credential exposure through the Vercel breach should evaluate notification obligations under:

- GDPR (EU): If exposed credentials provided access to systems containing EU personal data, the 72-hour breach notification clock may have started upon confirmation of exposure. The April 10 OpenAI notification raises the question of whether some organizations' awareness predates Vercel's April 19 disclosure.

- CCPA/CPRA (California): Exposure of credentials providing access to consumer data may trigger notification requirements.

- PCI DSS: If payment processor credentials (Stripe, Braintree, Adyen) were exposed, PCI incident response procedures and forensic investigation requirements may apply.

- SOC 2: Organizations with SOC 2 obligations should document the incident, credential rotation actions taken, and updated controls in their continuous monitoring evidence.

- SEC Cybersecurity Rules (8-K): Public companies determining the breach is material have a 4-business-day disclosure obligation.

The challenge is that many organizations may not yet know whether the exposed credentials were actually used for unauthorized access — but regulatory frameworks often trigger on exposure, not confirmed exploitation.

Conclusion

The Vercel breach is not an isolated incident — it is the latest manifestation of a structural vulnerability in how the software industry manages secrets and trust relationships. In the span of three weeks, we have seen:

- LiteLLM: CI/CD credentials stolen → malicious packages harvesting developer secrets at scale.

- Axios: Maintainer account hijacked → RAT deployed to millions of developer environments.

- Vercel: OAuth application compromised → internal access enabling enumeration of a limited subset of customer deployment secrets, with at least one public report suggesting downstream credential abuse detected in the wild prior to disclosure.

Each attack targets a different link in the software supply chain. Together, they paint a picture of an ecosystem where credentials are the universal target and trust relationships are the universal attack surface. The cascade the industry has warned about is no longer purely theoretical.

The defensive path forward is clear, if not easy:

- Stop storing long-lived credentials in platform environment variables. Use dedicated secret managers with runtime injection.

- Stop trusting OAuth applications implicitly. Audit, monitor, and periodically re-authorize.

- Stop assuming your platform provider's internal security posture. Design for the scenario where they are breached.

- Start rotating credentials proactively — and remember to redeploy afterward.

- Treat leaked-credential notifications from third-party providers as high-priority early-warning signals, not routine noise.

The organizations that will weather the next platform breach are those that assumed it would happen and built their credential architecture accordingly.

Indicators of Compromise (IoCs)

Confirmed IoC

| Type | Value | Context |
|---|---|---|
| OAuth Client ID | 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com | Compromised Context.ai OAuth application |