[!WARNING]

Use at your own risk. Cal.diy is the open source community edition of Cal.com and it is intended for users who want to self-host their own Cal.diy instance. It is strictly recommended for personal, non-production use. Please review all installation and configuration steps carefully. Self-hosting requires advanced knowledge of server administration, database management, and securing sensitive data. Proceed only if you are comfortable with these responsibilities.

[!TIP] For commercial, enterprise-ready scheduling infrastructure, use Cal.com rather than Cal.diy. Cal.com is available hosted by us or, by invitation, as an on-prem enterprise deployment: https://cal.com/sales

Cal.diy

The community-driven, open-source scheduling platform.

GitHub · Discussions · Issues · Contributing

About Cal.diy

Cal.diy is the community-driven, fully open-source scheduling platform — a fork of Cal.com with all enterprise/commercial code removed.

Cal.diy is 100% MIT-licensed with no proprietary "Enterprise Edition" features. It's designed for individuals and self-hosters who want full control over their scheduling infrastructure without any commercial dependencies.

What's different from Cal.com?

- No enterprise features — Teams, Organizations, Insights, Workflows, SSO/SAML, and other EE-only features have been removed

- No license key required — Everything works out of the box, no Cal.com account or license needed

- 100% open source — The entire codebase is licensed under MIT, no "Open Core" split

- Community-maintained — Contributions are welcome and go directly into this project (see CONTRIBUTING.md)

Note: Cal.diy is a self-hosted project. There is no hosted/managed version. You run it on your own infrastructure.

Built With

Getting Started

To get a local copy up and running, please follow these simple steps.

Prerequisites

Here is what you need to be able to run Cal.diy.

- Node.js (Version: >=18.x)

- PostgreSQL (Version: >=13.x)

- Yarn (recommended)

If you want to enable any of the available integrations, you may want to obtain additional credentials for each one. More details on this can be found below under the integrations section.
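The Node.js requirement above can be checked from a terminal before installing anything. The sketch below is illustrative only: major_at_least is a hypothetical helper name, not something the project ships, and it compares major versions only.

```shell
# Hypothetical helper: succeeds when the major version of $1 is >= $2.
# Accepts strings such as "v18.19.0" (node --version style) or "13.4".
major_at_least() {
  version="${1#v}"        # drop a leading "v" if present
  major="${version%%.*}"  # keep everything before the first dot
  [ "$major" -ge "$2" ]
}

# Usage: compare the installed Node.js against the minimum listed above.
if command -v node >/dev/null 2>&1; then
  if major_at_least "$(node --version)" 18; then
    echo "Node.js version OK"
  else
    echo "Node.js 18 or newer is required" >&2
  fi
fi
```

The same helper works for a bare PostgreSQL version number such as 13.4, but it does not parse the full output of psql --version.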

Development

Setup

Clone the repo (or fork https://github.com/calcom/cal.diy/fork)

git clone https://github.com/calcom/cal.diy.git

If you are on Windows, run the following command in Git Bash with admin privileges:

git clone -c core.symlinks=true https://github.com/calcom/cal.diy.git

Go to the project folder

cd cal.diy

Install packages with yarn

yarn

Set up your .env file

- Duplicate .env.example to .env

- Use openssl rand -base64 32 to generate a key and add it under NEXTAUTH_SECRET in the .env file.

- Use openssl rand -base64 24 to generate a key and add it under CALENDSO_ENCRYPTION_KEY in the .env file.

Windows users: Replace the packages/prisma/.env symlink with a real copy to avoid a Prisma error (unexpected character / in variable name):

# Git Bash / WSL
rm packages/prisma/.env && cp .env packages/prisma/.env
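The two key-generation steps above can be combined into one short script. This is a minimal sketch that assumes the openssl CLI is on your PATH. It prints the values as .env lines rather than editing the file, so you can review them before pasting.

```shell
# Generate both secrets with the commands given in the steps above.
NEXTAUTH_SECRET="$(openssl rand -base64 32)"
CALENDSO_ENCRYPTION_KEY="$(openssl rand -base64 24)"

# 24 random bytes encode to exactly 32 base64 characters, which matches
# the 32-byte key length required for AES256.
echo "NEXTAUTH_SECRET=${NEXTAUTH_SECRET}"
echo "CALENDSO_ENCRYPTION_KEY=${CALENDSO_ENCRYPTION_KEY}"
echo "encryption key length: ${#CALENDSO_ENCRYPTION_KEY}"
```

The script writes secrets to the terminal, so avoid running it where output is logged or shared.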

Set up Node

If your Node version does not meet the project's requirements as instructed by the docs, nvm (Node Version Manager) lets you use Node at the version required by the project:

nvm use

You might first need to install the specific version and then use it:

nvm install && nvm use

You can install nvm from https://github.com/nvm-sh/nvm.

Quick start with yarn dx

- Requires Docker and Docker Compose to be installed

- Will start a local Postgres instance with a few test users - the credentials will be logged in the console

yarn dx

Default credentials created:

| Email | Password | Role |
|---|---|---|
| free@example.com | free | Free user |
| pro@example.com | pro | Pro user |
| trial@example.com | trial | Trial user |
| admin@example.com | ADMINadmin2022! | Admin user |
| onboarding@example.com | onboarding | Onboarding incomplete |

You can use any of these credentials to sign in at http://localhost:3000

Tip: To view the full list of seeded users and their details, run yarn db-studio and visit http://localhost:5555

Development tip

Add export NODE_OPTIONS="--max-old-space-size=16384" to your shell script to increase the memory limit for the node process. Alternatively, you can run this in your terminal before running the app. Replace 16384 with the amount of RAM (in MB) you want to allocate to the node process.

Add NEXT_PUBLIC_LOGGER_LEVEL={level} to your .env file to control the logging verbosity for all tRPC queries and mutations, where {level} can be one of the following:

- 0 for silly

- 1 for trace

- 2 for debug

- 3 for info

- 4 for warn

- 5 for error

- 6 for fatal

Setting NEXT_PUBLIC_LOGGER_LEVEL={level} in your .env file enables logging at that level and higher. For example:

- If you set NEXT_PUBLIC_LOGGER_LEVEL=2, levels 2 (debug), 3 (info), 4 (warn), 5 (error), and 6 (fatal) will be logged.

- If you set NEXT_PUBLIC_LOGGER_LEVEL=3, levels 3 (info), 4 (warn), 5 (error), and 6 (fatal) will be logged, but level 2 (debug) and level 1 (trace) will be ignored.

For example, to set the logger level to info:

echo 'NEXT_PUBLIC_LOGGER_LEVEL=3' >> .env
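The "that level and higher" rule can be checked with a few lines of shell. This is only an illustration of the threshold logic described above, not the logger Cal.diy uses. The function name logged_levels is hypothetical.

```shell
# Hypothetical helper: print the names of all levels that are logged for a
# given NEXT_PUBLIC_LOGGER_LEVEL (that level and everything above it).
logged_levels() {
  min="$1"
  i=0
  out=""
  # Names are listed in order of their numeric value, 0 through 6.
  for name in silly trace debug info warn error fatal; do
    if [ "$i" -ge "$min" ]; then
      out="${out:+$out }$name"
    fi
    i=$((i + 1))
  done
  echo "$out"
}

logged_levels 2   # prints: debug info warn error fatal
logged_levels 3   # prints: info warn error fatal
```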

Gitpod Setup

Click the button below to open this project in Gitpod.

This will open a fully configured workspace in your browser with all the necessary dependencies already installed.

Manual setup

Configure environment variables in the

.envfile. Replace<user>,<pass>,<db-host>, and<db-port>with their applicable valuesDATABASE_URL='postgresql://<user>:<pass>@<db-host>:<db-port>'If you don't know how to configure the DATABASE_URL, then follow the steps here to create a quick local DB

Download and install postgres in your local (if you don't have it already).

Create your own local db by executing

createDB <DB name>Now open your psql shell with the DB you created:

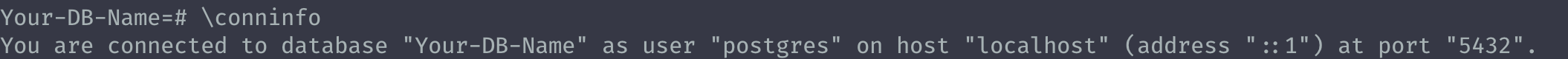

psql -h localhost -U postgres -d <DB name>Inside the psql shell execute

\conninfo. And you will get the following info.

Now extract all the info and add it to your DATABASE_URL. The url would look something like this

postgresql://postgres:postgres@localhost:5432/Your-DB-Name. The port is configurable and does not have to be 5432.

If you don't want to create a local DB. Then you can also consider using services like railway.app, Northflank or render.
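Assembling the URL from the values reported by \conninfo is easy to get wrong by hand, so a small helper can do it. This is a sketch only: build_database_url is a hypothetical function, and the values passed to it are the placeholder ones from the example URL above.

```shell
# Hypothetical helper: assemble a PostgreSQL connection URL from its parts.
# Arguments: user, password, host, port, database name.
build_database_url() {
  printf 'postgresql://%s:%s@%s:%s/%s\n' "$1" "$2" "$3" "$4" "$5"
}

# Placeholder values from the example above; replace them with your own.
DATABASE_URL="$(build_database_url postgres postgres localhost 5432 Your-DB-Name)"
echo "DATABASE_URL='${DATABASE_URL}'"
```

The helper does no escaping, so a password containing characters such as @, : or / would need to be percent-encoded before being passed in.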

Copy and paste your DATABASE_URL from .env to .env.appStore.

Set up the database using the Prisma schema (found in packages/prisma/schema.prisma)

In a development environment, run:

yarn workspace @calcom/prisma db-migrate

In a production environment, run:

yarn workspace @calcom/prisma db-deploy

Run mailhog to view emails sent during development

NOTE: Required when E2E_TEST_MAILHOG_ENABLED is "1"

docker pull mailhog/mailhog
docker run -d -p 8025:8025 -p 1025:1025 mailhog/mailhog

Run (in development mode)

yarn dev

Setting up your first user

Approach 1

Open Prisma Studio to look at or modify the database content:

yarn db-studio

Click on the User model to add a new user record.

Fill out the fields email, username, password, and set metadata to empty {} (remembering to hash your password with BCrypt) and click Save 1 Record to create your first user.

New users are set on a TRIAL plan by default. You might want to adjust this behavior to your needs in the packages/prisma/schema.prisma file.

Open a browser to http://localhost:3000 and log in with your newly created first user.

Approach 2

Seed the local db by running

cd packages/prisma

yarn db-seed

The above command will populate the local db with dummy users.

E2E-Testing

Be sure to set the environment variable NEXTAUTH_URL to the correct value. If you are running locally, as the documentation within .env.example mentions, the value should be http://localhost:3000.

# In a terminal just run:

yarn test-e2e

# To open the last HTML report run:

yarn playwright show-report test-results/reports/playwright-html-report

Resolving issues

E2E test browsers not installed

Run npx playwright install to download test browsers and resolve the error below when running yarn test-e2e:

Executable doesn't exist at /Users/alice/Library/Caches/ms-playwright/chromium-1048/chrome-mac/Chromium.app/Contents/MacOS/Chromium

Upgrading from earlier versions

Pull the current version:

git pull

Check if dependencies got added/updated/removed

yarn

Apply database migrations by running one of the following commands:

In a development environment, run:

yarn workspace @calcom/prisma db-migrate

(This can clear your development database in some cases)

In a production environment, run:

yarn workspace @calcom/prisma db-deploy

Check for .env variable changes

yarn predev

Start the server. In a development environment, just do:

yarn dev

For a production build, run for example:

yarn build
yarn start

Enjoy the new version.

Deployment

Docker

The Docker image can be found on DockerHub at https://hub.docker.com/r/calcom/cal.diy.

Note for ARM Users: Use the {version}-arm suffix for pulling images. Example: docker pull calcom/cal.diy:v5.6.19-arm.
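Choosing between the plain and the -arm tag can be scripted. The sketch below uses the version from the example above. The helper name image_tag is hypothetical, and it only treats arm64 and aarch64 (the values uname -m typically reports on 64-bit ARM) as ARM.

```shell
# Hypothetical helper: append the -arm suffix on 64-bit ARM machines.
# Arguments: version tag, machine architecture (as printed by uname -m).
image_tag() {
  case "$2" in
    arm64|aarch64) echo "$1-arm" ;;
    *)             echo "$1" ;;
  esac
}

# Print the pull command for the current machine instead of running it.
TAG="$(image_tag v5.6.19 "$(uname -m)")"
echo "docker pull calcom/cal.diy:${TAG}"
```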

Requirements

Make sure you have docker & docker compose installed on the server / system. Both are installed by most docker utilities, including Docker Desktop and Rancher Desktop.

Note: docker compose without the hyphen is now the primary method of using docker-compose, per the Docker documentation.

Running Cal.diy with Docker Compose

Clone the repository

git clone --recursive https://github.com/calcom/cal.diy.git

Change into the directory

cd cal.diy

Prepare your configuration: Copy .env.example to .env and then update .env

cp .env.example .env

Most configurations can be left as-is, but for configuration options see Important Run-time variables below.

Required Secret Keys

Before starting, you must generate secure values for NEXTAUTH_SECRET and CALENDSO_ENCRYPTION_KEY. Using the default secret placeholder in production is a security risk.

Generate NEXTAUTH_SECRET (cookie encryption key):

openssl rand -base64 32

Generate CALENDSO_ENCRYPTION_KEY (must be 32 bytes for AES256):

openssl rand -base64 24

Update your .env file with these values:

NEXTAUTH_SECRET=<your_generated_secret>
CALENDSO_ENCRYPTION_KEY=<your_generated_key>

Push Notifications (VAPID Keys)

If you see an error like:

Error: No key set vapidDetails.publicKey

This means your environment variables for Web Push are missing. You must generate and set NEXT_PUBLIC_VAPID_PUBLIC_KEY and VAPID_PRIVATE_KEY.

Generate them with:

npx web-push generate-vapid-keys

Then update your .env file:

NEXT_PUBLIC_VAPID_PUBLIC_KEY=your_public_key_here
VAPID_PRIVATE_KEY=your_private_key_here

Do not commit real keys to .env.example; use placeholders only.

Update the appropriate values in your .env file, then proceed.
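Before starting the stack, it can help to confirm that neither required secret is missing or still set to the default placeholder. The check below is a rough sketch: secret_is_set is a hypothetical helper, and it assumes plain NAME=value lines without surrounding quotes.

```shell
# Hypothetical check: succeeds only when the variable is present in the
# env file and is not the default "secret" placeholder.
# Arguments: path to the env file, variable name.
secret_is_set() {
  value="$(grep -E "^$2=" "$1" | tail -n 1 | cut -d= -f2-)"
  [ -n "$value" ] && [ "$value" != "secret" ]
}

# Usage: warn about each required secret that still needs a real value.
for name in NEXTAUTH_SECRET CALENDSO_ENCRYPTION_KEY; do
  if [ -f .env ] && ! secret_is_set .env "$name"; then
    echo "$name is missing or still set to the placeholder" >&2
  fi
done
```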

(optional) Pre-Pull the images by running the following command:

docker compose pull

Start Cal.diy via docker compose

To run the complete stack, which includes a local Postgres database, Cal.diy web app, and Prisma Studio:

docker compose up -d

To run Cal.diy web app and Prisma Studio against a remote database, ensure that DATABASE_URL is configured for an available database and run:

docker compose up -d calcom studio

To run only the Cal.diy web app, ensure that DATABASE_URL is configured for an available database and run:

docker compose up -d calcom

Note: to run in attached mode for debugging, remove -d from your desired run command.

Open a browser to http://localhost:3000, or your defined NEXT_PUBLIC_WEBAPP_URL. The first time you run Cal.diy, a setup wizard will initialize. Define your first user, and you're ready to go!

Note for first-time setup (Calendar integration): During the setup wizard, you may encounter a "Connect your Calendar" step that appears to be required. If you do not wish to connect a calendar at this time, you can skip this step by navigating directly to the dashboard at <NEXT_PUBLIC_WEBAPP_URL>/event-types. Calendar integrations can be added later from the Settings > Integrations page.

Updating Cal.diy

Stop the Cal.diy stack

docker compose down

Pull the latest changes

docker compose pull

Update env vars as necessary.

Re-start the Cal.diy stack

docker compose up -d

Building from source with Docker

Clone the repository

git clone https://github.com/calcom/cal.diy.git

Change into the directory

cd cal.diy

Rename .env.example to .env and then update .env

For configuration options see Build-time variables below. Update the appropriate values in your .env file, then proceed.

Build the Cal.diy docker image:

Note: Due to application configuration requirements, an available database is currently required during the build process.

a) If hosting elsewhere, configure the DATABASE_URL in the .env file, and skip the next step

b) If a local or temporary database is required, start a local database via docker compose.

docker compose up -d database

Build Cal.diy via docker compose (DOCKER_BUILDKIT=0 must be provided to allow a network bridge to be used at build time. This requirement will be removed in the future)

DOCKER_BUILDKIT=0 docker compose build calcom

Start Cal.diy via docker compose

To run the complete stack, which includes a local Postgres database, Cal.diy web app, and Prisma Studio:

docker compose up -d

To run Cal.diy web app and Prisma Studio against a remote database, ensure that DATABASE_URL is configured for an available database and run:

docker compose up -d calcom studio

To run only the Cal.diy web app, ensure that DATABASE_URL is configured for an available database and run:

docker compose up -d calcom

Note: to run in attached mode for debugging, remove -d from your desired run command.

Open a browser to http://localhost:3000, or your defined NEXT_PUBLIC_WEBAPP_URL. The first time you run Cal.diy, a setup wizard will initialize. Define your first user, and you're ready to go!

Configuration

Important Run-time variables

These variables must also be provided at runtime

| Variable | Description | Required | Default |
|---|---|---|---|
| DATABASE_URL | Database URL with credentials. If using a connection pooler, this setting should point there. | required | postgresql://unicorn_user:magical_password@database:5432/calendso |
| NEXT_PUBLIC_WEBAPP_URL | Base URL of the site. NOTE: if this value differs from the value used at build-time, there will be a slight delay during container start (to update the statically built files). | optional | http://localhost:3000 |
| NEXTAUTH_URL | Location of the auth server. By default, this is the Cal.diy docker instance itself. | optional | {NEXT_PUBLIC_WEBAPP_URL}/api/auth |
| NEXTAUTH_SECRET | Cookie encryption key. Must match build variable. Generate with: openssl rand -base64 32 | required | secret |
| CALENDSO_ENCRYPTION_KEY | Authentication encryption key (32 bytes for AES256). Must match build variable. Generate with: openssl rand -base64 24 | required | secret |
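The NEXTAUTH_URL default in the table is derived from NEXT_PUBLIC_WEBAPP_URL. The sketch below shows that derivation in shell for illustration. It is not the application's own code, default_nextauth_url is a hypothetical name, and cal.example.com is a placeholder host.

```shell
# Hypothetical helper: derive the default NEXTAUTH_URL from the web app URL.
# Argument: NEXT_PUBLIC_WEBAPP_URL (falls back to the documented default).
default_nextauth_url() {
  webapp_url="${1:-http://localhost:3000}"
  # Strip one trailing slash so the result never contains "//api".
  echo "${webapp_url%/}/api/auth"
}

default_nextauth_url                            # prints: http://localhost:3000/api/auth
default_nextauth_url "https://cal.example.com/" # prints: https://cal.example.com/api/auth
```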

Build-time variables

If building the image yourself, these variables must be provided at the time of the docker build, and can be provided by updating the .env file. Currently, if you require changes to these variables, you must follow the instructions to build and publish your own image.

| Variable | Description | Required | Default |
|---|---|---|---|
| DATABASE_URL | Database URL with credentials. If using a connection pooler, this setting should point there. | required | postgresql://unicorn_user:magical_password@database:5432/calendso |
| MAX_OLD_SPACE_SIZE | Needed for Nodejs/NPM build options | required | 4096 |
| NEXTAUTH_SECRET | Cookie encryption key | required | secret |
| CALENDSO_ENCRYPTION_KEY | Authentication encryption key | required | secret |
| NEXT_PUBLIC_WEBAPP_URL | Base URL injected into static files | optional | http://localhost:3000 |
| NEXT_PUBLIC_WEBSITE_TERMS_URL | Custom URL for terms and conditions website | optional | |
| NEXT_PUBLIC_WEBSITE_PRIVACY_POLICY_URL | Custom URL for privacy policy website | optional | |
| CALCOM_TELEMETRY_DISABLED | Allow Cal.diy to collect anonymous usage data (set to 1 to disable) | optional | |

Troubleshooting

SSL edge termination

If running behind a load balancer which handles SSL certificates, you will need to add the environmental variable NODE_TLS_REJECT_UNAUTHORIZED=0 to prevent requests from being rejected. Only do this if you know what you are doing and trust the services/load-balancers directing traffic to your service.

Failed to commit changes: Invalid 'prisma.user.create()'

Certain versions may have trouble creating a user if the field metadata is empty. Using an empty json object {} as the field value should resolve this issue. Also, the id field will autoincrement, so you may also try leaving the value of id as empty.

CLIENT_FETCH_ERROR

If you experience this error, it may be caused by the way the default Auth callback on the server uses WEBAPP_URL as a base URL. The container does not necessarily have access to the same DNS as your local machine, and therefore needs to be configured to resolve to itself. You may be able to correct this by setting NEXTAUTH_URL=http://localhost:3000/api/auth, to help the backend loop back to itself.

docker-calcom-1 | @calcom/web:start: [next-auth][error][CLIENT_FETCH_ERROR]

docker-calcom-1 | @calcom/web:start: https://next-auth.js.org/errors#client_fetch_error request to http://testing.localhost:3000/api/auth/session failed, reason: getaddrinfo ENOTFOUND testing.localhost {

docker-calcom-1 | @calcom/web:start: error: {

docker-calcom-1 | @calcom/web:start: message: 'request to http://testing.localhost:3000/api/auth/session failed, reason: getaddrinfo ENOTFOUND testing.localhost',

docker-calcom-1 | @calcom/web:start: stack: 'FetchError: request to http://testing.localhost:3000/api/auth/session failed, reason: getaddrinfo ENOTFOUND testing.localhost\n' +

docker-calcom-1 | @calcom/web:start: ' at ClientRequest.<anonymous> (/calcom/node_modules/next/dist/compiled/node-fetch/index.js:1:65756)\n' +

docker-calcom-1 | @calcom/web:start: ' at ClientRequest.emit (node:events:513:28)\n' +

docker-calcom-1 | @calcom/web:start: ' at ClientRequest.emit (node:domain:489:12)\n' +

docker-calcom-1 | @calcom/web:start: ' at Socket.socketErrorListener (node:_http_client:494:9)\n' +

docker-calcom-1 | @calcom/web:start: ' at Socket.emit (node:events:513:28)\n' +

docker-calcom-1 | @calcom/web:start: ' at Socket.emit (node:domain:489:12)\n' +

docker-calcom-1 | @calcom/web:start: ' at emitErrorNT (node:internal/streams/destroy:157:8)\n' +

docker-calcom-1 | @calcom/web:start: ' at emitErrorCloseNT (node:internal/streams/destroy:122:3)\n' +

docker-calcom-1 | @calcom/web:start: ' at processTicksAndRejections (node:internal/process/task_queues:83:21)',

docker-calcom-1 | @calcom/web:start: name: 'FetchError'

docker-calcom-1 | @calcom/web:start: },

docker-calcom-1 | @calcom/web:start: url: 'http://testing.localhost:3000/api/auth/session',

docker-calcom-1 | @calcom/web:start: message: 'request to http://testing.localhost:3000/api/auth/session failed, reason: getaddrinfo ENOTFOUND testing.localhost'

docker-calcom-1 | @calcom/web:start: }

Railway

You can deploy Cal.diy on Railway. The team at Railway also have a detailed blog post on deploying on their platform.

Northflank

You can deploy Cal.diy on Northflank. The team at Northflank also have a detailed blog post on deploying on their platform.

Vercel

Currently, the Vercel Pro Plan is required to deploy this application on Vercel, due to limitations on the number of serverless functions on the free plan.

Render

Elestio

License

Cal.diy is fully open source, licensed under the MIT License.

Unlike Cal.com's "Open Core" model, Cal.diy has no commercial/enterprise code. The entire codebase is available under the same open-source license.

Enabling Content Security Policy

- Set the CSP_POLICY="non-strict" env variable, which enables Strict CSP except for unsafe-inline in style-src. If you have custom changes in your instance, you might have to change some code to make your instance CSP compatible. Right now it enables strict CSP only on the login page; on other SSR pages it is enabled in Report-only mode to detect possible issues. On SSG pages it is not yet supported.

Integrations

Obtaining the Google API Credentials

- Open Google API Console. If you don't have a project in your Google Cloud subscription, you'll need to create one before proceeding further. Under Dashboard pane, select Enable APIS and Services.

- In the search box, type calendar and select the Google Calendar API search result.

- Enable the selected API.

- Next, go to the OAuth consent screen from the side pane. Select the app type (Internal or External) and enter the basic app details on the first page.

- On the second page (Scopes), select Add or Remove Scopes. Search for Calendar.event and select the scopes with the values .../auth/calendar.events and .../auth/calendar.readonly, then select Update.

- On the third page (Test Users), add the Google account(s) you'll be using. Make sure the details are correct on the last page of the wizard and your consent screen will be configured.

- Now select Credentials from the side pane and then select Create Credentials. Select the OAuth Client ID option.

- Select Web Application as the Application Type.

- Under Authorized redirect URIs, select Add URI and then add the URIs <Cal.diy URL>/api/integrations/googlecalendar/callback and <Cal.diy URL>/api/auth/callback/google, replacing Cal.diy URL with the URI at which your application runs.

- The key will be created and you will be redirected back to the Credentials page. Select the newly generated client ID under OAuth 2.0 Client IDs.

- Select Download JSON. Copy the contents of this file and paste the entire JSON string in the .env file as the value for the GOOGLE_API_CREDENTIALS key.
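The integration redirect URI used in the steps above follows the shape <Cal.diy URL>/api/integrations/<integration>/callback. A short helper can print it for your instance so it can be pasted into the provider console. This is a sketch: integration_callback_url is a hypothetical name and http://localhost:3000 is only the local default.

```shell
# Hypothetical helper: build an integration callback URL.
# Arguments: base URL of the Cal.diy instance, integration path segment.
integration_callback_url() {
  # Strip one trailing slash from the base URL before joining.
  echo "${1%/}/api/integrations/$2/callback"
}

# prints: http://localhost:3000/api/integrations/googlecalendar/callback
integration_callback_url "http://localhost:3000" googlecalendar
```

The second Google redirect URI, <Cal.diy URL>/api/auth/callback/google, does not follow this pattern and still has to be added separately.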

Adding Google Calendar to Cal.diy App Store

After adding Google credentials, you can now add the Google Calendar App to the app store. You can repopulate the App store by running

cd packages/prisma

yarn seed-app-store

You will need to complete a few more steps to activate Google Calendar App. Make sure to complete section "Obtaining the Google API Credentials". After that do the following

- Add extra redirect URL <Cal.diy URL>/api/auth/callback/google

- Under 'OAuth consent screen', click "PUBLISH APP"

Obtaining Microsoft Graph Client ID and Secret

- Open Azure App Registration and select New registration

- Name your application

- Set Who can use this application or access this API? to Accounts in any organizational directory (Any Azure AD directory - Multitenant)

- Set the Web redirect URI to <Cal.diy URL>/api/integrations/office365calendar/callback, replacing Cal.diy URL with the URI at which your application runs.

- Use Application (client) ID as the MS_GRAPH_CLIENT_ID attribute value in .env

- Click Certificates & secrets, create a new client secret, and use the value as the MS_GRAPH_CLIENT_SECRET attribute

Obtaining Zoom Client ID and Secret

- Open Zoom Marketplace and sign in with your Zoom account.

- On the upper right, click "Develop" => "Build App".

- Select "General App" , click "Create".

- Name your App.

- Choose "User-managed app" for "Select how the app is managed".

- De-select the option to publish the app on the Zoom App Marketplace, if asked.

- Now copy the Client ID and Client Secret to your .env file into the ZOOM_CLIENT_ID and ZOOM_CLIENT_SECRET fields.

- Set the "OAuth Redirect URL" under "OAuth Information" as <Cal.diy URL>/api/integrations/zoomvideo/callback, replacing Cal.diy URL with the URI at which your application runs.

- Also add the redirect URL given above as an allow list URL and enable "Subdomain check". Make sure it says "saved" below the form.

- You don't need to provide basic information about your app. Instead click on "Scopes" and then on "+ Add Scopes". On the left,

  - click the category "Meeting" and check the scope meeting:write:meeting.

  - click the category "User" and check the scope user:read:settings.

- Click "Done".

- You're good to go. Now you can easily add your Zoom integration in the Cal.diy settings.

Obtaining Daily API Credentials

- Open Daily.co and create an account.

- From within your dashboard, go to the developers tab.

- Copy your API key.

- Now paste the API key into the DAILY_API_KEY field in your .env file.

- If you have the Daily Scale Plan, set the DAILY_SCALE_PLAN variable to true in order to use features like video recording.

Obtaining Basecamp Client ID and Secret

- Visit the 37 Signals Integrations Dashboard and sign in.

- Register a new application by clicking the Register one now link.

- Fill in your company details.

- Select Basecamp 4 as the product to integrate with.

- Set the Redirect URL for OAuth to <Cal.diy URL>/api/integrations/basecamp3/callback, replacing Cal.diy URL with the URI at which your application runs.

- Click on done and copy the Client ID and secret into the BASECAMP3_CLIENT_ID and BASECAMP3_CLIENT_SECRET fields.

- Set the BASECAMP3_CLIENT_SECRET env variable to {your_domain} ({support_email}).

Obtaining HubSpot Client ID and Secret

- Open HubSpot Developer and sign into your account, or create a new one.

- From within the home of the Developer account page, go to "Manage apps".

- Click "Create legacy app" button top right and select public app.

- Fill in any information you want in the "App info" tab

- Go to tab "Auth"

- Now copy the Client ID and Client Secret to your .env file into the HUBSPOT_CLIENT_ID and HUBSPOT_CLIENT_SECRET fields.

- Set the Redirect URL for OAuth to <Cal.diy URL>/api/integrations/hubspot/callback, replacing Cal.diy URL with the URI at which your application runs.

- In the "Scopes" section at the bottom of the page, make sure you select "Read" and "Write" for the scopes called crm.objects.contacts and crm.lists.

- Click the "Save" button at the bottom footer.

- You're good to go. Now you can see any booking in Cal.diy created as a meeting in HubSpot for your contacts.

Obtaining Webex Client ID and Secret

Obtaining ZohoCRM Client ID and Secret

- Open Zoho API Console and sign into your account, or create a new one.

- From within the API console page, go to "Applications".

- Click "ADD CLIENT" button top right and select "Server-based Applications".

- Fill in any information you want in the "Client Details" tab

- Go to the "Client Secret" tab.

- Now copy the Client ID and Client Secret to your .env file into the ZOHOCRM_CLIENT_ID and ZOHOCRM_CLIENT_SECRET fields.

- Set the Redirect URL for OAuth to <Cal.diy URL>/api/integrations/zohocrm/callback, replacing Cal.diy URL with the URI at which your application runs.

- In the "Settings" section check the "Multi-DC" option if you wish to use the same OAuth credentials for all data centers.

- Click the "Save"/"UPDATE" button at the bottom footer.

- You're good to go. Now you can easily add your ZohoCRM integration in the Cal.diy settings.

Obtaining Zoho Calendar Client ID and Secret

Obtaining Zoho Bigin Client ID and Secret

Obtaining Pipedrive Client ID and Secret

Rate Limiting with Unkey

Cal.diy uses Unkey for rate limiting. This is an optional feature and is not required for self-hosting.

If you want to enable rate limiting:

- Sign up for an account at unkey.com

- Create a Root key with permissions for ratelimit.create_namespace and ratelimit.limit

- Copy the root key to your .env file into the UNKEY_ROOT_KEY field

Note: If you don't configure Unkey, Cal.diy will work normally without rate limiting enabled.

Contributing

We welcome contributions! Whether it's fixing a typo, improving documentation, or building new features, your help makes Cal.diy better.

Important: Cal.diy is a community fork. Contributions to this repo do not flow to Cal.com's production platform. See CONTRIBUTING.md for details.

- Check out our Contributing Guide for detailed steps.

- Join the discussion on GitHub Discussions.

- Please follow our coding standards and commit message conventions to keep the project consistent.

Even small improvements matter — thank you for helping us grow!

Good First Issues

We have a list of help wanted issues that contain small features and bugs with a relatively limited scope. This is a great place to get started, gain experience, and get familiar with our contribution process.

Contributors

Translations

Don't code but still want to contribute? Join our Discussions and help translate Cal.diy into your language.

Acknowledgements

Cal.diy is built on the foundation created by Cal.com and the many contributors to the original project. Special thanks to: