Yeah yeah, I know, data-point of 1.

I recently read Susam's blog post where they said that "most of the traffic to my personal website still comes from web feeds" - I wondered if that was true for my site.

I've been writing this blog for a while. I've never much bothered with "aggressive" SEO - I have a fairly semantic layout, all my reviews have metadata, and stuff like that - but I'm not cramming in keywords, using AMP, or whatever other chickens Google requires to be sacrificed for a higher ranking. Nevertheless, I do OK.

Last year, I added a bit of local-only, lightweight statistics-gathering to my blog. I can see which sites people click on to reach mine. Google is right up the top, DuckDuckGo is surprisingly high, Bing is lucky to crack the top 20 on any day. Similarly, I can see how much traffic I get from the Fediverse and BlueSky (Twitter has all but vanished).

A few weeks ago I added RSS and Newsletter tracking. These data are very lossy. If someone is subscribed to my RSS feed and opens a post and their client downloads a lazy-loaded image at the end of the post, I get a hit. For email it's broadly the same. If an email is opened and the tracker image is loaded, I get a hit (although Gmail does obfuscate that somewhat).

I'm not looking for super-accurate numbers (although I do block as many AI crawlers and bots as possible). I'm not creepily following people around the web nor am I trying to sell them anything. I just want a rough idea of where people find me.

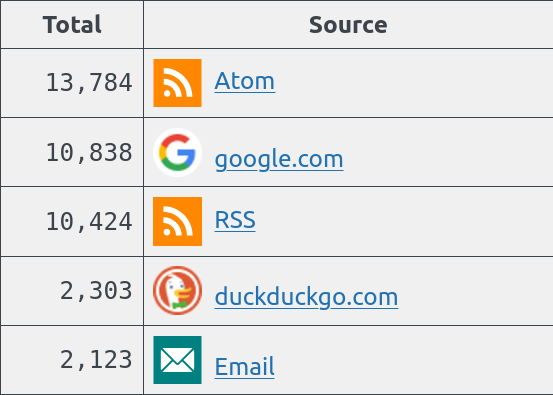

Here are my blog's views for the last 28 days.

Some months I get a surge of hits from link aggregators like HN or Reddit. Sometimes I'm linked to from a popular site or cited in academic work. But most of the time I bumble along getting hits from here, there, and everywhere. Nevertheless, it's lovely to see so many people choosing to subscribe (for free!) and astonishing that they provide more traffic than a major search engine.

Obviously, these are two very different types of traffic. People who are searching for a specific thing and stumble upon my blog are different from those who decide to like and subscribe.

But, yeah, about 25% of my traffic comes from people who have chosen to subscribe.

I'm just delighted that so many people read my random thoughts.