published 5/6/2026

OpenAI posted a technical blog a few days ago. This blog post triggered me more than it should have. I feel the urge to slap my meaty fingers on the keyboard.

You should NOT copy OpenAI.

I don’t think you should use WebRTC for voice AI. WebRTC is the problem.

Me

Like 6 years ago I wrote a WebRTC SFU at Twitch. Originally we used Pion (Go) just like OpenAI, but forked after benchmarking revealed that it was too slow. I ended up rewriting every protocol, because of course I did!

Just a year ago, I was at Discord and I rewrote the WebRTC SFU in Rust. Because of course I did! You’re probably noticing a trend.

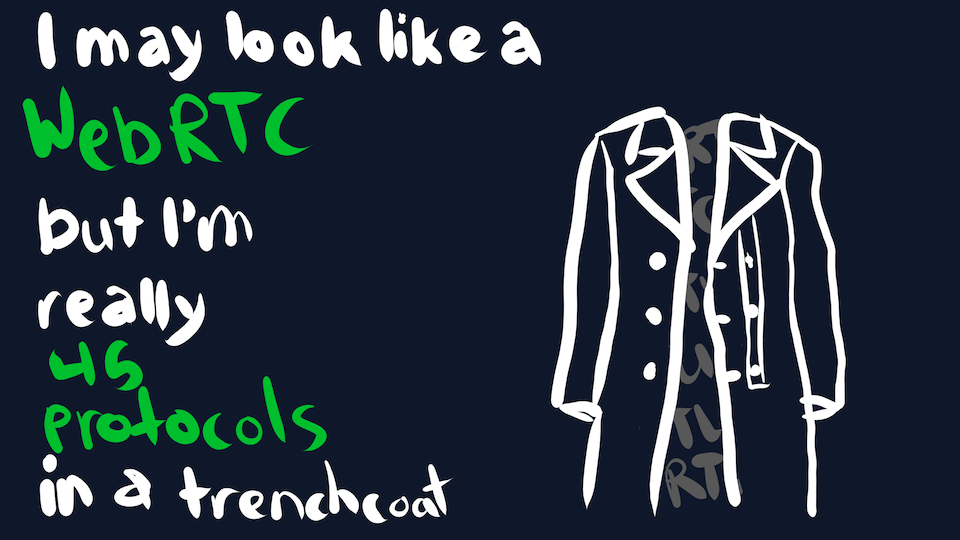

Fun Fact: WebRTC consists of ~45 RFCs dating back to the early 2000s. And some de-facto standards that are technically drafts (ex. TWCC, REMB). Not a fun fact when you have to implement them all.

You should consider me a Certified WebRTC Expert. Which is why I never, never want to use WebRTC again.

Product Fit

I’m going to cheat a little bit and start with the hot takes before they get cold. Don’t worry, we’ll get right back to talking about the OpenAI blog post and load balancing, I promise.

WebRTC is a poor fit for Voice AI.

But that seems counter-intuitive? WebRTC is for conferencing, and that involves speaking? And robots can speak, right?

WebRTC is too aggressive

Let’s say I pull up my OpenAI app on my phone. I say hi to ~~Scarlett Johansson~~ Sky and then I utter:

should I walk or drive to the car wash?

WebRTC is designed to degrade and drop my prompt during poor network conditions.

wtf my dude

WebRTC aggressively drops audio packets to keep latency low. If you’ve ever heard distorted audio on a conference call, that’s WebRTC baybee. The idea is that conference calls depend on rapid back-and-forth, so pausing to wait for audio is unacceptable.

…but as a user, I would much rather wait an extra 200ms for my slow/expensive prompt to be accurate. After all, I’m paying good money to boil the ocean, and a garbage prompt means a garbage response. It’s not like LLMs are particularly responsive anyway.

But I’m not allowed to wait. It’s impossible to even retransmit a WebRTC audio packet within a browser; we tried at Discord. The implementation is hard-coded for real-time latency or else.

UPDATE: Some WebRTC folks are claiming this is a skill issue. It might be possible to enable audio NACKs, but we couldn’t figure out the correct SDP munging. Either way, the WebRTC jitter buffer is aggressively small.

And yes, Voice AI agents will eventually get the latency down to the conversational range. But reducing latency has trade-offs. I’m not even sure that purposely degrading audio prompts will ever be worth it.

Two roads diverged in a yellow wood. And sorry I could not travel both. And be one traveler, long I stood. And looked down one as far as I could. Until I ran out of tokens.

TTS is faster than real-time

You speak into the microphone, it gets sent to one of OpenAI’s billion servers, and then a GPU pretends to talk to you via text-to-speech. Neato.

Let’s say it takes 2s of GPUs to generate 8s of audio. In an ideal world, we would stream the audio as it’s being generated (over 2s) and the client would start playing it back (over 8s). That way, if there’s a network blip, some audio is buffered locally. The user might not even notice the network blip.
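Here's a napkin-math sketch of that ideal world. The function name and the constant-rate assumption are mine; real TTS output is burstier and the network adds its own delay.

```python
def buffered_audio(t, gen_seconds=2.0, audio_seconds=8.0):
    """Seconds of audio sitting unplayed in the client buffer at time t.

    Assumes audio is generated and delivered at a constant rate over
    gen_seconds, and that playback starts at t=0 with zero network delay.
    """
    speedup = audio_seconds / gen_seconds        # 4x faster than real-time
    received = min(audio_seconds, t * speedup)   # audio delivered so far
    played = min(audio_seconds, t)               # audio already played back
    return max(0.0, received - played)
```

Under these assumptions, one second in the client has 3s of audio banked, and by the time generation finishes it has 6s. That is a lot of room to hide a network blip.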

But nope, WebRTC has no buffering and renders based on arrival time. Like seriously, timestamps are just suggestions. It’s even more annoying when video enters the picture.

To compensate for this, OpenAI has to make sure packets arrive exactly when they should be rendered. They need to add a sleep in front of every audio packet before sending it. But if there’s network congestion, oops we lost that audio packet and it’ll never be retransmitted.
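I haven't seen OpenAI's code, so treat this as my guess at what that sleep costs. The 20ms frame size and the 4x generation speed (from the 2s/8s example) are assumptions, and `artificial_delay_ms` is a made-up name.

```python
def artificial_delay_ms(index, frame_ms=20, speedup=4.0):
    """How long packet `index` sits on the server waiting for its turn.

    Assumes fixed-size audio frames and a generator that runs at a
    constant `speedup` times real-time.
    """
    ready_at = index * frame_ms / speedup   # when the GPU finished this frame
    sent_at = index * frame_ms              # when real-time pacing releases it
    return sent_at - ready_at
```

With those numbers, the packet that starts 2s into the response (index 100) is ready at 500ms but held until 2000ms. That's 1.5s during which it could have been safely sitting in a client-side buffer.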

OpenAI is literally introducing artificial latency, and then aggressively dropping packets to “keep latency low”. It’s the equivalent of screen sharing a YouTube video instead of buffering it. The quality will be degraded.

Thank you Mr Robot friend for the unambiguous advice

Fun fact: WebRTC actually adds latency. It’s not much, but WebRTC has a dynamic jitter buffer that can be sized anywhere from 20ms to 200ms (for audio). This is meant to smooth out network jitter, but none of this is needed if you transfer faster than real-time.

Ports Ports Ports

Okay but let’s talk about the technical meat of the OpenAI article. We’re no longer on a boat, but let’s talk about ports.

When you host a TCP server, you open a port (ex. 443 for HTTPS) and listen for incoming connections. The TCP client will randomly select an ephemeral port to use, and the connection is identified by the source/destination IP/ports. For example, a connection might be identified as 123.45.67.89:54321 -> 192.168.1.2:443.

But there’s a minor problem… client addresses can change. When your phone switches from WiFi to cellular, oops your IP changes. NATs can also arbitrarily change your source IP/port because of course they can.

Whenever this happens, bye bye connection, it’s time to dial a new one. And that means an expensive TCP + TLS handshake which takes at least 2-3 RTTs. The users definitely notice the network hiccup when you’re live streaming.
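A toy version of why the connection dies, assuming the server keeps a plain lookup table keyed on the 4-tuple. The names here (`FourTuple`, `accept`, `lookup`) are illustrative and not any real kernel API.

```python
from typing import NamedTuple

class FourTuple(NamedTuple):
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int

# Stand-in for the connection table a TCP stack keeps.
connections = {}

def accept(key, state):
    connections[key] = state

def lookup(key):
    # Exact match on all four fields; a changed source is a stranger.
    return connections.get(key)
```

Store a connection under `123.45.67.89:54321 -> 192.168.1.2:443`, change only the source IP, and `lookup` returns `None`. The server has no idea it's the same client.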

WebRTC tried to solve this issue but made things worse. Seriously.

A WebRTC implementation is supposed to allocate an ephemeral port for each connection. That way, a WebRTC session can be identified by the destination IP/port only; the source is irrelevant. If the source IP/port changes, oh hey that’s still Bob because the destination port is the same.

But as OpenAI corroborates, this causes issues at scale because…

- Servers only have a limited number of ports available.

- Firewalls love to block ephemeral ports.

- Kubernetes lul

You could probably abuse IPv6 to work around this, but IDK I never tried. Twitch didn’t even support IPv6…

Hacks by Necessity

So most services end up ignoring the WebRTC specifications. Because of course they do. We mux multiple connections onto a single port instead.

At Twitch I literally hosted my WebRTC server on UDP:443. That’s supposed to be the HTTPS/QUIC port, but lying meant we could get past more firewalls. Like the Amazon corporate network, which blocked all but ~30 ports.

Discord uses ports 50000-50032, one for each CPU core. As a result it gets blocked on more corporate networks. But like, if you’re on a Discord voice call on the Amazon corporate network, you probably won’t be there much longer anyway.

HOWEVER, HUGE PROBLEM.

WebRTC is actually a bunch of standards in a trenchcoat, and 5 of those go over UDP directly. It’s not hard to figure out which protocol a packet is using, but we need to figure out how to route each packet.

- STUN: We can choose a unique `ufrag` and route on it.

- SRTP/SRTCP: The browser chooses a random `ssrc` (u32)… which we can usually route based on.

- DTLS: Uh oh. We pray that RFC9146 gets widespread support.

- TURN: IDK I’ve never implemented it.
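The "figure out which protocol" half really is the easy part. Here's a minimal sketch based on the first-byte ranges from RFC 7983, plus the usual second-byte check to split RTCP from RTP. The function names are mine, and it skips all the validation a real server would want.

```python
import struct

def classify(packet: bytes) -> str:
    """Guess the protocol of a UDP payload from its first byte (RFC 7983)."""
    if not packet:
        return "unknown"
    b = packet[0]
    if b <= 3:
        return "stun"
    if 20 <= b <= 63:
        return "dtls"
    if 64 <= b <= 79:
        return "turn-channel"
    if 128 <= b <= 191:
        # RTP and RTCP share a range; RTCP packet types land in 192..223.
        if len(packet) > 1 and 192 <= packet[1] <= 223:
            return "rtcp"
        return "rtp"
    return "unknown"

def ssrc(packet: bytes):
    """Pull out the SSRC we'd route on, or None if there isn't one."""
    kind = classify(packet)
    if kind == "rtp" and len(packet) >= 12:
        return struct.unpack_from("!I", packet, 8)[0]   # bytes 8..11
    if kind == "rtcp" and len(packet) >= 8:
        return struct.unpack_from("!I", packet, 4)[0]   # sender SSRC, bytes 4..7
    return None
```

Notice what's missing: `classify` can say "this is DTLS", but there's nothing in that packet to say whose DTLS it is. That's the routing problem.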

So OpenAI only uses STUN:

No protocol termination: Relay parses only STUN headers/ufrag; it uses cached state for subsequent DTLS, RTP, and RTCP, keeping packets opaque.

It’s a positive way of saying:

We really hope the user’s source IP/port never changes, because we broke that functionality.

While it’s impressive load balancing anything at OpenAI scale, their custom load balancing is a hack. But a necessary hack, because the core protocol is at fault.

Personally, I would prefer 3 raccoons.

Fun fact: Browsers can randomly generate the same ssrc. If there is a collision, and no source IP/port mapping is available, Discord attempts to decrypt the packet with each possible decryption key. If the key worked, hey we identified the connection!
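A rough sketch of the "try every key" trick. This is not Discord's code and it's not real SRTP: I'm swapping in a bare HMAC-SHA1 tag check over the whole packet as a stand-in, where real SRTP derives session keys and mixes in a rollover counter. All names are hypothetical.

```python
import hashlib
import hmac

TAG_LEN = 10  # bytes; mirrors an 80-bit auth tag, chosen for illustration

def make_tag(key: bytes, body: bytes) -> bytes:
    return hmac.new(key, body, hashlib.sha1).digest()[:TAG_LEN]

def identify(packet: bytes, candidates: dict):
    """Return the connection whose key authenticates the packet, else None.

    `candidates` maps connection id -> key for every connection that
    shares the colliding ssrc.
    """
    body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
    for conn_id, key in candidates.items():
        if hmac.compare_digest(make_tag(key, body), tag):
            return conn_id
    return None
```

It's brute force, but the candidate list is only the handful of connections sharing that ssrc, so the cost stays small.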

Round Trips and U

The OpenAI blog post starts with 3 requirements. One of them is:

- Fast connection setup so a user can start speaking as soon as a session begins

lol

It takes a minimum of 8* round trips (RTT) to establish a WebRTC connection. While we try to run CDN edge nodes close enough to every user to minimize RTT, it adds up.

Signaling server (ex. WHIP):

- 1 for TCP

- 1 for TLS 1.3

- 1 for HTTP

Media server:

- 1 for ICE (with server)

- 2 for DTLS 1.2

- 2 for SCTP

* It’s complicated to compute, because some protocols can be pipelined to avoid 0.5 RTT. Kinda like half an A-Press.
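Tallying the list above, ignoring the pipelining asterisk. The 50ms RTT in the usage note is an arbitrary assumption, and I'm pretending the signaling and media servers are the same distance away.

```python
# Round trips per step, copied from the list above.
WEBRTC_ROUND_TRIPS = {
    "TCP": 1,
    "TLS 1.3": 1,
    "HTTP": 1,
    "ICE": 1,
    "DTLS 1.2": 2,
    "SCTP": 2,
}

def setup_ms(round_trips: dict, rtt_ms: float) -> float:
    """Time spent purely on handshakes before any media can flow."""
    return sum(round_trips.values()) * rtt_ms
```

At a 50ms RTT, `setup_ms(WEBRTC_ROUND_TRIPS, 50)` comes out to 400ms of waiting before the user can say a word.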

an obscure reference to an obscure reference

All of this nonsense is because WebRTC needs to support P2P. It doesn’t matter if you have a server with a static IP address, you still need to do this dance.

It’s extra depressing when the signaling and media server are running on the same host/process. You end up doing two redundant and expensive handshakes. It’s like walking AND driving your car to the car wash.

Forking the Protocol

Fun Fact: This was originally going to be a Fun Fact, but it gets its own section now.

WebRTC practically encourages you to fork the protocol. There are so many limitations that I’ve barely scratched the surface. The browser implementation is owned by Google and tailor-made for Google Meet, so it’s also an existential threat for conferencing apps.

Sad Fact: That’s why every conferencing app (except Google Meet) tries to shove a native app down your throat. It’s the only way to avoid using WebRTC.

OpenAI definitely has the debt funding to do this. But I think they should also throw the baby out with the bath water. Don’t fork WebRTC, replace it with something that has browser support.

Fun Fact: Discord has forked WebRTC so hard that native clients only implement a tiny fraction of the protocol. No more SDP/ICE/STUN/TURN/DTLS/SCTP/SRTP/etc. But we still have to implement everything for web clients.

But What Instead?

If not WebRTC, then what should you use for Voice AI?

Honestly, if I was working at OpenAI, I’d start by streaming audio over WebSockets. You can leverage existing TCP/HTTP infrastructure instead of inventing a custom WebRTC load balancer. It makes for a boring blog post, but it’s simple, works with Kubernetes, and SCALES.

I think head-of-line blocking is a desirable user experience, not a liability. But the fated day will come and dropping/prioritizing some packets will be necessary. Then I think OpenAI should copy MoQ and utilize WebTransport, because…

QUIC FIXES THIS

Remember the round trip discussion? Good times. Here’s how many RTTs it takes to establish a QUIC connection:

- 1 for QUIC+TLS

But that was an easy one. Let’s dive into the deeper details of QUIC that you wouldn’t know about unless you’re a turbo QUIC nerd (it me).

Connection ID

Remember that link to RFC9146? In the DTLS section? That you didn’t click? Good times. The idea is literally copied from QUIC.

QUIC ditches source IP/port based routing. Instead, every packet contains a CONNECTION_ID, which can be 0-20 bytes long. And most importantly for us: it’s chosen by the receiver.

So our QUIC server generates a unique CONNECTION_ID for each connection. Now we can use a single port and still figure out when the source IP/port changes. When it does, QUIC automatically switches to the new address instead of severing the connection like TCP.
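Here's roughly what that lookup looks like for a short-header packet, where the connection ID sits right after the first byte. It's a toy: `CID_LEN`, `Connection`, and `on_short_header_packet` are names I made up, and a real QUIC stack validates the new path before trusting it.

```python
CID_LEN = 8  # our server's choice; QUIC allows 0-20 bytes

class Connection:
    def __init__(self, cid: bytes, addr):
        self.cid = cid
        self.addr = addr  # last known (ip, port) of the client

# One UDP port, many connections, keyed by the CID we handed out.
connections = {}

def on_short_header_packet(packet: bytes, src_addr):
    """Find the connection by CID and follow the client to a new address."""
    cid = packet[1:1 + CID_LEN]   # 1 flags byte, then the destination CID
    conn = connections.get(cid)
    if conn is None:
        return None
    if conn.addr != src_addr:
        # Real QUIC validates the new path first; skipped here.
        conn.addr = src_addr
    return conn
```

The source address is just a field we update. The connection's identity never depended on it.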

But if your gut reaction is: “how dare they! this is a waste of bytes!” These bytes are very important, keep reading u nerd.

Stateless Load Balancing

I glossed over this, but OpenAI’s load balancers (like most) depend on shared state. Even if you have a sticky packet router, load balancers can still restart/crash. Something has to store the mapping from source IP/port -> backend server.

They’re using a Redis instance to store the mapping of source IP/port to backend server. Simple and easy, I approve.

But do you know what is even simpler and easier? Not having a database. Here’s how QUIC-LB does it:

When a client initiates a QUIC connection, the load balancer forwards the packet to a healthy backend server. The backend server completes the handshake and encodes its own ID into the CONNECTION_ID. That way every subsequent QUIC packet contains the ID of the backend server.

Now packets become trivial for load balancers to forward. They don’t need encryption keys or a routing table, just decode the first few bytes and forward it to that guy. It doesn’t even matter if the server reboots.
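A stripped-down sketch of the idea. This is NOT the actual QUIC-LB wire format: the real draft defines its own first-octet layout and can encrypt the server ID, while this uses a made-up plaintext layout (1 config byte, 2-byte server ID, 5 random bytes) just to show there's no table.

```python
import os
import struct

NONCE_LEN = 5  # random filler so each connection gets a distinct CID

def new_connection_id(server_id: int) -> bytes:
    """Backend: bake our own ID into the CID we hand to the client."""
    return bytes([0]) + struct.pack("!H", server_id) + os.urandom(NONCE_LEN)

def route(cid: bytes) -> int:
    """Load balancer: no lookup table, just decode the server ID."""
    return struct.unpack_from("!H", cid, 1)[0]
```

`route` is a pure function of the packet bytes. Restart the load balancer, add ten more, put them on different continents: they all give the same answer.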

Zero state also means zero global state. These load balancers could listen on a global anycast address and forward packets globally to the indicated backend server. Cloudflare uses this extensively; no need for a global Redis cluster.

Unpaid Shill: AWS NLB offers QUIC load balancing using QUIC-LB. Other cloud providers need to step up their game and offer it too.

Anycast + Unicast

Based on the OpenAI blog, it sounds like they assign connections to regional load balancers. Functional but lame. Anycast is way cooler.

I brought this up in my ancient Quic Powers blog post, but I’ll excuse you for not reading it (yet). QUIC has something called preferred_address that is a game changer for load balancing.

Let’s say we have thousands of backend servers around the world that could accept a new connection. We have them all advertise the same anycast address, ex. 1.2.3.4. When a client tries to connect to 1.2.3.4, the magic internet routers forward the packet to one of the servers.

Now, we could just use QUIC-LB and route traffic to the indicated backend. But that would be boring.

Instead, we can give each QUIC server a unique unicast address, ex. 5.6.7.8. The idea is that we use anycast for handshakes and unicast for stateful connections.

- Server: Listen for QUIC packets on `1.2.3.4` and `5.6.7.8`.

- Client: Sends a QUIC handshake packet to `1.2.3.4`.

- Server: Establishes the QUIC connection, indicating `preferred_address=5.6.7.8`.

- Client: Sends future packets to `5.6.7.8`.

When the server is overloaded and doesn’t want more connections, it stops advertising 1.2.3.4. We won’t drop existing connections because they’re safe on unicast.
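A tiny simulation of that flow, since I don't have the scale to build the real thing. Everything here is hypothetical: `Server`, `connect`, and the "first advertiser in the list wins" rule that stands in for actual internet routing.

```python
ANYCAST = "1.2.3.4"  # shared address from the example above

class Server:
    def __init__(self, unicast: str, max_conns: int):
        self.unicast = unicast
        self.max_conns = max_conns
        self.conns = 0

    @property
    def advertising(self) -> bool:
        # An overloaded server withdraws the anycast route.
        return self.conns < self.max_conns

def connect(servers):
    """Simulate a client dialing ANYCAST; returns the preferred_address."""
    for server in servers:   # stand-in for routers picking an advertiser
        if server.advertising:
            server.conns += 1
            return server.unicast   # the client migrates here after the handshake
    return None   # nobody is advertising
```

Once a server fills up it simply drops out of the loop for new clients, while the connections it already has keep talking to its unicast address.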

Just like that, no load balancers needed! The anycast address is basically a health check!

Holy shit I wish I actually had the scale to build this. Reach out if you work for the orange butthole company.

Looks something like this but orange.

Summary

WebRTC

- hurts your product

- hurts your load balancing

- hurts your dog, maybe

QUIC

- loves your product

- loves your load balancing

- loves your dog, definitely

I have labeled QUIC as the chad, therefore it is the superior protocol.

To Be Fair

I know many engineers at OpenAI and they are extremely bright. They’re dealing with unprecedented levels of stress. They MUST scale and they MUST scale now.

I’m just some guy who quit my job to work on a passion project. I literally spend my time tracing memes. It’s easy for me to judge from my lofty position, like a movie critic ranting about how they cast Jared Leto again?

I just don’t think the obvious solution is a good fit for Voice AI. And the obvious solution is very difficult to scale. WebRTC is Jared Leto. There I said it.

And I’ll be honest, MoQ isn’t a perfect fit for Voice AI either. It’ll work, but a lot of the cache/fanout semantics are useless for 1:1 audio. You should definitely use QUIC though.

Me

Anyway, hit me up if you want to chat: meself@kixel.me

I’m cool. You won’t regret it. Probably.

Written by @kixelated.