I previously discussed how the main codebase at Google enforces strict tooling and conventions to allow the codebase to scale. For many years, there was one glaring exception: the IDE.

Context: I worked at Google from 2011 to 2024. Some of the information might be approximate; I’ll update it if I receive corrections. This blog post focuses on the main monorepo at Google (google3).

A fragmented ecosystem

As in many companies, engineers at Google have been able to pick their IDE of choice, which resulted in a lot of fragmentation. In 2011, some of the most senior engineers were asked a question: “Is there a way to get a good uniform IDE for all Googlers?” The answer was essentially “No”. Among others, Jeff Dean replied:

“Trying to get a group of developers to all agree on a common editor is a recipe for unhappiness. Everyone has different opinions about what is important here, and the advantages and disadvantages of different systems are weighed differently by different people. In the end, it doesn’t matter that much.”

This was the prevalent opinion for years. After all, it doesn’t matter which IDE your colleagues use, as long as their code is good. But I worked at Google for 12 years on developer tools, and I sometimes wondered about it.

From a company productivity standpoint, you don’t want each engineer to spend too much time setting up their editor. And because engineers used different IDEs, useful integrations eventually had to be reimplemented everywhere: Bazel support, Starlark tooling, code formatters, code search integration, and so on. Google’s internal culture made this manageable. Engineers would often start tooling projects organically; others would discover them through the shared codebase and contribute. This kind of contribution is generally encouraged (through 20% time and peer bonuses). Critical projects would eventually become officially staffed. As an example, a team dedicated to the IntelliJ integration was formed around 2015.

Some people might wonder why you’d need a full dedicated team for this. Was the IDE not good enough in the first place? Part of the reason is that Google has a set of unique tools, and it just makes engineers more productive if you can give them a nice IDE integration. But also, some problems were caused by the sheer size of the monorepo. Traditional IDEs assumed that source code, build metadata, indexing and analysis all happened locally. At Google scale, that assumption starts to break down.

A Cloud IDE

Around 2013(?), something happened that I hadn’t anticipated. Some people started building a web-based editor, named Cider. The name is a reference to “Cloud IDE”, with a trailing “r” to get a more memorable name.

In a company where most tools are web-based, where people spend their time in the browser doing code reviews and navigating the codebase with Code Search, and where many people use Chromebooks, it actually makes sense to have a quick way to edit files from the browser.

What surprised me, though, is that Cider eventually became popular among engineers. At first, it was mostly used by technical writers who wanted to edit Markdown files without having to deal with version control. The workflow was very efficient for fixing typos. In one click, you would send the pull request, with an option to automatically merge it once approved. Nowadays GitHub has this kind of feature too, but at that time, it felt new to me.

Over time, the team added more and more developer-oriented features. The turning point came when they added support for code completion, through the Language Server Protocol.
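To give a rough idea of what a completion request looks like on the wire, here is a minimal sketch of a Language Server Protocol message. The `textDocument/completion` method and the `Content-Length` framing come from the public LSP specification; the helper names and the file URI are made up for illustration, and this says nothing about how Cider wired things internally.

```python
import json


def completion_request(request_id: int, uri: str, line: int, character: int) -> dict:
    """Build a JSON-RPC request asking for completions at a cursor position.

    Positions are zero-based in the LSP specification.
    """
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "textDocument/completion",
        "params": {
            "textDocument": {"uri": uri},
            "position": {"line": line, "character": character},
        },
    }


def frame_lsp_message(payload: dict) -> bytes:
    """Prefix the JSON body with the Content-Length header used by LSP."""
    body = json.dumps(payload, separators=(",", ":")).encode("utf-8")
    header = f"Content-Length: {len(body)}\r\n\r\n".encode("ascii")
    return header + body


if __name__ == "__main__":
    # Hypothetical file URI, used only for this example.
    request = completion_request(1, "file:///example/main.go", line=10, character=4)
    print(frame_lsp_message(request).decode("utf-8"))
```

The point of the protocol is that the editor only needs to speak this generic message format; the language-specific intelligence lives in whatever server answers the request.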

Cider was a light client that opened much faster than traditional IDEs. All the magic happened on a backend that indexed the entire codebase, so that all the data was ready whenever someone opened the webpage.

Code intelligence requires connecting each identifier with its type and references. This forms a huge language graph that has to be updated at every commit. And well… the codebase receives many commits per second. But the IDE also needs access to historical data. If I’m working on a project and my colleague merges their code, I don’t want to pick up the changes immediately. So my editor needs to use the graph corresponding to my last sync date… augmented with my local changes, obviously.
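The idea of “the graph at my last sync, plus my local changes” can be sketched as an overlay on top of a versioned index. This is a toy model under strong assumptions: the class names, the flat symbol-to-definition mapping, and the integer revisions are all hypothetical, and a real backend would not copy a full snapshot per commit.

```python
from typing import Dict, Optional


class CommittedIndex:
    """Toy versioned index: one symbol table per committed revision."""

    def __init__(self) -> None:
        self._snapshots: Dict[int, Dict[str, str]] = {}

    def commit(self, revision: int, changes: Dict[str, str]) -> None:
        # Each snapshot is the previous one plus this commit's changes.
        # (Full copies keep the sketch simple; they would not scale.)
        previous = self.at(revision)
        self._snapshots[revision] = {**previous, **changes}

    def at(self, revision: int) -> Dict[str, str]:
        # Return the latest snapshot at or before the given revision.
        eligible = [r for r in self._snapshots if r <= revision]
        return self._snapshots[max(eligible)] if eligible else {}


class WorkspaceView:
    """What one editor session sees: a pinned snapshot plus local edits."""

    def __init__(self, index: CommittedIndex, synced_revision: int) -> None:
        self._index = index
        self.synced_revision = synced_revision
        self._local: Dict[str, str] = {}

    def edit(self, symbol: str, definition: str) -> None:
        self._local[symbol] = definition

    def sync(self, revision: int) -> None:
        # Move the pinned snapshot forward; local edits are kept.
        self.synced_revision = revision

    def lookup(self, symbol: str) -> Optional[str]:
        # Local edits win; otherwise fall back to the pinned snapshot.
        if symbol in self._local:
            return self._local[symbol]
        return self._index.at(self.synced_revision).get(symbol)
```

In this model, a colleague's commit at a later revision is invisible to my `WorkspaceView` until I call `sync`, while my own uncommitted edits are visible immediately.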

With this kind of feature, the popularity of Cider continued to rise among certain demographics. For example, it was much easier to convince Go developers to switch than Java developers (who expected a much more advanced editor). But the joy of searching and having cross-references across a billion files is real.

Cider V: Using VSCode as a frontend

The investment in the backend could be justified: it was solving Google-specific problems and there was no good alternative to it. But the frontend felt quite limited: it was good for quick fixes, but it couldn’t compete with actual IDEs.

The direction changed in 2020, when I joined the team as one of the tech leads. At that time, Cider was the dominant IDE in the company and the question of its future came up. It was decided to use the VSCode frontend in Cider. It was a natural fit: VSCode was already dominating the IDE landscape, it was language-agnostic, extensible and built for the web.

By switching to the VSCode frontend, we inherited a mature editor, a large extension ecosystem and years of existing features. Many Cider feature requests were already solved problems in VSCode. More importantly, the extension system would unlock teams across the company and remove the Cider team from the critical path.

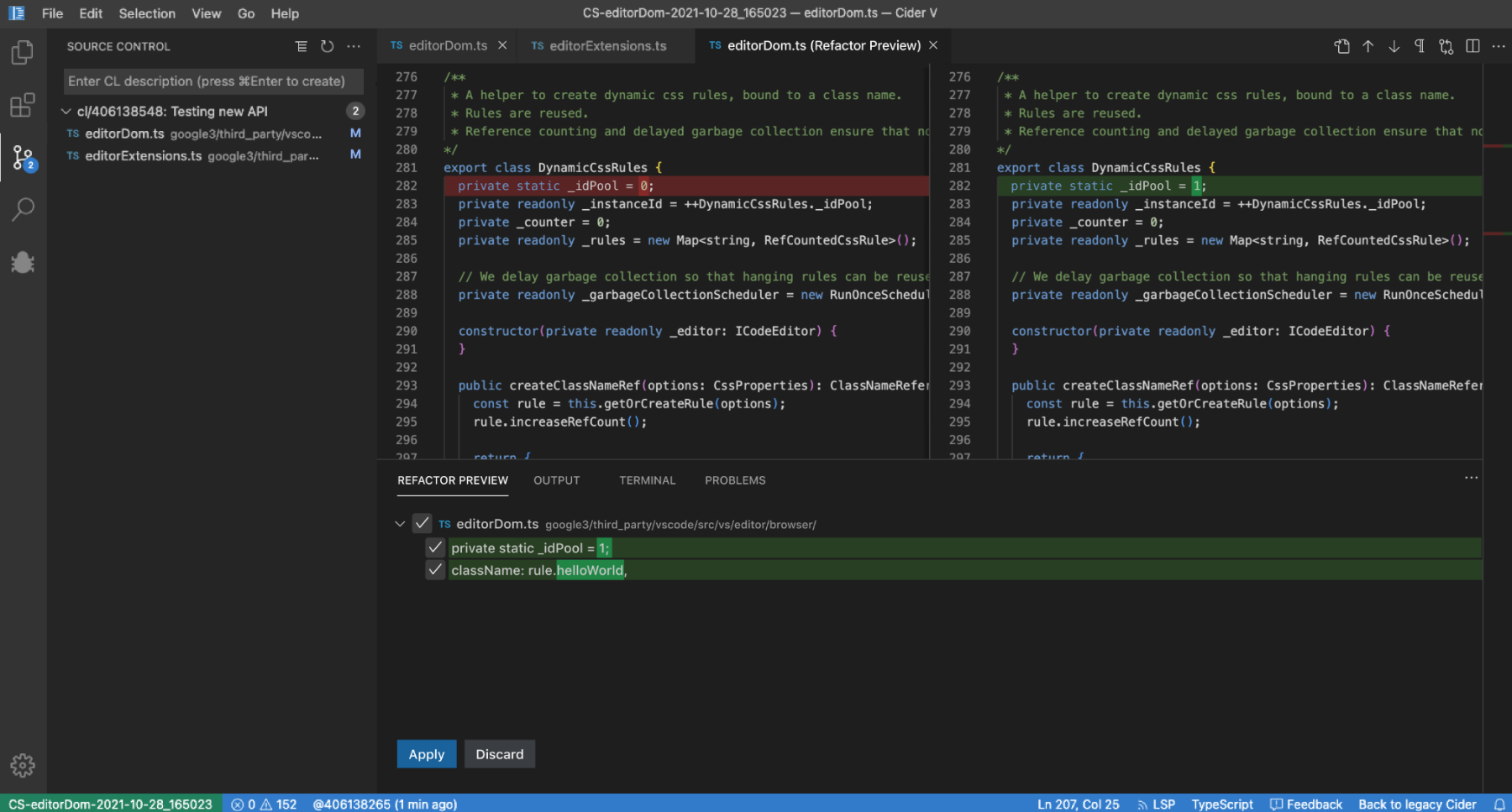

Screenshot of Cider V, 2022

Even with a dozen engineers in the frontend team, it took a couple of years to build a complete successor to Cider. In 2021, the open beta was used by 5000 engineers, but a lot of work remained to integrate everything and polish the experience. The team had to support version control; integrate the code review tool; provide code completion and refactoring features using the Cider backend; redesign the way extensions are shipped and updated; etc.

Many users were passionate and used to the Cider editor, and expected every little detail to be the same in Cider V. Small workflow changes or an extra click here and there could become an adoption blocker for some users. So the polish part of the project required months of iterations. Even color schemes generated an absurd amount of discussion. As Joshua Bloch observed back in 2011, “the only thing that generates more religious fervour than programming languages is text editors and IDEs.”

I could also write about the interactions with the VSCode engineers and how we contributed changes back to VSCode, but this blog post is long enough. I’ll try to write more about it one day. But let’s say that we had to maintain our local fork, update monthly, and we tried as much as possible to reduce our local hacks and align with the upstream code.

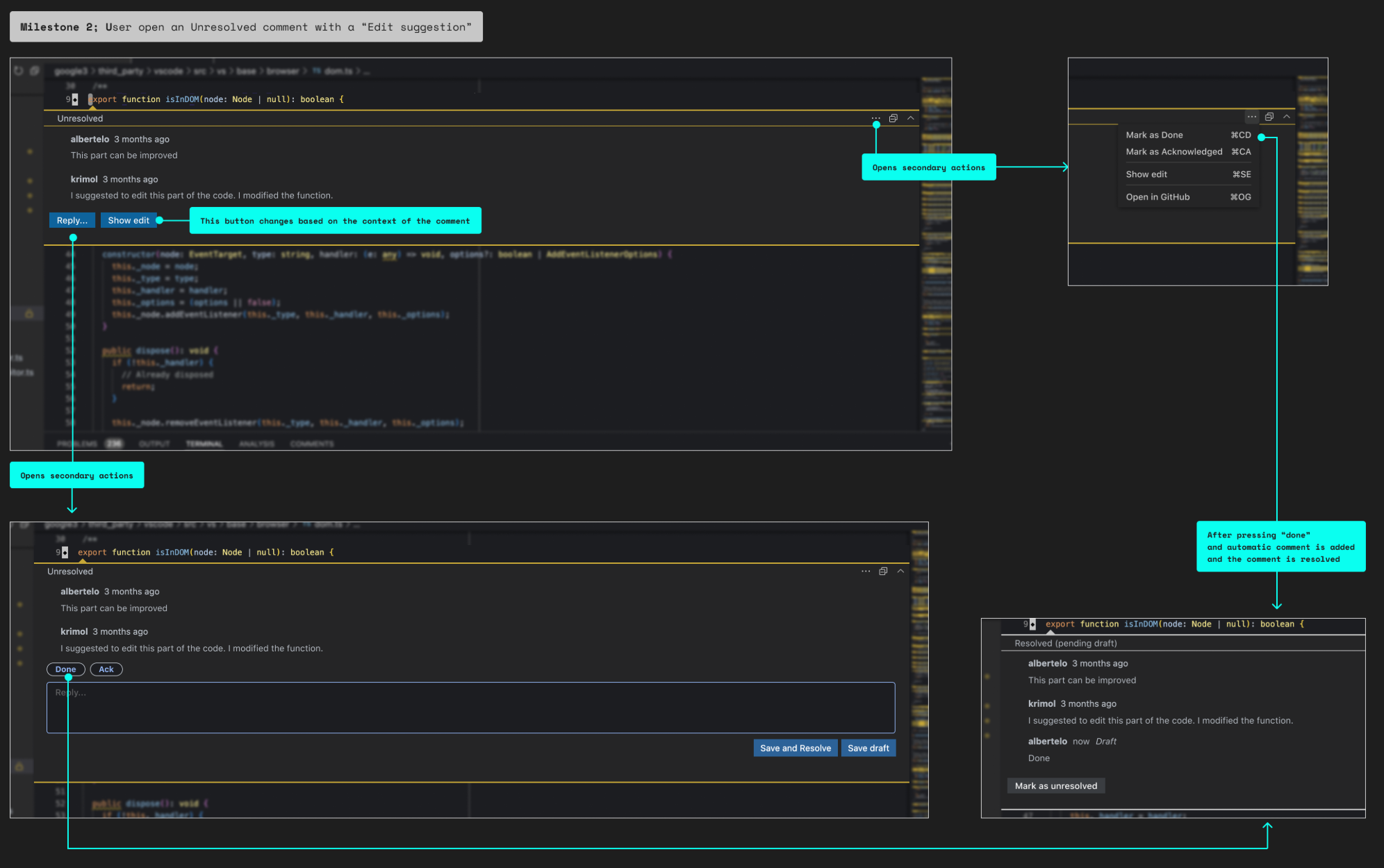

Design exploration for the code review integration, 2022

A Uniform IDE

I started the blog post with a question about a “uniform IDE for all Googlers”. It didn’t completely happen but, by 2023, 80% of the development in the main Google codebase happened in Cider V (and the number kept increasing).

Each IDE has its pros and cons, but Cider attracted users by having the best integrations with the company tools, such as excellent version control support and a code review integration where the reviewer comments are shown inline in the editor.

What I found most exciting were the side effects of having most users on the same tool. It meant that we could invest more resources in the tool (because each change has more impact). I was tech lead for the IDE extensibility and, soon, teams across the company reached out and started developing their own extensions to improve their specific workflows. After two years, around 100 internal extensions were being developed. This enabled many scenarios that were previously infeasible.

In 2023, management pushed all the teams to integrate more and more AI features. This led to cool features such as Resolving Code Review Comments with Machine Learning and Smart Paste for context-aware adjustments to pasted code. And of course AI code completion.

As more AI features are integrated into the IDE, the advantages of having a single, extensible platform become even more obvious. Of course, it was very expensive and very few companies can justify this kind of work. But I believe that the move to a “standard” (even if it’s not mandated) IDE has been very impactful.

In the end, standard tooling creates leverage.
