§01

A senior developer is a problem avoider

When I join a team there are two kinds of senior developers I meet.

The first kind says things like:

“I found this new tool and it’s pretty cool …”

“This company <company totally unlike the one we’re in> does things this way, so …”

“Here, look at this HackerNews post that says this is best practice, we should probably …”

I don’t like this kind of senior developer. A little self-protective, lots of time spent in the industry, probably a good people person.

But not my wavelength.

Then there’s also this kind of senior developer:

“Do we really need that?”

“What happens if we don’t do this?”

“Can we make do for now? Maybe come back to this later when it becomes more important?”

Ah, baby, this is my senior developer. The avoider, the reducer, the recycler. They want to avoid development as much as they can.

Why? Because they hunt a singular monster in professional software development: complexity.

Special cases, if conditions, new database tables, new components. All yuck yucks. The senior developer wants as little of this as possible, spending lots of time making sure they absolutely need to add more code.

Because adding to a system is risking more complexity.

Yes, yes, of course this is simplistic. There are senior developers who excel at taking on unsolved problems and finding new creative designs.

But eventually, if you’re taking responsibility for a working system, you’re scared of complexity.

Now, why is that? What’s the downside of complexity? And why doesn’t anybody else get it?

§02

The rest of the business is scared of uncertainty

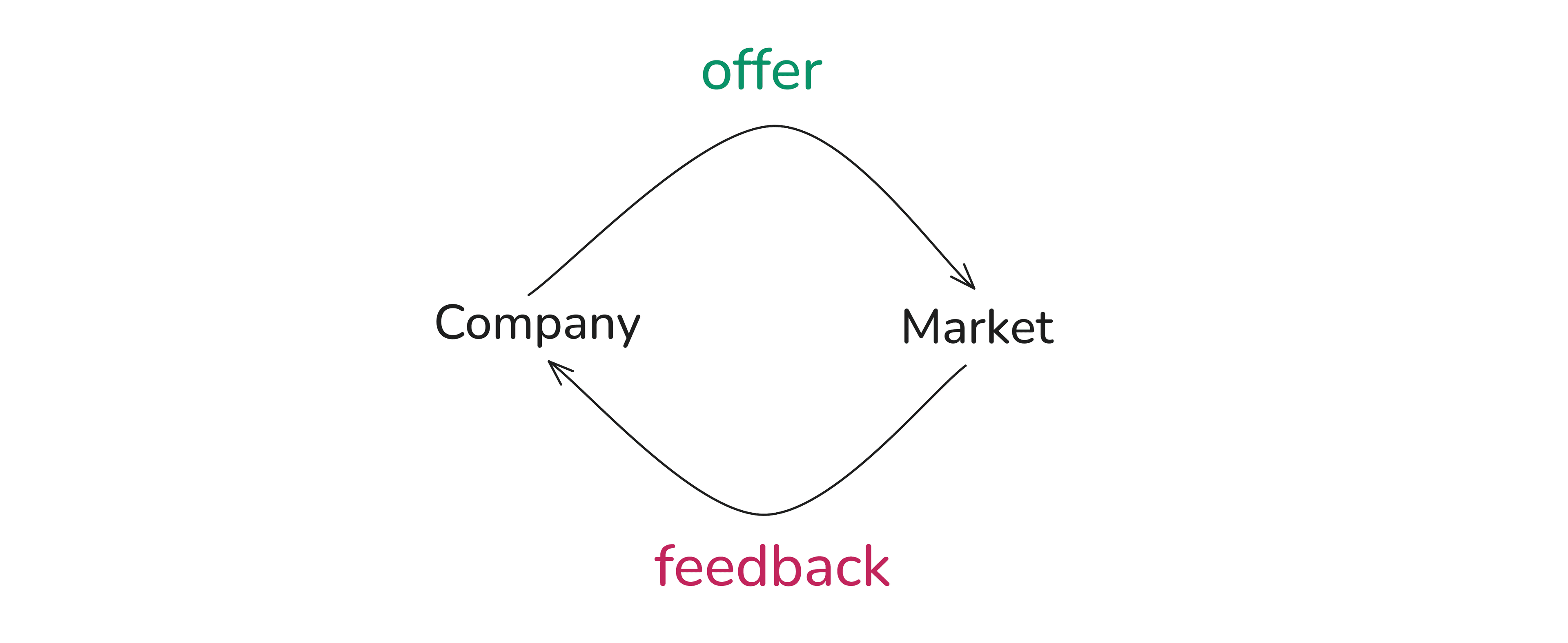

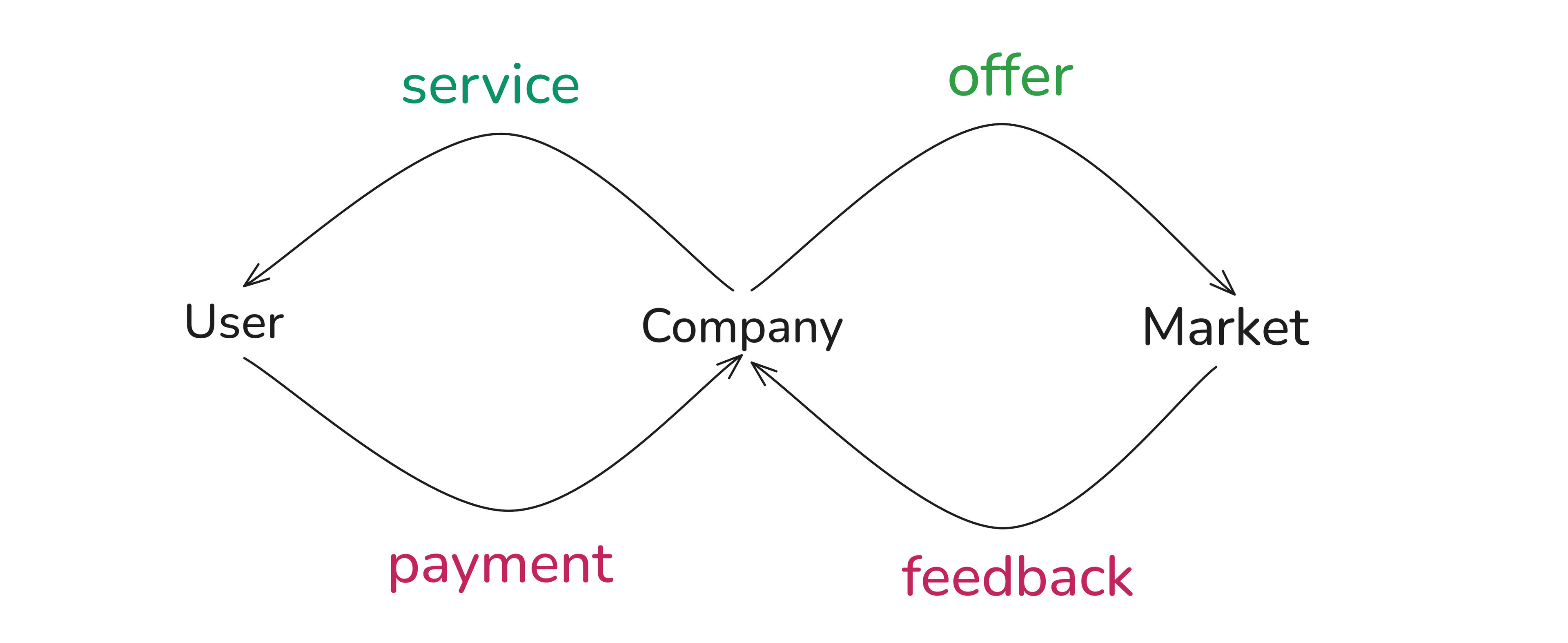

We’re going to be simplifying what a business is using two loops.

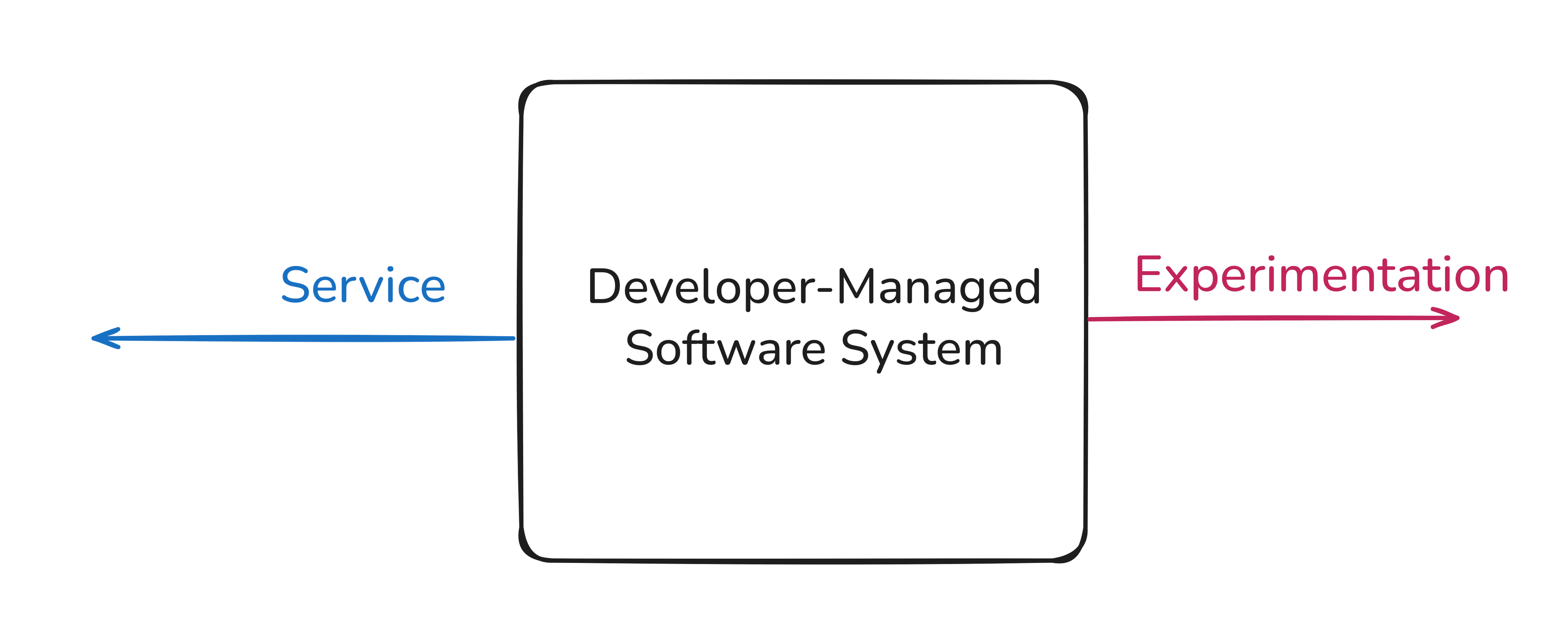

This is the first loop; marketers, salespeople, product managers, the CEO, they all live here:

The main goal of this loop is to try and learn. The business wants to take things to market and then get feedback on whether they’ve got something valuable or not.

The monster, for people in this loop, is uncertainty.

And uncertainty is cruel because no strategy is guaranteed to work. Combine it with a ticking clock (compensation for marketing and sales, payroll for founders, data for product managers) and taking things to market as fast as possible can feel like the only way to reduce uncertainty before a deadline. The more you take to market, the more feedback you get from it, and the more you can (potentially) reduce uncertainty.

This loop, and all companies start with this loop, is about pure, raw, speed.

But what happens when a business gets customers?

§03

Senior developers care a lot about stability

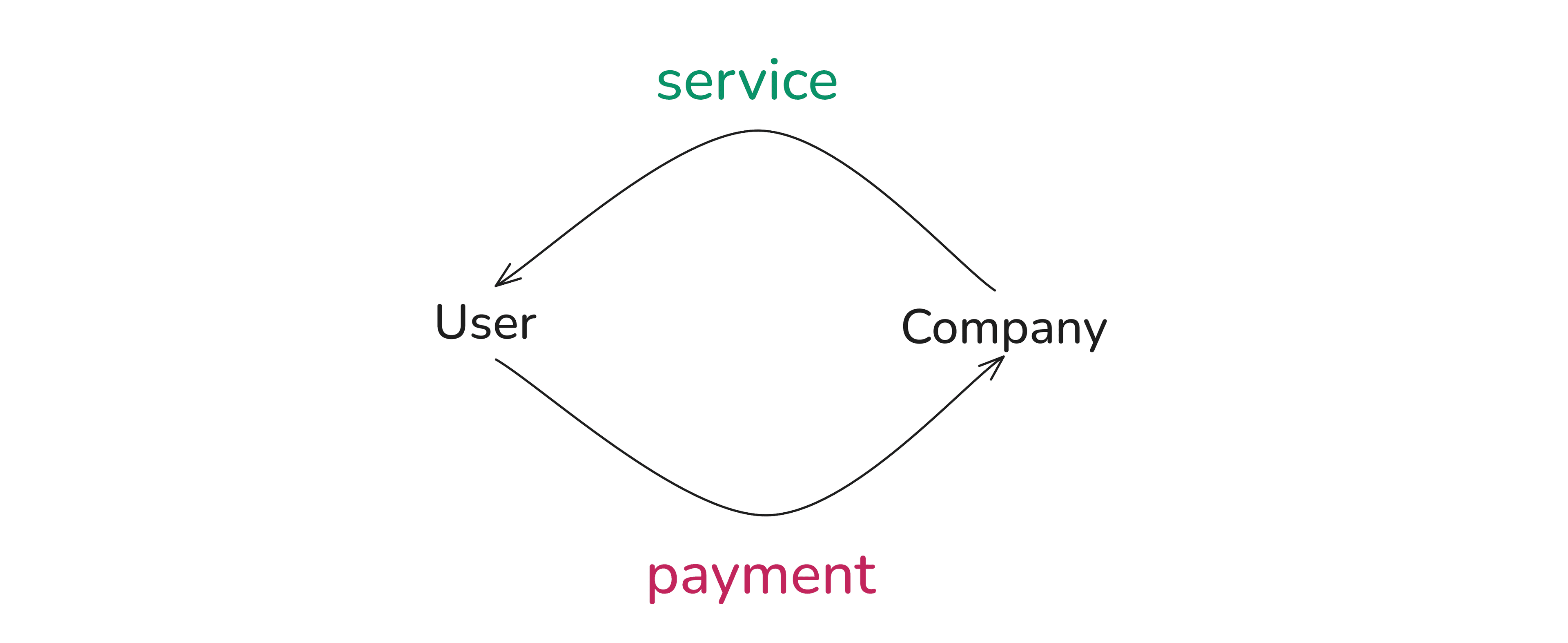

Ah, now, here’s our second loop. People paying for a service.

This loop is where a lot of senior developers find themselves. The main goal in this loop is the continuation and guarantee of service.

Keep things working, keep things understandable, keep things debuggable, keep things fixable, keep things teachable, keep things stable.

Senior developers worry about stability because they take responsibility for the business to continue serving customers.

And what risks all of that?

Complexity.

It makes a system less understandable, less debuggable, less fixable, less teachable, and ultimately, less stable.

Rising complexity = lowering stability = senior developer failing responsibility = bad bad not nice, payments interrupted, everybody sad.

So, if the first loop’s goal was uncertainty reduction, the second loop’s goal is complexity management.

But why does this lead to communication failure?

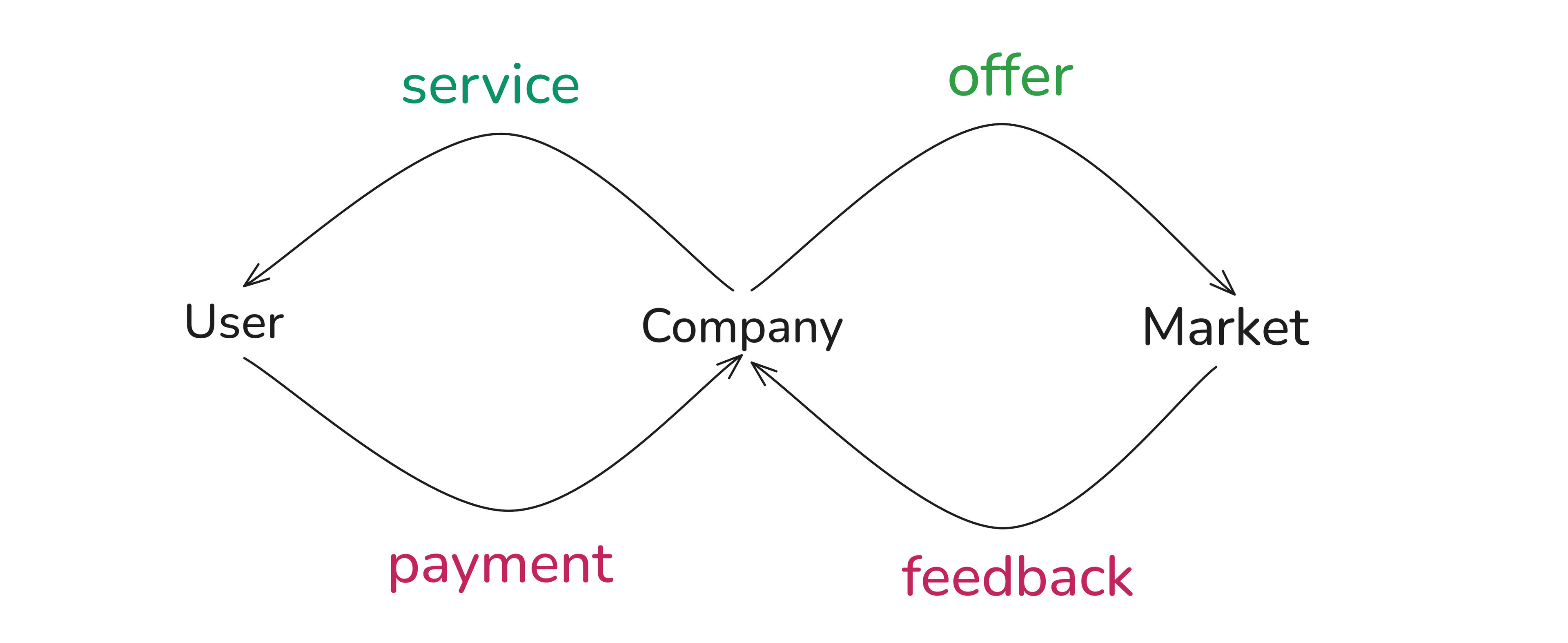

Because once you have customers, both loops are running simultaneously. A business needs to both explore possibilities and serve customers at the same time.

Ok, now you might be able to spot my answer to the question in the title of this post.

Depending on which loop you spend your time in, your problem is framed differently (which is why I think developers are split in their opinions on AI; some work more in one loop than the other).

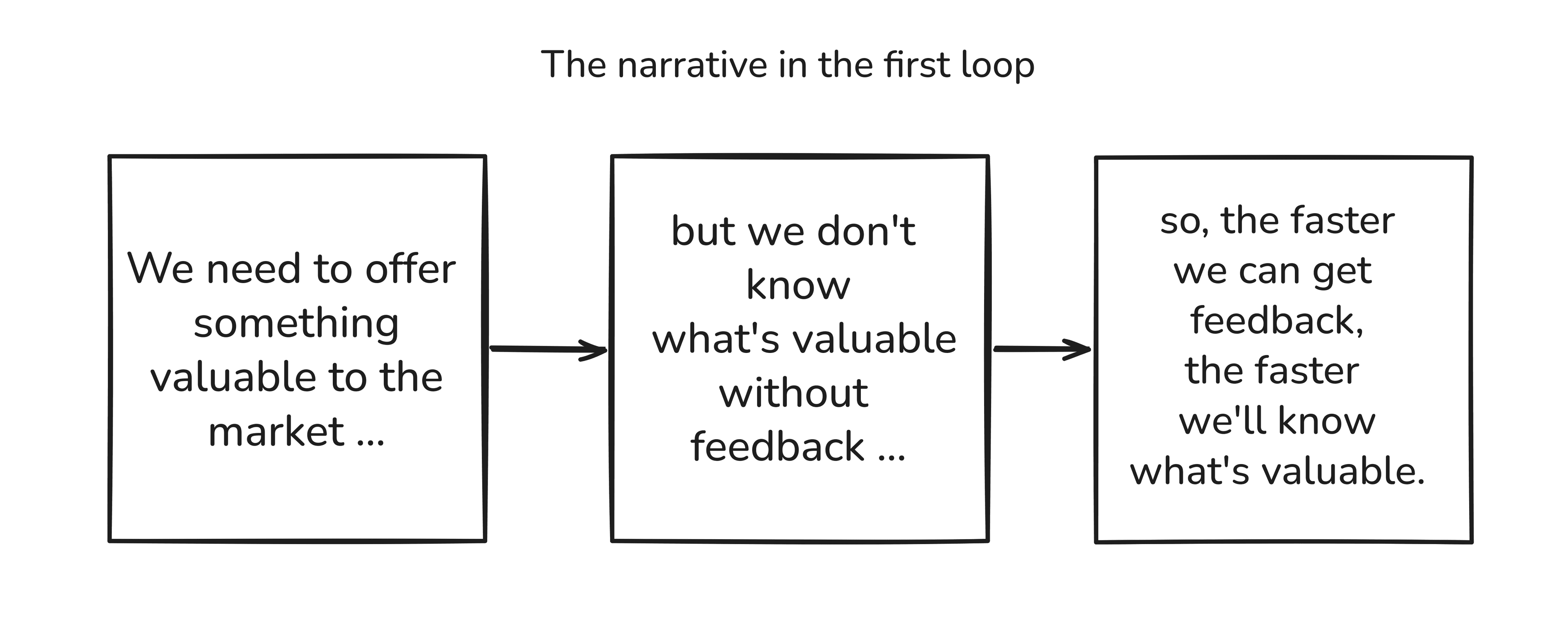

This was the story of the people in the first loop:

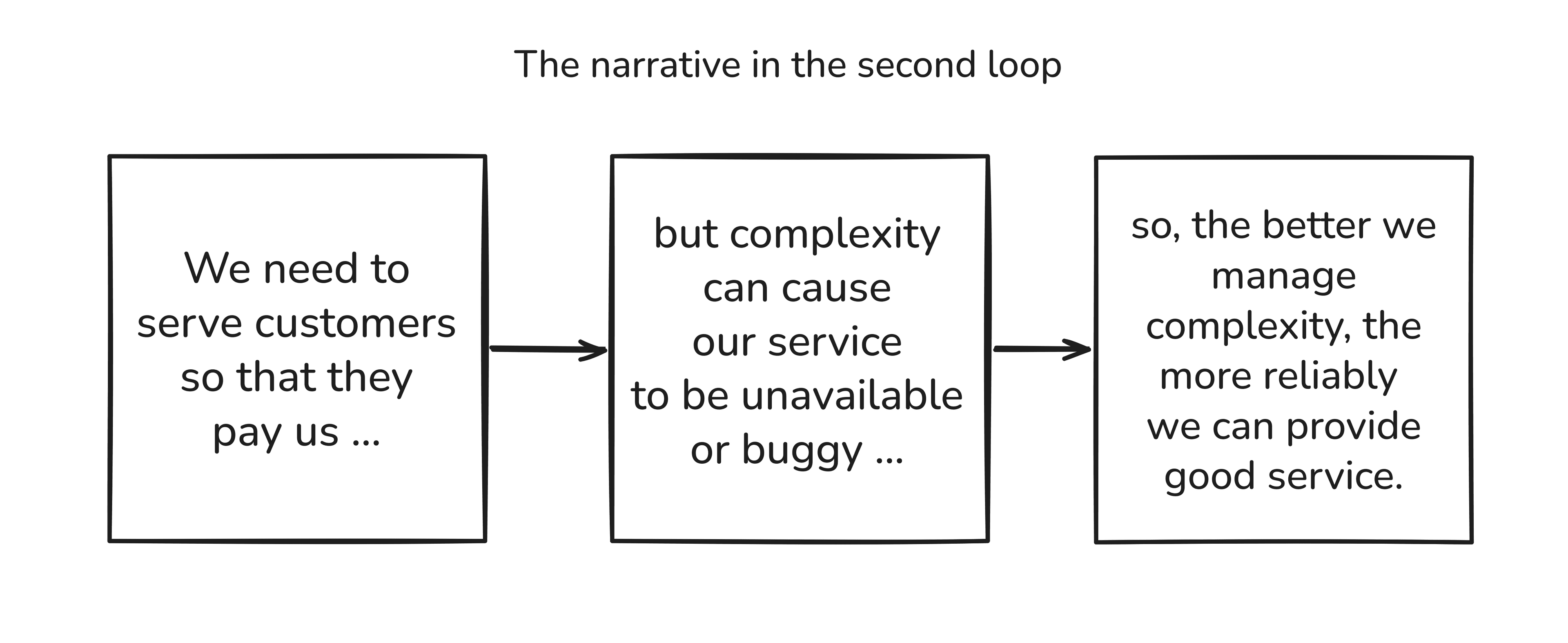

But this was the story of the senior developer in the second loop:

The stories don’t match.

The more requests to build and add to the system the senior developer gets, the more the senior developer wants to respond with “uhhh, no complexity … maintenance costs … understandability … speed of continuing development … productivity over time …”.

But that does nothing to address the rest of the business’s need for reducing uncertainty.

The copywriter’s diagnosis: You can’t explain away someone else’s problem using your own problems.

And the copywriter’s prescription: You need to describe your solution as a solution to their problem as well.

Senior developers fail to communicate because they express their problems in terms of complexity management when they should be expressing their solutions in terms of uncertainty reduction.

By acknowledging that what the rest of the company is seeking is uncertainty reduction, the senior developer can use their expertise to help.

And what’s the most useful skill a senior developer has? The reluctance to build what’s not necessary; the ability to spot an opportunity to re-use something already built.

Need to collect survey data? Google forms, baby.

Need to build a whole new feature to test it? Have you tried putting a button in the existing UI and seeing if people click it?

Need a new analytics service? What’s the most important decision we need analytics for? Can we start with one decision, one chart, one metric?

You want to bake me a whole birthday cake? Just put a candle on my sandwich.
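The button idea above is sometimes called a fake-door test, and it needs very little code. Here is a minimal sketch in Python, assuming you can hook into wherever the existing UI renders and handles the button. The class name, the 5% threshold, and the 100-impression minimum are all made up for illustration; pick numbers that fit the decision you’re actually trying to make.

```python
from dataclasses import dataclass


@dataclass
class FakeDoorTest:
    """Counts how often a placeholder button is shown and clicked.

    Hypothetical helper for illustration; not part of any library.
    """

    name: str
    impressions: int = 0
    clicks: int = 0

    def record_impression(self) -> None:
        # Call this whenever the button is rendered for a user.
        self.impressions += 1

    def record_click(self) -> None:
        # Call this whenever a user clicks the button.
        self.clicks += 1

    def click_rate(self) -> float:
        # With no impressions there is no evidence yet, so return 0.0
        # instead of dividing by zero.
        if self.impressions == 0:
            return 0.0
        return self.clicks / self.impressions

    def worth_building(
        self, threshold: float = 0.05, min_impressions: int = 100
    ) -> bool:
        # Both defaults are assumptions. The impression minimum guards
        # against deciding on a handful of views.
        return (
            self.impressions >= min_impressions
            and self.click_rate() >= threshold
        )


# Example usage with invented numbers.
test = FakeDoorTest("export-to-pdf")
for _ in range(200):
    test.record_impression()
for _ in range(14):
    test.record_click()
print(test.name, test.click_rate(), test.worth_building())
```

Two counters and one comparison. If the button gets clicks, you’ve earned the right to build the feature; if it doesn’t, you’ve avoided it.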

This is what senior developers learn to do: they learn how to give people what they want by being resourceful with existing software.

But how do you communicate this without sending people whole essays?

Copywriters love boiling down multiple signals into singular phrases. And so, here’s the magical phrase every senior developer must learn: ‘Can we try something quicker?’

The use of ‘quicker’ acknowledges what they’re really looking for; ‘something’ implies another way of achieving it; ‘try’ implies imperfection, but also the possibility of it being good enough.

It perfectly cuts down to the requirement of the rest of the company, speed to reduce uncertainty, while allowing the senior developer to exercise their expertise: reduce, re-use, and if life is truly a blessing, avoid.

That’s it. That’s my answer to the title of the post: senior developers talk in terms of complexity when everyone else is worried about uncertainty.

But! Big but!

AI now seems to make all of this pointless, doesn’t it? Why reduce? Why re-use? Why avoid? The AI can build so much in so little time.

Ah, well, it can’t yet do the one thing senior developers still do.

Take responsibility.

§04

Senior developers as editors more than writers

Senior developers care a lot about understanding the system because understanding allows fixing it when things go wrong. It allows extending it intelligently when the system needs to grow. It allows, more than anything, the continued, reliable servicing of paying customers.

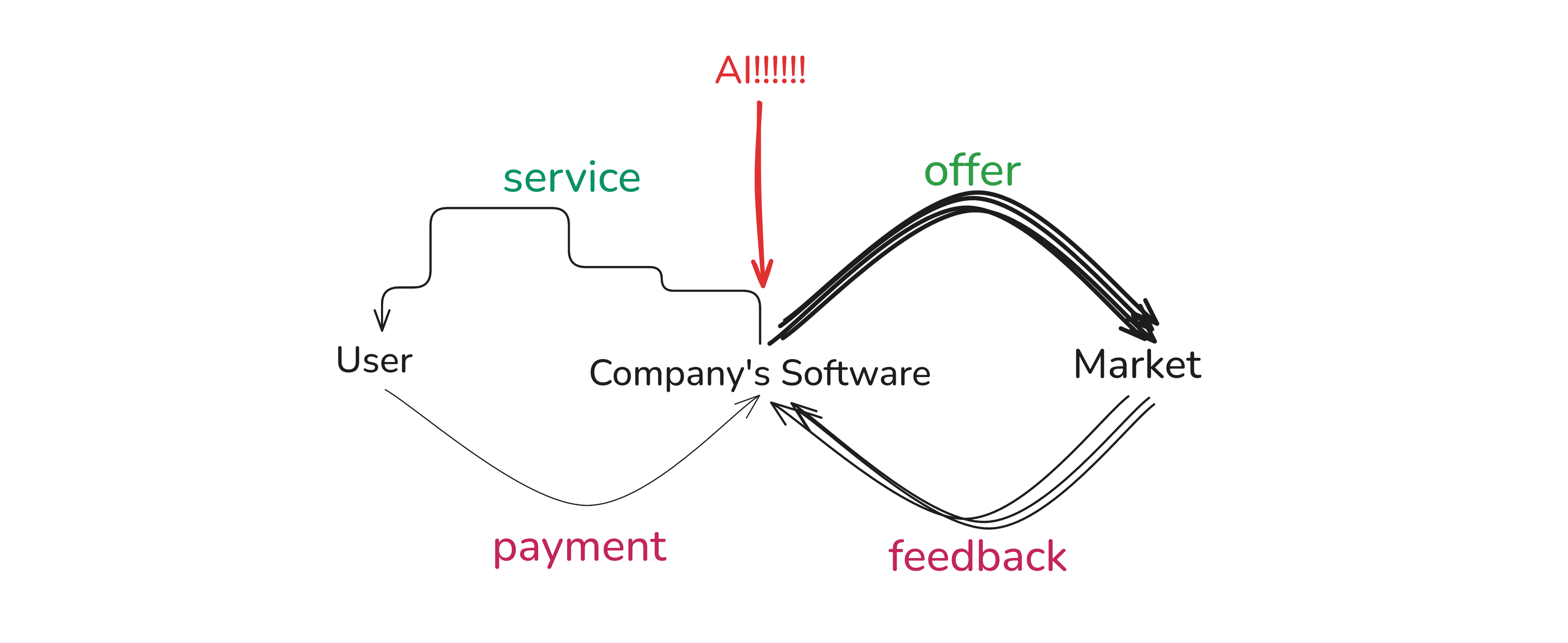

AI threatens this understandability. It is incredible at improving the speed of taking things to the market, but it also affects the other loop, the one the senior developers are responsible for.

If you have a bunch of AI agents, junior developers, non-developers, and your investors and their mothers adding code into the system, you get a system that overcompensates for speed by giving up stability.

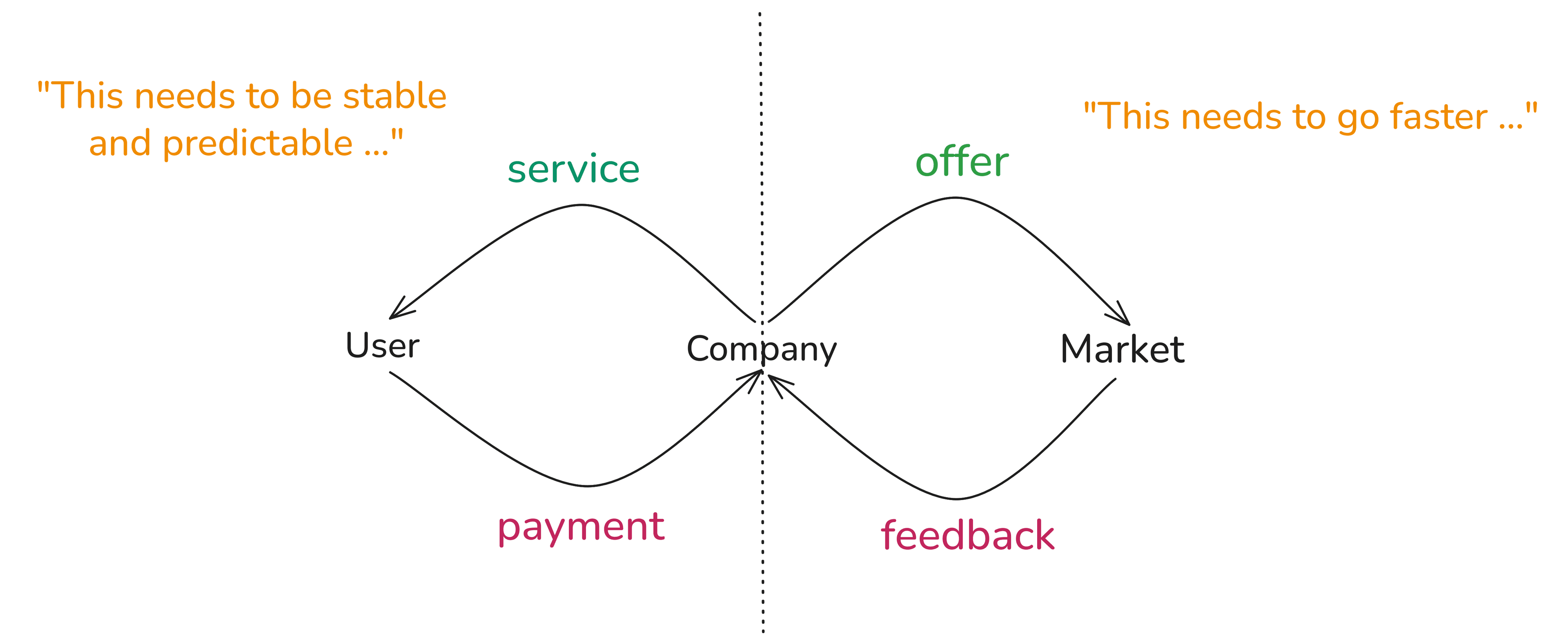

This was the business in two loops:

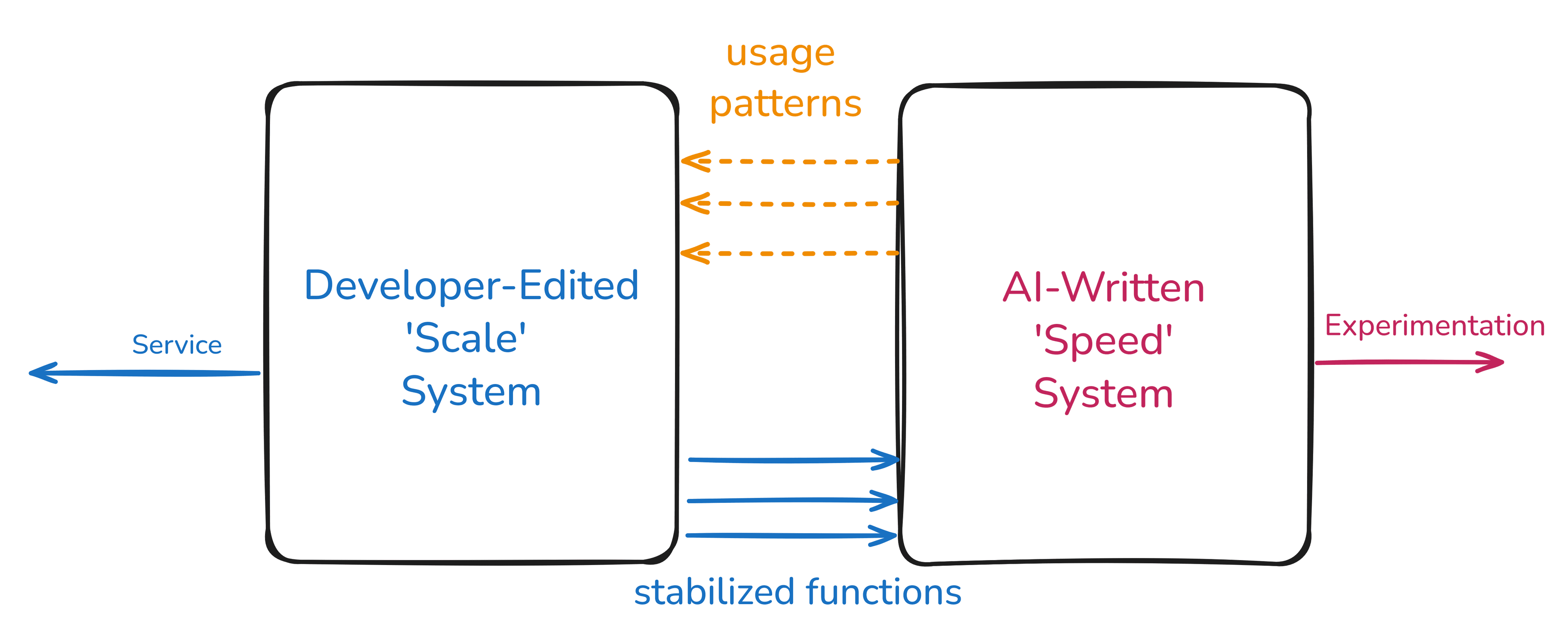

And this is how AI affects the two loops:

Forget maintaining stability, AI is a downright destabilizer. It worsens understandability, fixability, debuggability, teachability, guaranteability, all the bloody bilities.

AI does this and takes no responsibility.

Not nice. This is the senior developer’s main worry that’s being brushed away.

Luckily, senior developers have a few tricks up their sleeve.

Namely: decoupling.

For the longest time, software developers were the only ones who could build software. They were responsible for both loops.

That’s one system supporting two goals.

What if we had two systems, one for each goal?

An analogy: a fiction writer rushes to complete a first draft (often called a vomit draft) and later extracts what’s working and gets rid of what’s not. There’s an editing process after the initial rapid write. The editor’s job is to take the bits that are working well and shape them into a cohesive whole.

What if we had one system just for speed? Everyone focused on bringing things to life could work here. AI agents, our own generated and unreviewed code, junior devs, marketing etc.

We could call this the ‘Speed’ version of the system. It’s not meant to be understandable; the goal is getting things good enough to take to the market for feedback.

And then what if we had a second system focused on stability?

We could call this the ‘Scale’ version of the system. It’s designed by senior developers to be stable, understandable, and scalable.

The ‘Speed’ version allows the rest of the business to continue learning from the market, as the senior developers build a trailing version of the system that’s well-reviewed and understandable.

Plus, the design of the ‘Scale’ version is influenced by what worked and what didn’t in the ‘Speed’ version of the system.

Features get built on ‘Speed’ but then stabilized on ‘Scale’.

What this looks like in practice might be unclear, but the idea is to have a well-communicated decoupling that makes clear there’s a difference between going for speed and going for stability.

Imagine you get asked to build something ambitious, and you say:

“Sure, I’ll have the Speed version ready in 3 days. Then the Scale version in about 6 weeks.”

They get what they want, speed and momentum. You get what you want, observation and design.

Maybe?

Your thoughts, senior software developer?

Or should I say, senior software editor?